Steinbjörn Jónsson

Unsupervised Single-shot Depth Estimation using Perceptual Reconstruction

Feb 16, 2022

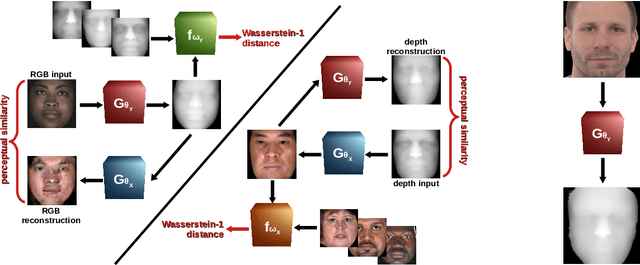

Abstract: Real-time estimation of actual object depth is an essential module for various autonomous system tasks such as 3D reconstruction, scene understanding and condition assessment of machinery parts. Over the last decade, the extensive application of deep learning methods to computer vision tasks has yielded approaches that achieve realistic depth synthesis from a simple RGB modality. While most of these models rely on paired depth data or on the availability of video sequences and stereo images, single-view depth synthesis in a fully unsupervised setting has hardly been explored. This study leverages recent advances in generative neural networks to perform fully unsupervised single-shot depth synthesis. Two generators, one for RGB-to-depth and one for depth-to-RGB transfer, are implemented and optimized simultaneously using the Wasserstein-1 distance and a novel perceptual reconstruction term. To demonstrate the plausibility of the proposed method, we comprehensively evaluate the models on industrial surface depth data as well as on the Texas 3D Face Recognition Database and the SURREAL dataset of human body depth. The success observed in this study suggests great potential for unsupervised single-shot depth estimation in real-world applications.
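The Wasserstein-1 distance used to optimize the generators can be illustrated in one dimension, where it has a closed form: optimal transport simply pairs the sorted values of two equal-size samples. The sketch below is only an illustration of that quantity, not the paper's training code; the function name `wasserstein1_1d` and the sample values are hypothetical.

```python
from typing import Sequence


def wasserstein1_1d(u: Sequence[float], v: Sequence[float]) -> float:
    """Wasserstein-1 distance between two equal-size 1D empirical samples.

    In one dimension the optimal transport plan matches sorted values,
    so W1 equals the mean absolute difference of the sorted samples.
    """
    if len(u) != len(v) or len(u) == 0:
        raise ValueError("samples must be non-empty and of equal size")
    return sum(abs(a - b) for a, b in zip(sorted(u), sorted(v))) / len(u)


# Hypothetical depth values from a "real" and a "generated" batch
real = [0.1, 0.4, 0.35, 0.8]
fake = [0.2, 0.5, 0.3, 0.9]
print(wasserstein1_1d(real, fake))  # approximately 0.0875
```

For high-dimensional images no such closed form exists, which is why a Wasserstein GAN instead trains a critic constrained to be 1-Lipschitz and estimates W1 through the Kantorovich-Rubinstein dual.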

Unpaired Single-Image Depth Synthesis with cycle-consistent Wasserstein GANs

Mar 31, 2021

Abstract: Real-time estimation of actual environment depth is an essential module for various autonomous system tasks such as localization, obstacle detection and pose estimation. Over the last decade, the extensive application of deep learning methods to computer vision tasks has yielded successful approaches for realistic depth synthesis from a simple RGB modality. While most of these models rely on paired depth data or on the availability of video sequences and stereo images, methods addressing single-image depth synthesis in an unsupervised manner are lacking. Therefore, this study leverages the latest advances in generative neural networks for fully unsupervised single-image depth synthesis. More precisely, two cycle-consistent generators for RGB-to-depth and depth-to-RGB transfer are implemented and optimized simultaneously using the Wasserstein-1 distance. To demonstrate the plausibility of the proposed method, we apply the models to a self-acquired industrial dataset as well as to the renowned NYU Depth v2 dataset, which allows comparison with existing approaches. The success observed in this study suggests high potential for unpaired single-image depth estimation in real-world applications.
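The cycle-consistency idea behind the two generators can be sketched as follows. This assumes an L1 cycle penalty as popularized by CycleGAN; the abstract does not state the exact form. Images are flattened to lists of floats, and the generator callables and toy functions below are hypothetical stand-ins for the two networks.

```python
from typing import Callable, List

Image = List[float]  # hypothetical: a flattened image as a list of pixel values


def l1(a: Image, b: Image) -> float:
    """Mean absolute error between two equally sized flattened images."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("images must be non-empty and of equal size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(
    rgb_batch: List[Image],
    depth_batch: List[Image],
    g_rgb2depth: Callable[[Image], Image],
    g_depth2rgb: Callable[[Image], Image],
) -> float:
    """Forward (RGB -> depth -> RGB) plus backward (depth -> RGB -> depth) cycle loss."""
    forward = sum(l1(g_depth2rgb(g_rgb2depth(x)), x) for x in rgb_batch) / len(rgb_batch)
    backward = sum(l1(g_rgb2depth(g_depth2rgb(y)), y) for y in depth_batch) / len(depth_batch)
    return forward + backward


# Toy "generators": exact inverses of each other, so both cycles are lossless
def double(img: Image) -> Image:
    return [2 * v for v in img]


def halve(img: Image) -> Image:
    return [v / 2 for v in img]


print(cycle_consistency_loss([[1.0, 2.0]], [[0.5, 0.5]], double, halve))  # 0.0
```

In a full setup this term would be added, with some weighting factor, to the adversarial Wasserstein losses of both generators; the weighting is not stated in the abstract.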

Machine Learning for Nondestructive Wear Assessment in Large Internal Combustion Engines

Mar 15, 2021

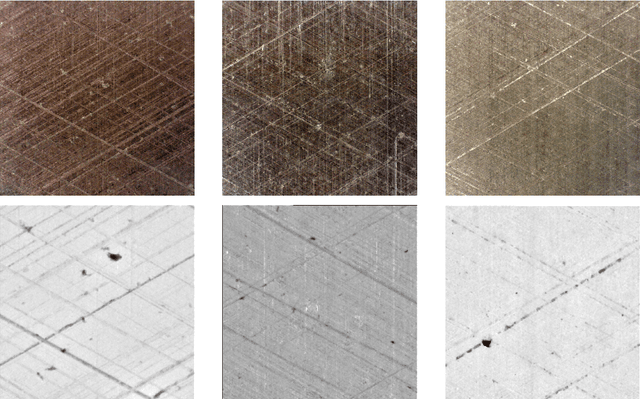

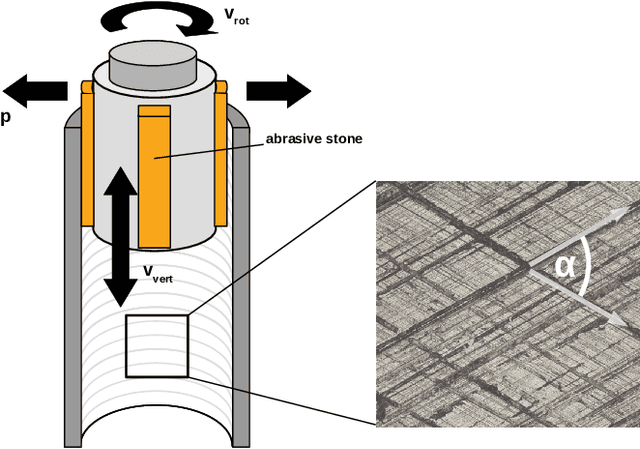

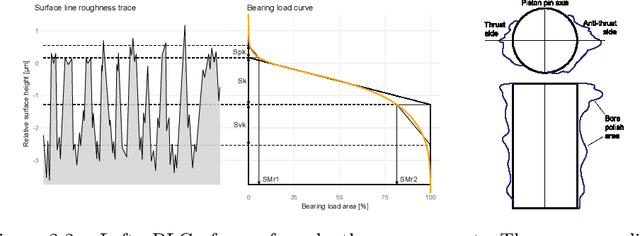

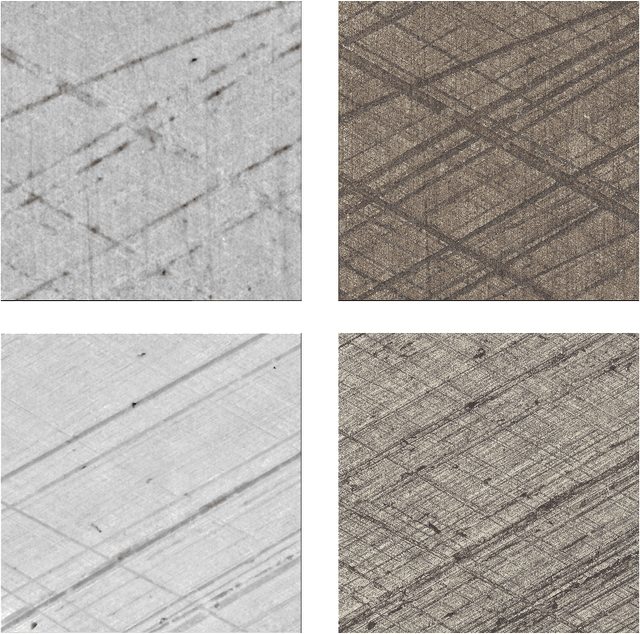

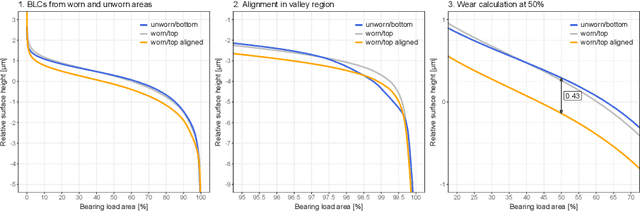

Abstract: Digitalization offers a large number of promising tools for large internal combustion engines, such as condition monitoring or condition-based maintenance. This includes the status evaluation of key engine components such as cylinder liners, whose inner surfaces are subject to constant wear due to their movement relative to the pistons. Existing state-of-the-art methods for quantifying wear require disassembly and cutting of the examined liner, followed by a high-resolution microscopic surface depth measurement that quantitatively evaluates wear based on bearing load curves (also known as Abbott-Firestone curves). Such reference methods are destructive, time-consuming and costly. The goal of the research presented here is to develop simpler, nondestructive, yet reliable and meaningful methods for evaluating wear condition. A deep-learning framework is proposed that computes the surface-representing bearing load curves from reflection RGB images of the liner surface, which can be collected with a simple handheld device without removing and destroying the investigated liner. For this purpose, a convolutional neural network is trained to estimate the bearing load curve of the corresponding depth profile, which in turn can be used for further wear evaluation. The network is trained on a custom-built database containing depth profiles and reflection images of liner surfaces of large gas engines. The results of the proposed method are examined visually and quantified using several probabilistic distance metrics and by comparing roughness indicators between ground truth and model predictions. The observed success of the proposed method suggests great potential for quantitative wear assessment directly on site, both on engines and during service.
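The bearing load (Abbott-Firestone) curve can be derived from a measured depth profile by ranking heights from the highest peak to the deepest valley and recording, for each height, the share of the surface at or above it. A minimal sketch, assuming a sampled height profile in which every sample represents an equal share of the surface; the function names are hypothetical, and ties between equal heights are not merged.

```python
from typing import List, Sequence, Tuple


def bearing_load_curve(heights: Sequence[float]) -> List[Tuple[float, float]]:
    """Return (material_ratio_percent, height) pairs of the Abbott-Firestone curve.

    Heights are sorted from highest peak to deepest valley; the k-th value
    (1-based) is reached or exceeded by k of n samples, i.e. ratio 100*k/n.
    """
    n = len(heights)
    if n == 0:
        raise ValueError("profile must contain at least one sample")
    ordered = sorted(heights, reverse=True)
    return [(100.0 * (k + 1) / n, h) for k, h in enumerate(ordered)]


def material_ratio(heights: Sequence[float], level: float) -> float:
    """Percentage of samples whose height is at or above the given level."""
    if len(heights) == 0:
        raise ValueError("profile must contain at least one sample")
    return 100.0 * sum(1 for h in heights if h >= level) / len(heights)


# Hypothetical depth profile (arbitrary units)
profile = [0.0, 2.0, 1.0, 3.0]
print(bearing_load_curve(profile))
# [(25.0, 3.0), (50.0, 2.0), (75.0, 1.0), (100.0, 0.0)]
print(material_ratio(profile, 1.5))  # 50.0
```

Roughness indicators derived from this curve can then be compared between a curve computed from the ground-truth depth profile and a curve predicted by a model.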
