Steffen Rühl

Meta Neural Ordinary Differential Equations For Adaptive Asynchronous Control

Jul 25, 2022

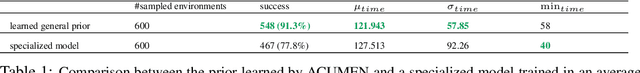

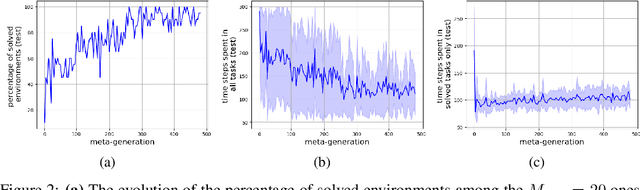

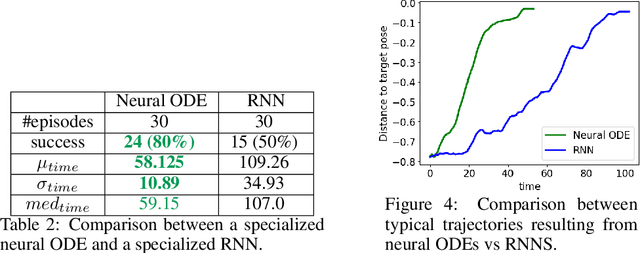

Abstract: Model-based reinforcement learning and control have demonstrated great potential in various sequential decision-making domains, including robotics. However, real-world robotic systems often present challenges that limit the applicability of these methods. In particular, we note two problems that occur jointly in many industrial systems: 1) irregular or asynchronous observations and actions, and 2) dramatic changes in environment dynamics from one episode to another (e.g., varying payload inertial properties). We propose a general framework that overcomes these difficulties by meta-learning adaptive dynamics models for continuous-time prediction and control. We evaluate the proposed approach on a simulated industrial robot. Evaluations on real robotic systems will be added in future iterations of this pre-print.
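To make the idea of continuous-time prediction under irregular sampling concrete, the following is a minimal sketch and not the authors' implementation. It assumes a dynamics model of the form ds/dt = f(s, a, c), where `c` is a hypothetical per-episode context vector standing in for whatever adaptation mechanism the framework learns (for example, an embedding of payload properties). The small MLP, the names `dynamics`, `rk4_step`, and `rollout`, the zero-order hold on actions, and the `max_dt` sub-stepping are all illustrative assumptions; the meta-learning step itself is not shown.

```python
import numpy as np


def dynamics(state, action, context, params):
    """Hypothetical vector field ds/dt = f(s, a, c); a one-hidden-layer MLP stands in for the learned model."""
    W1, b1, W2, b2 = params
    x = np.concatenate([state, action, context])
    hidden = np.tanh(W1 @ x + b1)
    return W2 @ hidden + b2


def rk4_step(state, action, context, params, dt):
    """One classical Runge-Kutta (RK4) step of size dt with the action held constant."""
    k1 = dynamics(state, action, context, params)
    k2 = dynamics(state + 0.5 * dt * k1, action, context, params)
    k3 = dynamics(state + 0.5 * dt * k2, action, context, params)
    k4 = dynamics(state + dt * k3, action, context, params)
    return state + (dt / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)


def rollout(state0, times, actions, context, params, max_dt=0.01):
    """Predict the state at each (possibly irregular) timestamp in `times`.

    actions[i] is applied on the interval [times[i], times[i+1]) (zero-order hold,
    an assumption of this sketch). Long gaps are split into sub-steps no larger
    than max_dt so that accuracy does not depend on the sampling pattern.
    """
    state = np.asarray(state0, dtype=float)
    states = [state]
    for i in range(len(times) - 1):
        gap = times[i + 1] - times[i]
        n_sub = max(1, int(np.ceil(gap / max_dt)))
        dt = gap / n_sub
        for _ in range(n_sub):
            state = rk4_step(state, actions[i], context, params, dt)
        states.append(state)
    return np.stack(states)
```

Because the model is integrated over the actual elapsed time between timestamps, the same parameters can serve observations that arrive at uneven intervals. Swapping the context vector `c` between episodes is one simple way to picture how a single model could account for changed dynamics.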
