Stefano Paladino

Unimodal Thompson Sampling for Graph-Structured Arms

Nov 22, 2016

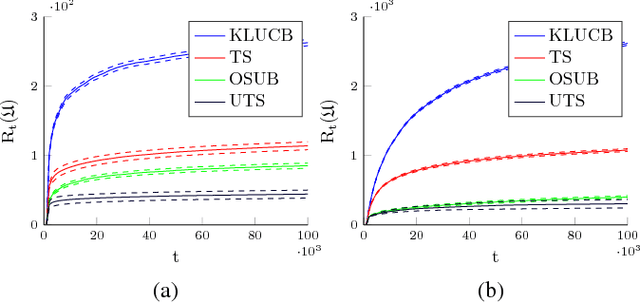

Abstract:We study, to the best of our knowledge, the first Bayesian algorithm for unimodal Multi-Armed Bandit (MAB) problems with graph structure. In this setting, each arm corresponds to a node of a graph and each edge provides a relationship, unknown to the learner, between two nodes in terms of expected reward. Furthermore, for any node of the graph there is a path leading to the unique node providing the maximum expected reward, along which the expected reward is monotonically increasing. Previous results on this setting describe the behavior of frequentist MAB algorithms. In our paper, we design a Thompson Sampling-based algorithm whose asymptotic pseudo-regret matches the lower bound for the considered setting. We show that -as it happens in a wide number of scenarios- Bayesian MAB algorithms dramatically outperform frequentist ones. In particular, we provide a thorough experimental evaluation of the performance of our and state-of-the-art algorithms as the properties of the graph vary.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge