ShuTao Xia

Energy Aligning for Biased Models

Jun 07, 2021

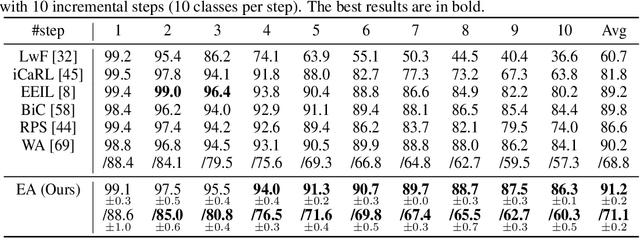

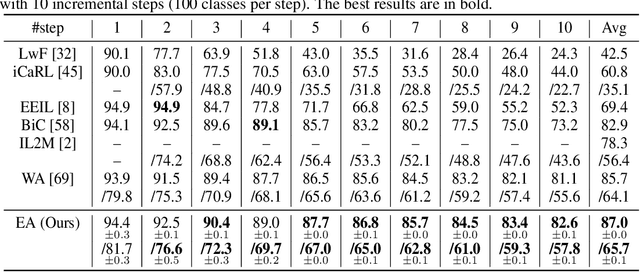

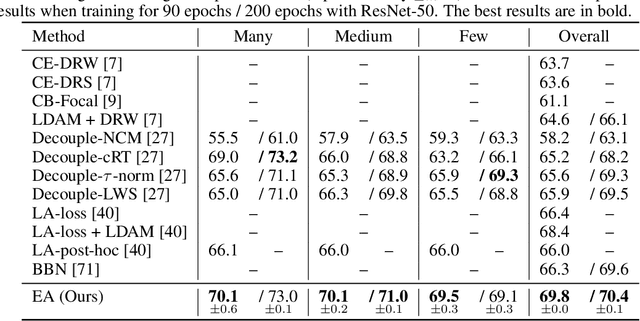

Abstract: Training on class-imbalanced data usually results in biased models that tend to assign samples to the majority classes, a common and notorious problem. From the perspective of energy-based models, we show theoretically that the free energies of categories are aligned with the label distribution, so the energies of different classes are expected to be close to each other when aiming for "balanced" performance. However, we discover a severe energy-bias phenomenon in models trained on class-imbalanced datasets. To eliminate the bias, we propose a simple and effective method named Energy Aligning, which merely adds calculated shift scalars to the output logits during inference and does not require (i) modifying the network architecture, (ii) intervening in the standard learning paradigm, or (iii) performing two-stage training. The proposed algorithm is evaluated on two class-imbalance-related tasks under various settings: class incremental learning and long-tailed recognition. Experimental results show that Energy Aligning effectively alleviates the class imbalance issue and outperforms state-of-the-art methods on several benchmarks.
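The abstract does not spell out how the shift scalars are calculated, so the following is only a minimal sketch of the general idea of adding per-class scalars to the logits at inference time. It assumes, purely for illustration, that the energy of class c is the negative logit for c averaged over held-out samples labeled c, and that each class is shifted toward the mean of these energies. The function names `class_energies`, `shift_scalars`, and `aligned_predict` are hypothetical and not taken from the paper.

```python
import numpy as np


def class_energies(logits, labels, num_classes):
    """Mean energy per class, assuming E_c = -f_c(x) averaged over samples labeled c.

    Assumption for illustration only; every class needs at least one sample.
    """
    return np.array(
        [-logits[labels == c, c].mean() for c in range(num_classes)]
    )


def shift_scalars(logits, labels, num_classes):
    """Per-class scalars that move every class energy to the common mean.

    Adding shift[c] to logit c lowers that class's energy by shift[c],
    so shift[c] = E_c - mean(E) equalizes the per-class mean energies.
    """
    energies = class_energies(logits, labels, num_classes)
    return energies - energies.mean()


def aligned_predict(logits, shifts):
    """Inference-time correction: add the shifts to the logits, then take argmax."""
    return np.argmax(logits + shifts, axis=-1)
```

In this sketch the network weights and the training procedure are untouched: the shifts are estimated once from logits and labels, then broadcast over the class axis whenever predictions are made.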
