Shengyu He

PhoneBit: Efficient GPU-Accelerated Binary Neural Network Inference Engine for Mobile Phones

Dec 05, 2019

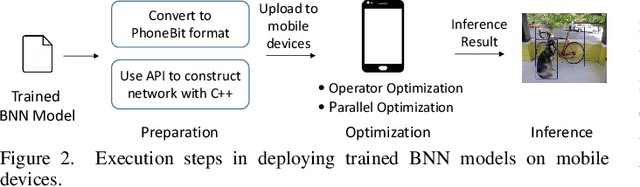

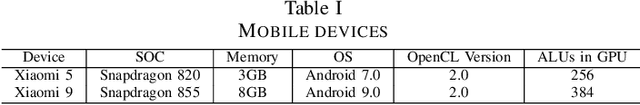

Abstract: In recent years, deep neural networks (DNNs) have achieved great success in computer vision and other fields. However, their high computational complexity, combined with the performance and power constraints of mobile devices, still makes DNNs challenging to deploy on such devices. Binary neural networks (BNNs) have been demonstrated as a promising way to address this challenge by replacing most arithmetic operations with bit-wise operations. Existing GPU-accelerated implementations of BNNs are tailored only for desktop platforms. Because of architectural differences, simply porting such implementations to mobile devices yields suboptimal performance or is impossible in some cases. In this paper, we propose PhoneBit, a GPU-accelerated BNN inference engine for Android-based mobile devices that fully exploits the computing power of BNNs on mobile GPUs. PhoneBit provides a set of operator-level optimizations for efficient binary convolution, including a locality-friendly data layout, bit packing with vectorization, and layer integration. We also provide a detailed implementation and parallelization optimization for PhoneBit to make the best use of the memory bandwidth and computing power of mobile GPUs. We evaluate PhoneBit with binarized versions of AlexNet, YOLOv2 Tiny, and VGG16. Our experimental results show that PhoneBit achieves significant speedup and energy efficiency compared with state-of-the-art frameworks for mobile devices.
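The idea of replacing arithmetic with bit-wise operations can be illustrated with a minimal Python sketch. It is not PhoneBit's GPU kernel; it only shows the commonly used XNOR-and-popcount formulation of a dot product between two vectors whose entries are +1 or -1. The function names `pack_bits` and `binary_dot` are hypothetical and chosen for illustration, and the encoding (bit set to 1 for +1, 0 for -1) is an assumption.

```python
def pack_bits(values):
    """Pack a sequence of +1/-1 values into one integer.

    Assumed encoding: bit i is 1 when values[i] == +1, and 0 when it is -1.
    """
    word = 0
    for i, v in enumerate(values):
        if v == 1:
            word |= 1 << i
    return word


def binary_dot(a_word, b_word, n):
    """Dot product of two packed +1/-1 vectors of length n.

    XNOR marks the positions where the two vectors agree. Each agreement
    contributes +1 and each disagreement -1, so the result is
    matches - (n - matches) = 2 * matches - n.
    """
    mask = (1 << n) - 1                # keep only the n valid bits
    xnor = ~(a_word ^ b_word) & mask   # 1 where the bits are equal
    matches = bin(xnor).count("1")     # popcount
    return 2 * matches - n


a = [1, -1, 1, 1]
b = [1, 1, -1, 1]
result = binary_dot(pack_bits(a), pack_bits(b), len(a))
reference = sum(x * y for x, y in zip(a, b))  # ordinary arithmetic dot product
```

Here `result` and `reference` agree, which is the point of the technique: one XOR, one NOT, and one popcount stand in for `n` multiply-accumulate operations. A real engine would apply the same identity to fixed-width machine words inside convolution kernels rather than to Python integers.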
