Sergio L. Toral Marín

Beyond Task Performance: A Metric-Based Analysis of Sequential Cooperation in Heterogeneous Multi-Agent Destructive Foraging

Feb 11, 2026Abstract:This work addresses the problem of analyzing cooperation in heterogeneous multi-agent systems which operate under partial observability and temporal role dependency, framed within a destructive multi-agent foraging setting. Unlike most previous studies, which focus primarily on algorithmic performance with respect to task completion, this article proposes a systematic set of general-purpose cooperation metrics aimed at characterizing not only efficiency, but also coordination and dependency between teams and agents, fairness, and sensitivity. These metrics are designed to be transferable to different multi-agent sequential domains similar to foraging. The proposed suite of metrics is structured into three main categories that jointly provide a multilevel characterization of cooperation: primary metrics, inter-team metrics, and intra-team metrics. They have been validated in a realistic destructive foraging scenario inspired by dynamic aquatic surface cleaning using heterogeneous autonomous vehicles. It involves two specialized teams with sequential dependencies: one focused on the search of resources, and another on their destruction. Several representative approaches have been evaluated, covering both learning-based algorithms and classical heuristic paradigms.

A Unified Experimental Architecture for Informative Path Planning: from Simulation to Deployment with GuadalPlanner

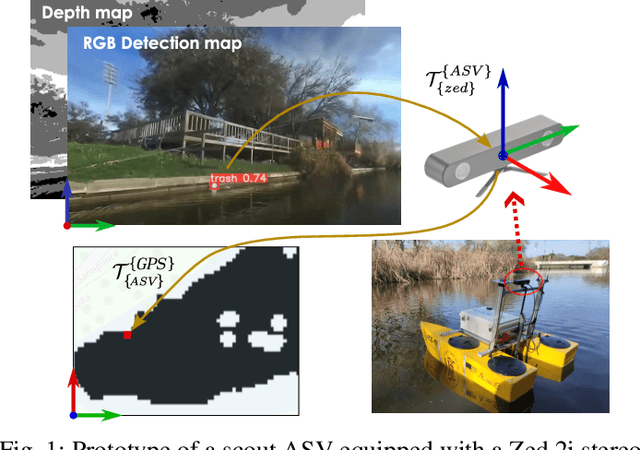

Feb 11, 2026Abstract:The evaluation of informative path planning algorithms for autonomous vehicles is often hindered by fragmented execution pipelines and limited transferability between simulation and real-world deployment. This paper introduces a unified architecture that decouples high-level decision-making from vehicle-specific control, enabling algorithms to be evaluated consistently across different abstraction levels without modification. The proposed architecture is realized through GuadalPlanner, which defines standardized interfaces between planning, sensing, and vehicle execution. It is an open and extensible research tool that supports discrete graph-based environments and interchangeable planning strategies, and is built upon widely adopted robotics technologies, including ROS2, MAVLink, and MQTT. Its design allows the same algorithmic logic to be deployed in fully simulated environments, software-in-the-loop configurations, and physical autonomous vehicles using an identical execution pipeline. The approach is validated through a set of experiments, including real-world deployment on an autonomous surface vehicle performing water quality monitoring with real-time sensor feedback.

Optimizing Plastic Waste Collection in Water Bodies Using Heterogeneous Autonomous Surface Vehicles with Deep Reinforcement Learning

Dec 03, 2024

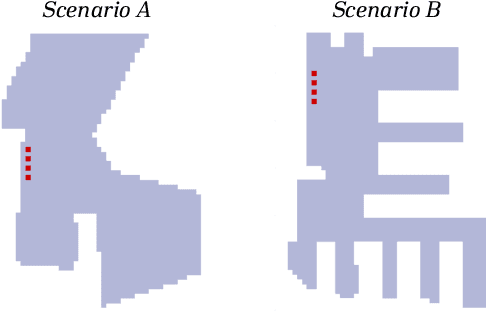

Abstract:This paper presents a model-free deep reinforcement learning framework for informative path planning with heterogeneous fleets of autonomous surface vehicles to locate and collect plastic waste. The system employs two teams of vehicles: scouts and cleaners. Coordination between these teams is achieved through a deep reinforcement approach, allowing agents to learn strategies to maximize cleaning efficiency. The primary objective is for the scout team to provide an up-to-date contamination model, while the cleaner team collects as much waste as possible following this model. This strategy leads to heterogeneous teams that optimize fleet efficiency through inter-team cooperation supported by a tailored reward function. Different trainings of the proposed algorithm are compared with other state-of-the-art heuristics in two distinct scenarios, one with high convexity and another with narrow corridors and challenging access. According to the obtained results, it is demonstrated that deep reinforcement learning based algorithms outperform other benchmark heuristics, exhibiting superior adaptability. In addition, training with greedy actions further enhances performance, particularly in scenarios with intricate layouts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge