Sen Cheng

A model of semantic completion in generative episodic memory

Nov 26, 2021

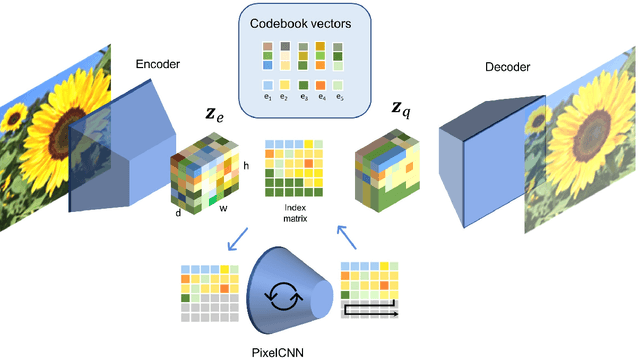

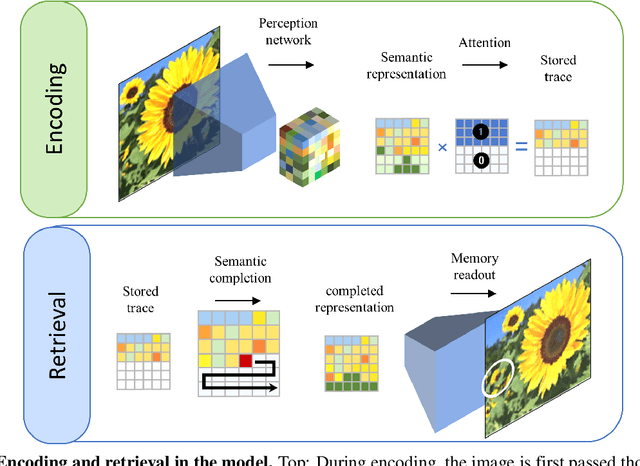

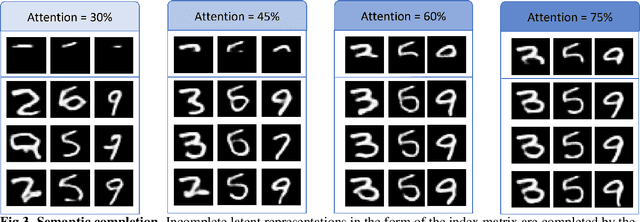

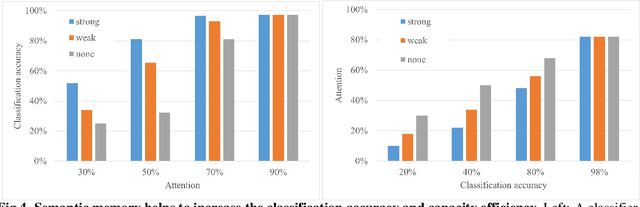

Abstract: Many studies have suggested that episodic memory is a generative process, but most computational models adopt a storage view. In this work, we propose a computational model for generative episodic memory. It is based on the central hypothesis that the hippocampus stores and retrieves selected aspects of an episode as a memory trace, which is necessarily incomplete. At recall, the neocortex fills in the missing information based on general semantic information, in a process we call semantic completion. As episodes we use images of digits (MNIST) augmented by different backgrounds representing context. Our model is based on a VQ-VAE, which generates a compressed latent representation in the form of an index matrix that still has some spatial resolution. We assume that attention selects some parts of the index matrix while others are discarded; the selected parts represent the gist of the episode and are stored as a memory trace. At recall, the missing parts are filled in by a PixelCNN, modeling semantic completion, and the completed index matrix is then decoded into a full image by the VQ-VAE. The model is able to complete missing parts of a memory trace in a semantically plausible way, up to the point where it can generate plausible images from scratch. Due to the combinatorics in the index matrix, the model generalizes well to images it was not trained on. Compression as well as semantic completion contribute to a strong reduction in memory requirements and to robustness to noise. Finally, we also model an episodic memory experiment and can reproduce that semantically congruent contexts are always recalled better than incongruent ones, that high attention levels improve memory accuracy in both cases, and that contexts that are not remembered correctly are more often remembered semantically congruently than completely wrong.
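The store-then-complete pipeline described in this abstract can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the function names `store_trace`, `complete_trace`, and `toy_prior` are hypothetical, the index matrix is hand-written rather than produced by a VQ-VAE encoder, attention is modeled as keeping a random fraction of entries, and `toy_prior` is a trivial stand-in for the PixelCNN (it simply copies a neighboring code). It only shows the data flow, not the authors' implementation.

```python
import random

def store_trace(index_matrix, attention, rng):
    """Keep a random fraction `attention` of the entries; discarded ones become None."""
    rows, cols = len(index_matrix), len(index_matrix[0])
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    kept = set(rng.sample(cells, round(attention * len(cells))))
    return [[index_matrix[r][c] if (r, c) in kept else None for c in range(cols)]
            for r in range(rows)]

def toy_prior(left, above, rng, num_codes=8):
    """Stand-in for a learned autoregressive prior (NOT a PixelCNN):
    copy a neighboring code if one exists, otherwise draw a random code."""
    context = [v for v in (left, above) if v is not None]
    return rng.choice(context) if context else rng.randrange(num_codes)

def complete_trace(trace, prior, rng):
    """Fill missing entries in raster order, conditioning on the left and upper neighbors."""
    filled = [row[:] for row in trace]
    for r, row in enumerate(filled):
        for c, value in enumerate(row):
            if value is None:
                left = filled[r][c - 1] if c > 0 else None
                above = filled[r - 1][c] if r > 0 else None
                filled[r][c] = prior(left, above, rng)
    return filled

if __name__ == "__main__":
    rng = random.Random(0)
    # Hand-written 4x4 index matrix standing in for a VQ-VAE latent code.
    episode = [[1, 1, 2, 2], [1, 1, 2, 2], [3, 3, 4, 4], [3, 3, 4, 4]]
    trace = store_trace(episode, attention=0.5, rng=rng)
    for row in complete_trace(trace, toy_prior, rng):
        print(row)
```

With `attention=0.0` the trace is empty and `complete_trace` produces a full matrix from the prior alone, which loosely mirrors the "generate from scratch" limit mentioned in the abstract. In the described model, the completed matrix would then be passed to the VQ-VAE decoder.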

A Hippocampus Model for Online One-Shot Storage of Pattern Sequences

May 30, 2019

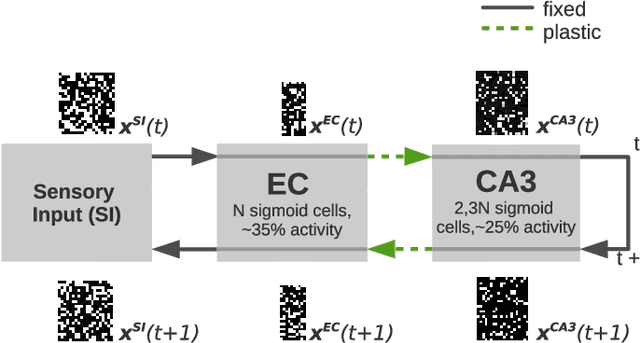

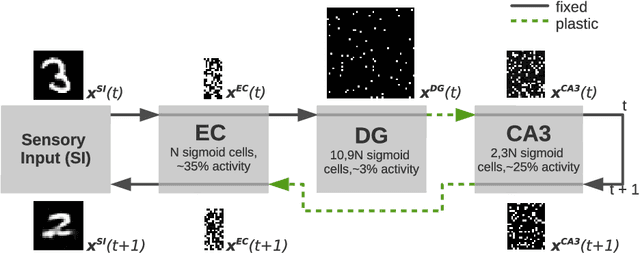

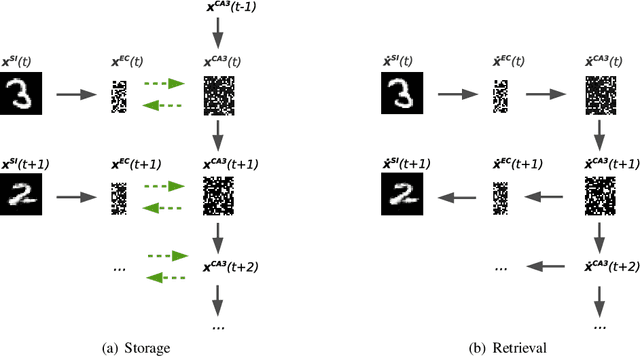

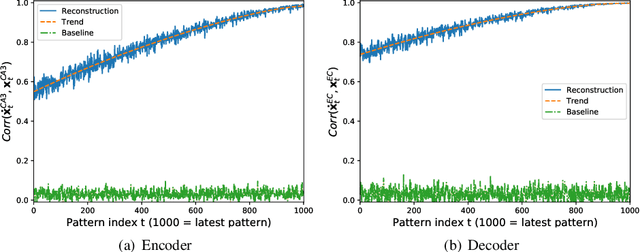

Abstract: We present a computational model based on the CRISP theory (Content Representation, Intrinsic Sequences, and Pattern completion) of the hippocampus that can continuously store pattern sequences online in a one-shot fashion. Rather than storing a sequence in CA3, CA3 provides a pre-trained sequence that is hetero-associated with the input sequence, which allows the system to perform one-shot learning. Plasticity on a short time scale therefore only happens in the incoming and outgoing connections of CA3. Stored sequences can later be recalled from a single cue pattern. We identify the pattern separation performed by subregion DG to be necessary for storing sequences that contain correlated patterns. A design principle of the model is that we use a single learning rule, named Hebbian-descent, to train all parts of the system. Hebbian-descent has an inherent forgetting mechanism that allows the system to continuously memorize new patterns while forgetting earlier ones. The model behaves plausibly when noisy and new patterns are presented and has a rather high capacity of about 40% in terms of the number of neurons in CA3. One notable property of our model is that it is capable of 'boot-strapping' (improving) itself without external input, in a process we refer to as 'dreaming'. Besides artificially generated input sequences, we also show that the model works with sequences of encoded handwritten digits or natural images. To our knowledge, this is the first model of the hippocampus that can store correlated pattern sequences online in a one-shot fashion without a consolidation process, such that they can be recalled immediately afterwards.
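The idea of hetero-associating an input sequence with a fixed intrinsic sequence can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the paper's model: the class `HeteroAssociativeSequenceMemory` and helper `random_patterns` are hypothetical names, the intrinsic "CA3" sequence is a fixed array of random ±1 patterns rather than a pre-trained recurrent network, a plain Hebbian outer-product update is swapped in for Hebbian-descent, and there is no DG pattern separation or forgetting.

```python
import numpy as np

def random_patterns(count, size, rng):
    """Draw `count` random patterns with entries in {-1, +1}."""
    return rng.choice([-1.0, 1.0], size=(count, size))

class HeteroAssociativeSequenceMemory:
    def __init__(self, n_input, n_ca3, seq_len, rng):
        # Fixed intrinsic sequence; only the in/out weights are plastic.
        self.intrinsic = random_patterns(seq_len, n_ca3, rng)
        self.w_in = np.zeros((n_ca3, n_input))   # input -> intrinsic state
        self.w_out = np.zeros((n_input, n_ca3))  # intrinsic state -> input

    def store(self, sequence):
        """One pass over the sequence: bind each input pattern to one intrinsic state.
        Uses a plain outer-product rule as a stand-in for Hebbian-descent."""
        for x, s in zip(sequence, self.intrinsic):
            self.w_in += np.outer(s, x) / x.size
            self.w_out += np.outer(x, s) / s.size

    def recall(self, cue, steps):
        """Map the cue to its best-matching intrinsic state, then replay and decode."""
        start = int(np.argmax(self.intrinsic @ (self.w_in @ cue)))
        states = self.intrinsic[start:start + steps]
        return np.sign(states @ self.w_out.T)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    memory = HeteroAssociativeSequenceMemory(n_input=100, n_ca3=256, seq_len=5, rng=rng)
    sequence = random_patterns(5, 100, rng)
    memory.store(sequence)
    recalled = memory.recall(sequence[0], steps=5)
    print("fraction of correctly recalled entries:", np.mean(recalled == sequence))
```

Because the intrinsic sequence is fixed in advance, storing a new input sequence only changes `w_in` and `w_out`, which mirrors the abstract's point that short-term plasticity is confined to the incoming and outgoing connections of CA3.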
