S. Karthikeyan

Weakly Supervised Localization using Deep Feature Maps

Mar 29, 2016

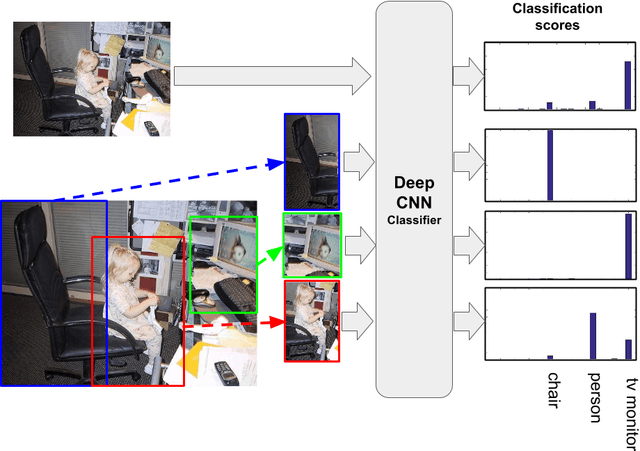

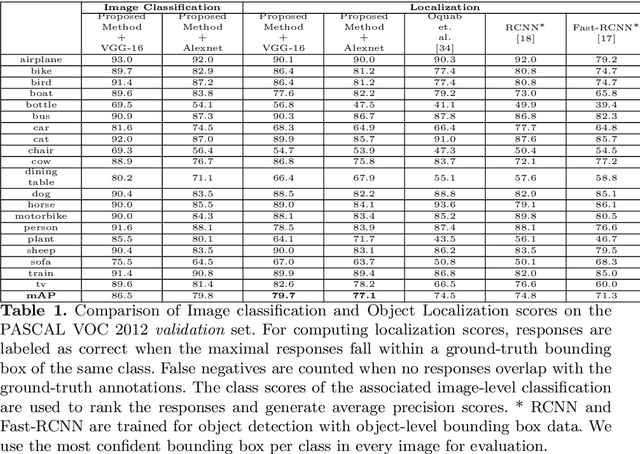

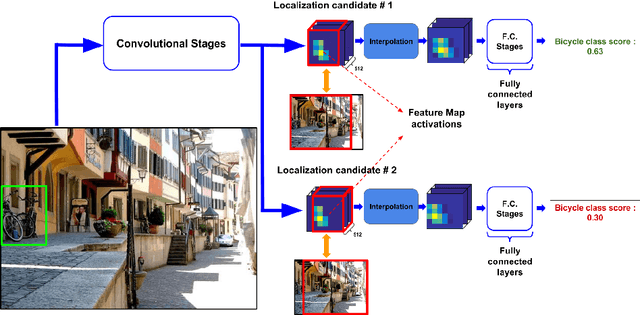

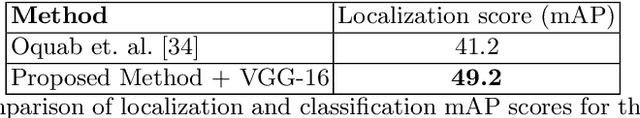

Abstract: Object localization is an important computer vision problem with a variety of applications. The lack of large-scale object-level annotations and the relative abundance of image-level labels make a compelling case for weak supervision in the object localization task. Deep Convolutional Neural Networks are a class of state-of-the-art methods for the related problem of object recognition. In this paper, we describe a novel object localization algorithm which uses classification networks trained on only image labels. This weakly supervised method leverages local spatial and semantic patterns captured in the convolutional layers of classification networks. We propose an efficient beam-search-based approach to detect and localize multiple objects in images. The proposed method significantly outperforms the state of the art on standard object localization datasets, with an 8-point increase in mAP scores.

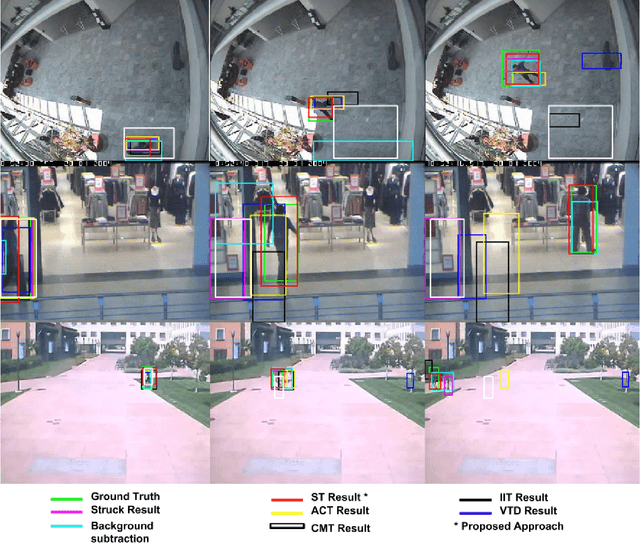

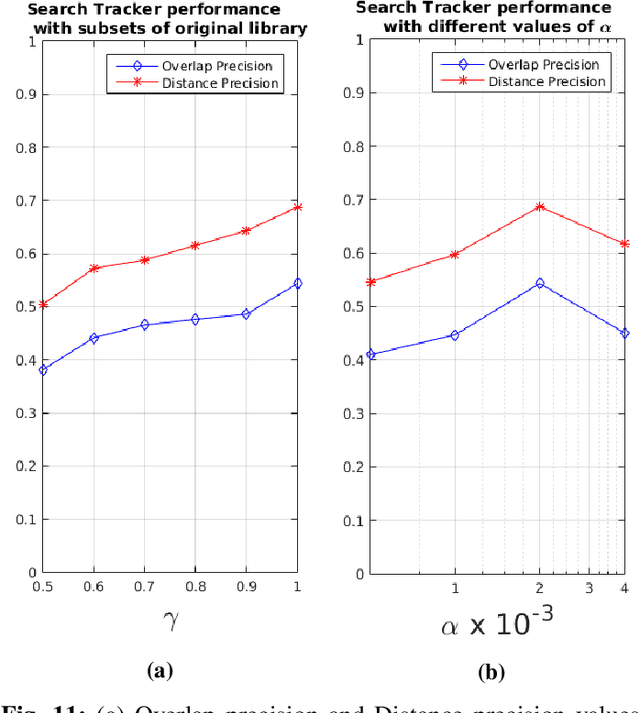

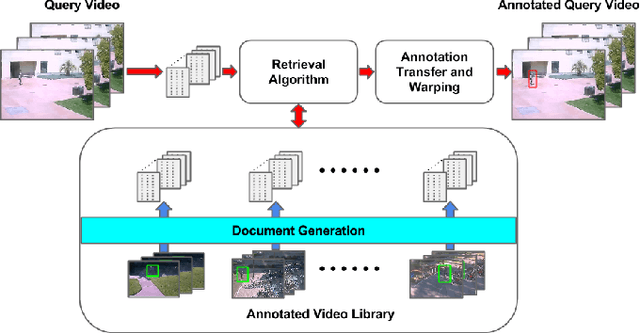

Search Tracker: Human-derived object tracking in-the-wild through large-scale search and retrieval

Feb 05, 2016

Abstract: Humans use context and scene knowledge to easily localize moving objects under complex illumination changes, scene clutter and occlusions. In this paper, we present a method that leverages human knowledge, in the form of annotated video libraries, in a novel search-and-retrieval-based setting to track objects in unseen video sequences. For every video sequence, a document that represents motion information is generated. Documents of the unseen video are queried against the library at multiple scales to find videos with similar motion characteristics. This provides a coarse localization of objects in the unseen video. We further adapt these retrieved object locations to the new video using an efficient warping scheme. The proposed method is validated on in-the-wild video surveillance datasets, where we outperform state-of-the-art appearance-based trackers. We also introduce a new challenging dataset with complex object appearance changes.
