Rutvik Reddy

Multi-level Attention network using text, audio and video for Depression Prediction

Sep 03, 2019

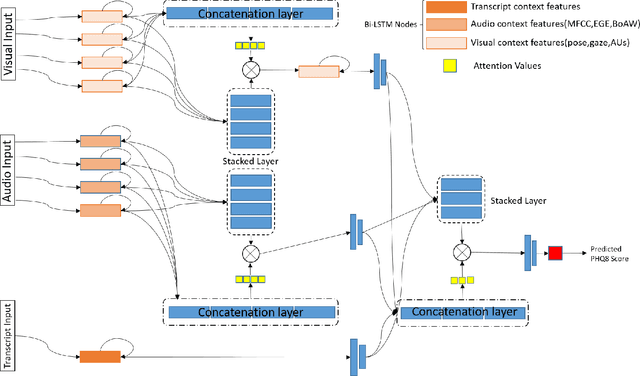

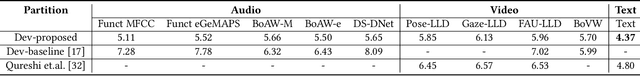

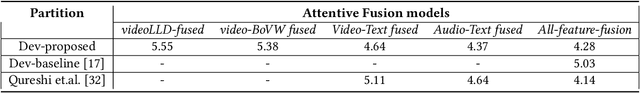

Abstract: Depression has been the leading cause of mental-health illness worldwide. Major depressive disorder (MDD) is a common mental health disorder that affects people both psychologically and physically and can lead to loss of life. Because diagnostic tests are lacking and detecting depression involves subjectivity, there is growing interest in using behavioural cues to automate depression diagnosis and stage prediction. The absence of labelled behavioural datasets for such problems and the wide variation possible in behaviour make the problem more challenging. This paper presents a novel multi-level attention-based network for multi-modal depression prediction that fuses features from the audio, video and text modalities while learning intra- and inter-modality relevance. The multi-level attention reinforces overall learning by selecting the most influential features within each modality for decision making. We perform exhaustive experimentation to create different regression models for the audio, video and text modalities. Several fusion models with different configurations are constructed to understand the impact of each feature and modality. We outperform the current baseline by 17.52% in terms of root mean squared error.
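To make the idea of attention at two levels more concrete, the following is a minimal sketch and not the authors' architecture. It assumes each modality supplies a small set of feature vectors already projected to a common size, applies softmax attention within each modality, then applies a second softmax attention across the three modality summaries before a linear regression head. The helper names (`softmax`, `attend`), the dimensions, and the random parameters are all hypothetical and stand in for learned weights.

```python
import numpy as np

def softmax(x):
    # Numerically stable softmax over a 1-D array.
    x = x - np.max(x)
    e = np.exp(x)
    return e / e.sum()

def attend(features, score_vector):
    # Score each row, normalise the scores, and return the weighted sum of rows.
    scores = features @ score_vector
    weights = softmax(scores)
    return weights @ features, weights

rng = np.random.default_rng(0)
dim = 8  # assumed common feature size

# Hypothetical inputs: each row is one feature group from that modality.
modalities = {
    "audio": rng.normal(size=(3, dim)),
    "video": rng.normal(size=(4, dim)),
    "text": rng.normal(size=(2, dim)),
}

# Level 1: attention within each modality (intra-modality relevance).
intra_weights = {}
summaries = []
for name, feats in modalities.items():
    score_vector = rng.normal(size=dim)  # stand-in for learned parameters
    summary, weights = attend(feats, score_vector)
    intra_weights[name] = weights
    summaries.append(summary)

# Level 2: attention across modality summaries (inter-modality relevance).
stacked = np.stack(summaries)  # shape (3, dim)
fused, inter_weights = attend(stacked, rng.normal(size=dim))

# Linear regression head producing a single severity score.
prediction = float(fused @ rng.normal(size=dim))

print("inter-modality weights:", dict(zip(modalities, inter_weights.round(3))))
print("prediction:", prediction)
```

In a trained model the score vectors and the regression head would be learned jointly, and the attention weights could be inspected to see which features and modalities the model relied on.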
