Rohit Takhar

New Epochs in AI Supervision: Design and Implementation of an Autonomous Radiology AI Monitoring System

Nov 24, 2023

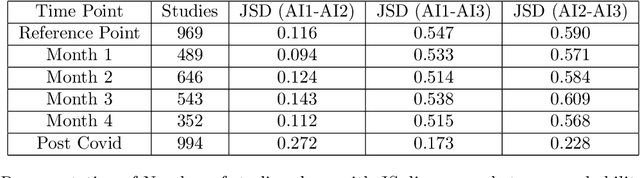

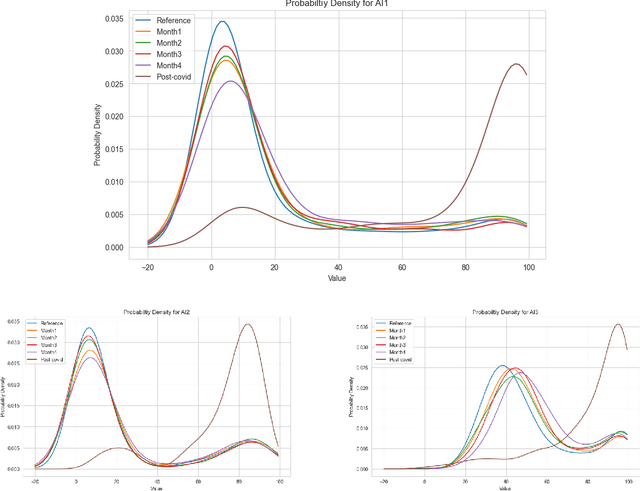

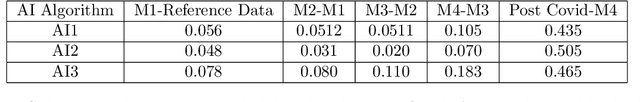

Abstract: With the increasingly widespread adoption of AI in healthcare, maintaining the accuracy and reliability of AI models in clinical practice has become crucial. In this context, we introduce novel methods for monitoring the performance of radiology AI classification models in practice, addressing the challenge of obtaining real-time ground truth for performance monitoring. We propose two metrics, predictive divergence and temporal stability, to be used for preemptive alerts of AI performance changes. Predictive divergence, measured using Kullback-Leibler and Jensen-Shannon divergences, evaluates model accuracy by comparing the model's predictions with those of two supplementary models. Temporal stability is assessed by comparing current predictions against historical moving averages, identifying potential model decay or data drift. This approach was retrospectively validated using chest X-ray data from a single-center imaging clinic, demonstrating its effectiveness in maintaining AI model reliability. By providing continuous, real-time insights into model performance, our system supports the safe and effective use of AI in clinical decision-making, paving the way for more robust AI integration in healthcare.
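The two metrics named in the abstract can be illustrated with a minimal Python sketch. This is not the authors' implementation: the abstract does not say how the prediction distributions are built or which window is used, so the function names, the `eps` smoothing term, and the default `window` are assumptions made for illustration only.

```python
import math

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) in nats for two discrete distributions of equal length.

    eps is an assumed smoothing term that avoids division by zero.
    """
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )

def js_divergence(p, q):
    """Jensen-Shannon divergence: symmetric, bounded above by ln(2)."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

def moving_average_deviation(history, current, window=7):
    """Absolute gap between the current value and the mean of the
    last `window` historical values (assumed window length)."""
    recent = history[-window:]
    baseline = sum(recent) / len(recent)
    return abs(current - baseline)

# Hypothetical example: class-probability outputs of the monitored model
# and of one supplementary model for the same study.
primary = [0.7, 0.2, 0.1]
supplementary = [0.6, 0.3, 0.1]
divergence = js_divergence(primary, supplementary)

# Hypothetical example: daily positive-prediction rates versus today's rate.
daily_rates = [0.10, 0.11, 0.09, 0.10, 0.12, 0.10, 0.11]
drift = moving_average_deviation(daily_rates, current=0.19)
```

In a monitoring setting, a large `divergence` or `drift` relative to a chosen threshold would trigger an alert; the thresholds themselves would have to be tuned on local data.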

Navigating Fairness in Radiology AI: Concepts, Consequences, and Crucial Considerations

Jun 02, 2023

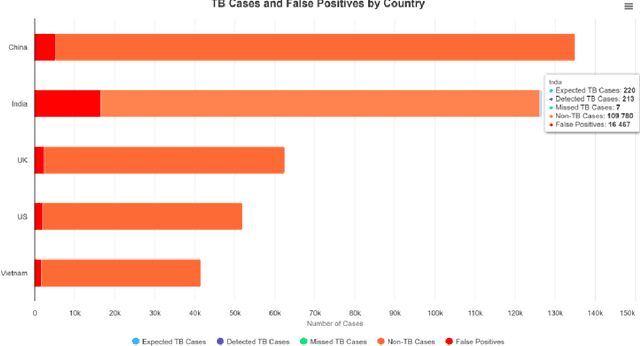

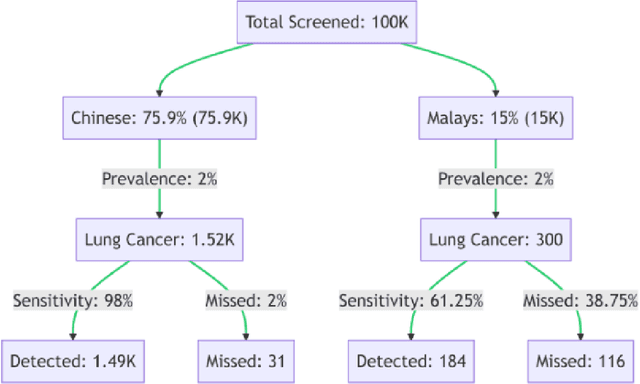

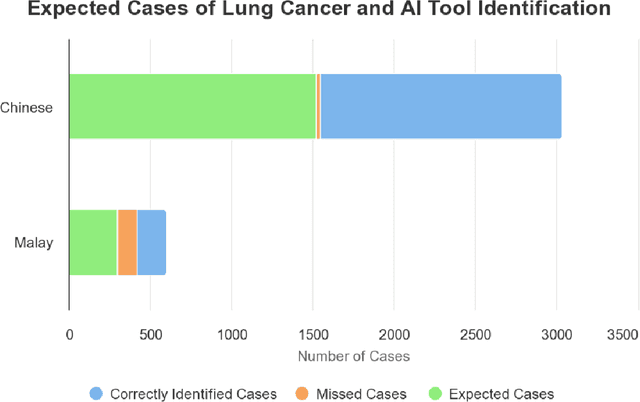

Abstract: Artificial Intelligence (AI) has significantly revolutionized radiology, promising improved patient outcomes and streamlined processes. However, it is critical to ensure the fairness of AI models so that hidden bias and disparities do not lead to unequal outcomes. This review discusses the concept of fairness in AI, focusing on bias auditing using the Aequitas toolkit, and its real-world implications in radiology, particularly in disease screening scenarios. Aequitas, an open-source bias audit toolkit, scrutinizes AI models' decisions, identifying hidden biases that may result in disparities across different demographic groups and imaging equipment brands. The toolkit operates on statistical theories, analyzing a large dataset to reveal a model's fairness. It can handle several variables simultaneously, which is especially valuable in a field as diverse as radiology. The review explicates essential fairness metrics: Equal and Proportional Parity, False Positive Rate Parity, False Discovery Rate Parity, False Negative Rate Parity, and False Omission Rate Parity. Each metric serves a unique purpose and offers different insights. We present hypothetical scenarios to demonstrate their relevance in disease screening settings and to show how disparities can lead to significant real-world impacts.
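The error-rate parity metrics listed in the abstract can be illustrated with a short Python sketch. It deliberately does not use the Aequitas API; it only computes the underlying rates from confusion-matrix counts and expresses each group's rate as a ratio to a reference group. The group names and counts are hypothetical, chosen only for illustration.

```python
def group_rates(tp, fp, tn, fn):
    """Error rates for one group from its confusion-matrix counts."""
    def ratio(num, den):
        return num / den if den else float("nan")
    return {
        "fpr": ratio(fp, fp + tn),  # false positive rate
        "fnr": ratio(fn, fn + tp),  # false negative rate
        "fdr": ratio(fp, fp + tp),  # false discovery rate
        "for": ratio(fn, fn + tn),  # false omission rate
    }

def disparities(groups, reference):
    """Each group's rate divided by the reference group's rate.

    A value of 1.0 means parity with the reference group on that metric.
    """
    ref = group_rates(**groups[reference])
    result = {}
    for name, counts in groups.items():
        rates = group_rates(**counts)
        result[name] = {
            metric: rates[metric] / ref[metric] if ref[metric] else float("nan")
            for metric in rates
        }
    return result

# Hypothetical screening results split by imaging equipment brand.
counts_by_brand = {
    "brand_a": {"tp": 80, "fp": 20, "tn": 880, "fn": 20},
    "brand_b": {"tp": 70, "fp": 60, "tn": 840, "fn": 30},
}
audit = disparities(counts_by_brand, reference="brand_a")
```

With these made-up counts, `brand_b` has three times the false positive rate of `brand_a`, which in a screening setting would mean more unnecessary follow-up examinations for patients scanned on that equipment. What ratio counts as an unacceptable disparity is a policy choice and is not fixed by the sketch.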
