Robert Podschwadt

SoK: Privacy-preserving Deep Learning with Homomorphic Encryption

Jan 01, 2022

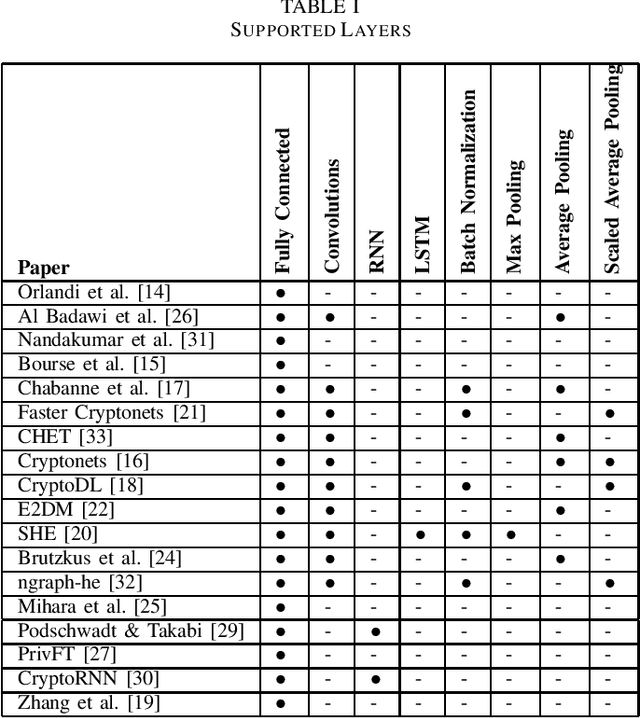

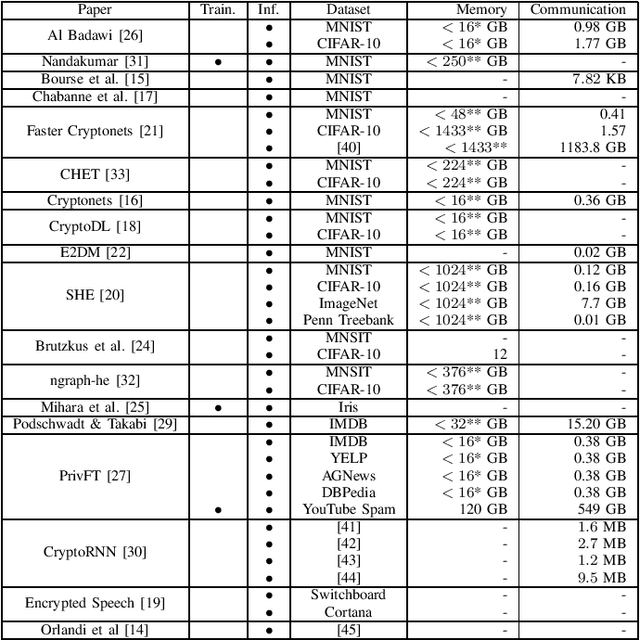

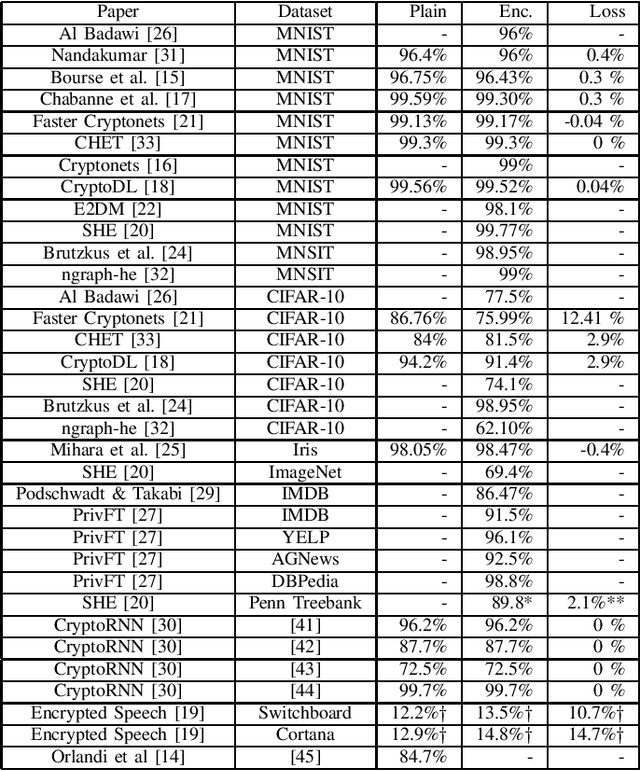

Abstract:Outsourced computation for neural networks allows users access to state of the art models without needing to invest in specialized hardware and know-how. The problem is that the users lose control over potentially privacy sensitive data. With homomorphic encryption (HE) computation can be performed on encrypted data without revealing its content. In this systematization of knowledge, we take an in-depth look at approaches that combine neural networks with HE for privacy preservation. We categorize the changes to neural network models and architectures to make them computable over HE and how these changes impact performance. We find numerous challenges to HE based privacy-preserving deep learning such as computational overhead, usability, and limitations posed by the encryption schemes.

Privacy preserving Neural Network Inference on Encrypted Data with GPUs

Nov 26, 2019

Abstract:Machine Learning as a Service (MLaaS) has become a growing trend in recent years and several such services are currently offered. MLaaS is essentially a set of services that provides machine learning tools and capabilities as part of cloud computing services. In these settings, the cloud has pre-trained models that are deployed and large computing capacity whereas the clients can use these models to make predictions without having to worry about maintaining the models and the service. However, the main concern with MLaaS is the privacy of the client's data. Although there have been several proposed approaches in the literature to run machine learning models on encrypted data, the performance is still far from being satisfactory for practical use. In this paper, we aim to accelerate the performance of running machine learning on encrypted data using combination of Fully Homomorphic Encryption (FHE), Convolutional Neural Networks (CNNs) and Graphics Processing Units (GPUs). We use a number of optimization techniques, and efficient GPU-based implementation to achieve high performance. We evaluate a CNN whose architecture is similar to AlexNet to classify homomorphically encrypted samples from the Cars Overhead With Context (COWC) dataset. To the best of our knowledge, it is the first time such a complex network and large dataset is evaluated on encrypted data. Our approach achieved reasonable classification accuracy of 95% for the COWC dataset. In terms of performance, our results show that we could achieve several thousands times speed up when we implement GPU-accelerated FHE operations on encrypted floating point numbers.

Effectiveness of Adversarial Examples and Defenses for Malware Classification

Sep 10, 2019

Abstract:Artificial neural networks have been successfully used for many different classification tasks including malware detection and distinguishing between malicious and non-malicious programs. Although artificial neural networks perform very well on these tasks, they are also vulnerable to adversarial examples. An adversarial example is a sample that has minor modifications made to it so that the neural network misclassifies it. Many techniques have been proposed, both for crafting adversarial examples and for hardening neural networks against them. Most previous work has been done in the image domain. Some of the attacks have been adopted to work in the malware domain which typically deals with binary feature vectors. In order to better understand the space of adversarial examples in malware classification, we study different approaches of crafting adversarial examples and defense techniques in the malware domain and compare their effectiveness on multiple datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge