Ravin Gunawardena

Out-of-Distribution Data: An Acquaintance of Adversarial Examples -- A Survey

Apr 08, 2024

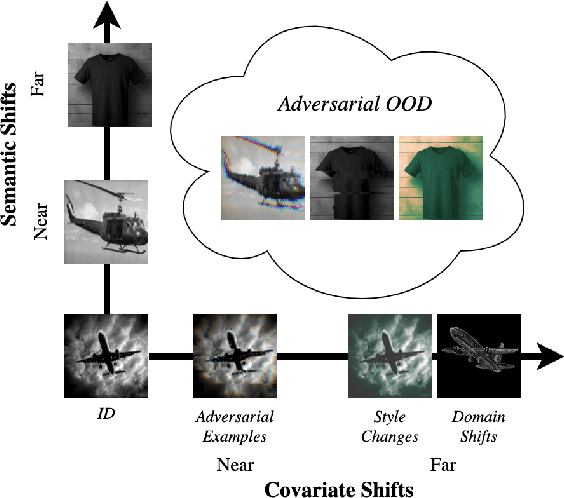

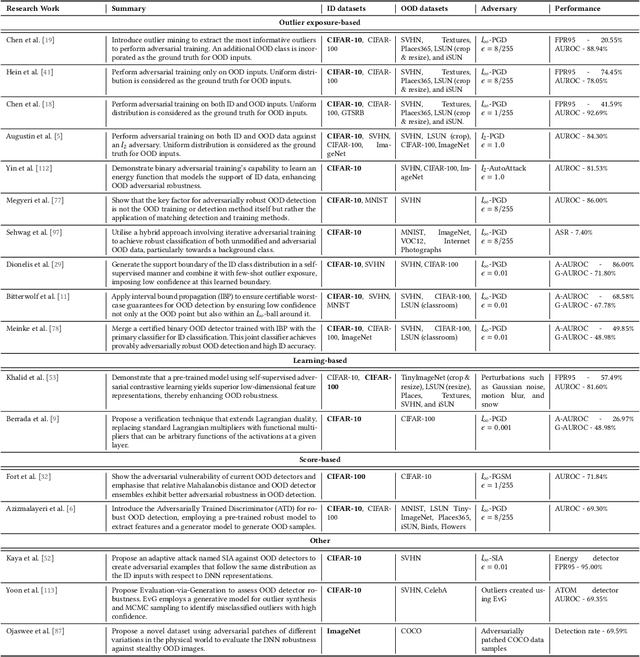

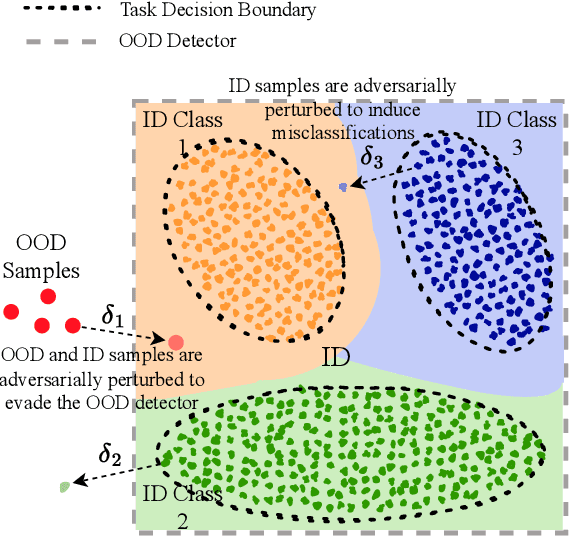

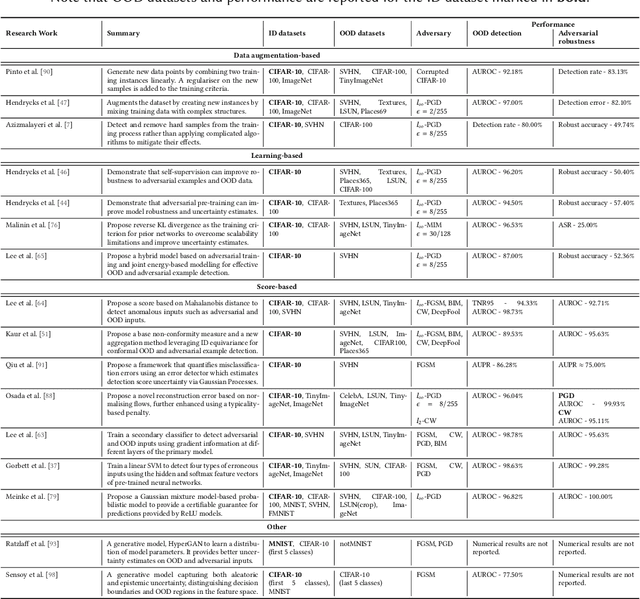

Abstract:Deep neural networks (DNNs) deployed in real-world applications can encounter out-of-distribution (OOD) data and adversarial examples. These represent distinct forms of distributional shifts that can significantly impact DNNs' reliability and robustness. Traditionally, research has addressed OOD detection and adversarial robustness as separate challenges. This survey focuses on the intersection of these two areas, examining how the research community has investigated them together. Consequently, we identify two key research directions: robust OOD detection and unified robustness. Robust OOD detection aims to differentiate between in-distribution (ID) data and OOD data, even when they are adversarially manipulated to deceive the OOD detector. Unified robustness seeks a single approach to make DNNs robust against both adversarial attacks and OOD inputs. Accordingly, first, we establish a taxonomy based on the concept of distributional shifts. This framework clarifies how robust OOD detection and unified robustness relate to other research areas addressing distributional shifts, such as OOD detection, open set recognition, and anomaly detection. Subsequently, we review existing work on robust OOD detection and unified robustness. Finally, we highlight the limitations of the existing work and propose promising research directions that explore adversarial and OOD inputs within a unified framework.

Data-Driven Simulation of Ride-Hailing Services using Imitation and Reinforcement Learning

Apr 06, 2021

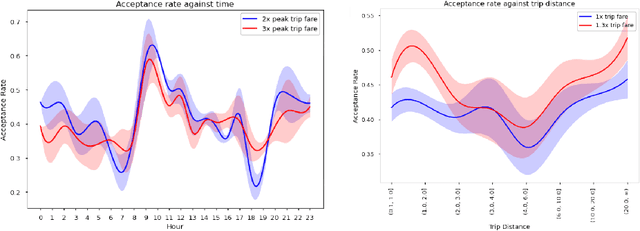

Abstract:The rapid growth of ride-hailing platforms has created a highly competitive market where businesses struggle to make profits, demanding the need for better operational strategies. However, real-world experiments are risky and expensive for these platforms as they deal with millions of users daily. Thus, a need arises for a simulated environment where they can predict users' reactions to changes in the platform-specific parameters such as trip fares and incentives. Building such a simulation is challenging, as these platforms exist within dynamic environments where thousands of users regularly interact with one another. This paper presents a framework to mimic and predict user, specifically driver, behaviors in ride-hailing services. We use a data-driven hybrid reinforcement learning and imitation learning approach for this. First, the agent utilizes behavioral cloning to mimic driver behavior using a real-world data set. Next, reinforcement learning is applied on top of the pre-trained agents in a simulated environment, to allow them to adapt to changes in the platform. Our framework provides an ideal playground for ride-hailing platforms to experiment with platform-specific parameters to predict drivers' behavioral patterns.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge