Ramesh Visvanathan

Adversarially Tuned Scene Generation

Jul 07, 2017

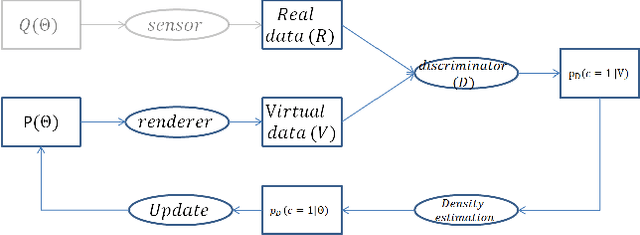

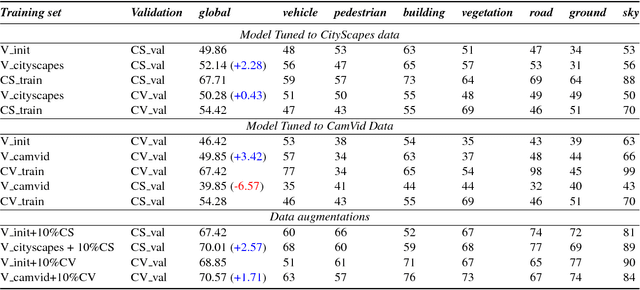

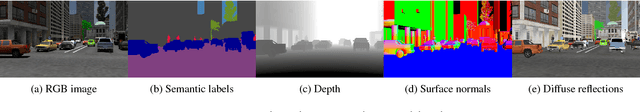

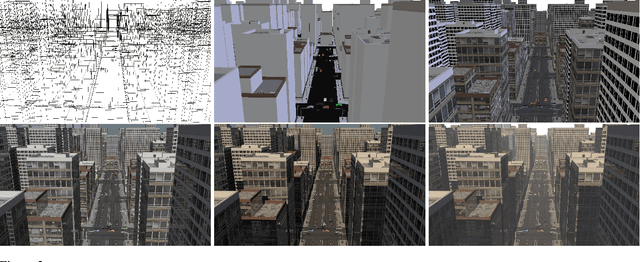

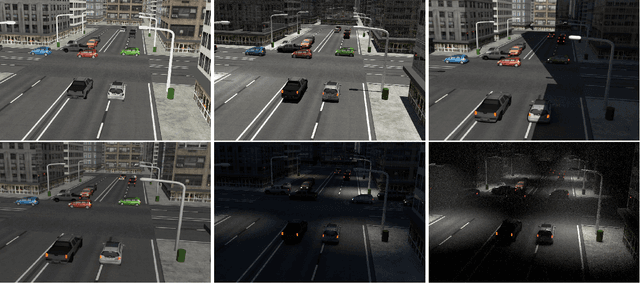

Abstract: The generalization performance of computer vision systems trained on computer graphics (CG) generated data remains limited by the 'domain shift' between virtual and real data. Although simulated data augmented with a few real-world samples has been shown to mitigate domain shift and improve the transferability of trained models, it is desirable to guide or bootstrap the virtual data generation with distributions learnt from the target real-world domain, especially in fields where annotating even a few real images is laborious (such as semantic labeling and intrinsic images). To address this problem in an unsupervised manner, our work combines recent advances in CG (which aims to generate stochastic scene layouts coupled with large collections of 3D object models) and generative adversarial training (which aims to train generative models by measuring the discrepancy between generated and real data in terms of their separability in the space of a deep discriminatively-trained classifier). Our method iteratively estimates the posterior density of the prior distributions of a generative graphical model within a rejection sampling framework. Initially, we assume uniform distributions as priors on the parameters of a scene described by a generative graphical model. As iterations proceed, the prior distributions are updated to distributions that are closer to the (unknown) distributions of the target data. We demonstrate the utility of adversarially tuned scene generation on two real-world benchmark datasets (CityScapes and CamVid) for traffic scene semantic labeling with a deep convolutional net (DeepLab). We realized performance improvements of 2.28 and 3.14 points (using the IoU metric) between the DeepLab models trained on simulated sets prepared from the scene generation models before and after tuning to CityScapes and CamVid respectively.
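The tuning loop described in this abstract (uniform priors, a discriminator that separates generated from real data, rejection sampling, prior update) can be illustrated with a toy sketch. This is not the authors' implementation: the single scalar scene parameter `theta`, the `render` function standing in for a graphics renderer, and the per-bin histogram discriminator standing in for a deep classifier are all assumptions made for illustration.

```python
import random

N_BINS = 10          # resolution of the histogram prior over theta in [0, 1)
N_FEATURE_BINS = 20  # resolution of the toy discriminator over x in [-0.2, 1.2)


def sample_theta(prior, rng):
    """Draw a scene parameter from the current histogram prior."""
    b = rng.choices(range(N_BINS), weights=prior)[0]
    return (b + rng.random()) / N_BINS


def render(theta, rng):
    """Hypothetical stand-in for a renderer: one noisy observed feature."""
    return theta + rng.gauss(0.0, 0.05)


def feature_bin(x):
    """Map an observed feature to a discriminator bin, clamped to range."""
    i = int((x + 0.2) / 1.4 * N_FEATURE_BINS)
    return min(max(i, 0), N_FEATURE_BINS - 1)


def fit_discriminator(real, fake):
    """Toy discriminator: per-bin estimate of P(real | x), add-one smoothed."""
    r = [1.0] * N_FEATURE_BINS
    f = [1.0] * N_FEATURE_BINS
    for x in real:
        r[feature_bin(x)] += 1
    for x in fake:
        f[feature_bin(x)] += 1
    return [ri / (ri + fi) for ri, fi in zip(r, f)]


def prior_mean(prior):
    """Mean of theta under a histogram prior, using bin centers."""
    return sum(p * (b + 0.5) / N_BINS for b, p in enumerate(prior))


def tune_prior(real, n_iters=5, n_samples=2000, seed=0):
    """Iteratively move a uniform prior toward the (unknown) target."""
    rng = random.Random(seed)
    prior = [1.0 / N_BINS] * N_BINS  # uniform initial prior
    for _ in range(n_iters):
        thetas = [sample_theta(prior, rng) for _ in range(n_samples)]
        fakes = [render(t, rng) for t in thetas]
        d = fit_discriminator(real, fakes)
        # Rejection step: keep theta with probability D(render(theta)).
        counts = [1.0] * N_BINS  # add-one smoothing keeps every bin reachable
        for t, x in zip(thetas, fakes):
            if rng.random() < d[feature_bin(x)]:
                counts[min(int(t * N_BINS), N_BINS - 1)] += 1
        total = sum(counts)
        prior = [c / total for c in counts]  # accepted samples form the new prior
    return prior


def make_target_data(n=2000, seed=1):
    """Synthetic 'real' data whose scene parameter clusters near 0.7."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        theta = min(max(rng.gauss(0.7, 0.08), 0.0), 0.999)
        data.append(render(theta, rng))
    return data
```

Calling `tune_prior(make_target_data())` returns a histogram whose mass has shifted from the uniform starting point toward the region around 0.7 where the synthetic target data lives; `prior_mean` gives a quick way to compare the prior before and after tuning.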

Model Validation for Vision Systems via Graphics Simulation

Dec 04, 2015

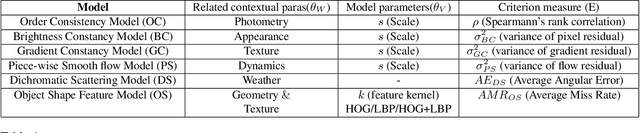

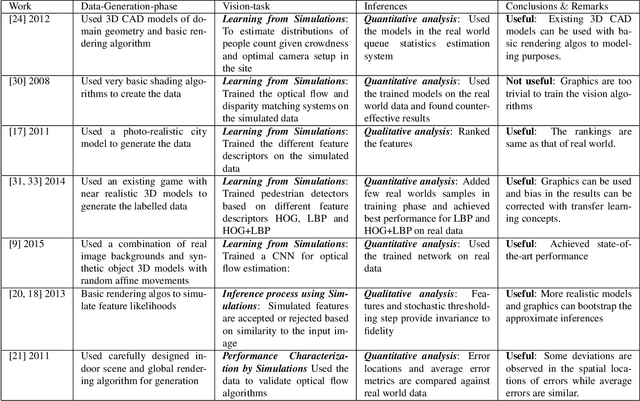

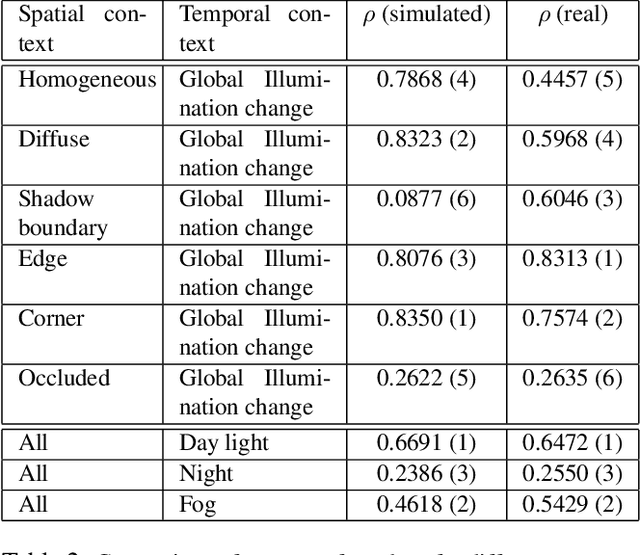

Abstract: Rapid advances in computation, combined with the latest advances in computer graphics simulations, have facilitated the development of vision systems and their training in virtual environments. One major stumbling block is certifying that the designs and tuned parameters of these systems will work in the real world. In this paper, we begin to explore the fundamental question: Which type of information transfer is more analogous to the real world? Inspired by the performance characterization methodology outlined in the 90's, we note that insights derived from simulations can be qualitative or quantitative depending on the degree of fidelity of the models used in simulations and the nature of the questions posed by the experimenter. We adapt the methodology in the context of current graphics simulation tools for modeling data generation processes and for systematic performance characterization and trade-off analysis for vision system design, leading to qualitative and quantitative insights. Concretely, we examine invariance assumptions used in vision algorithms for video surveillance settings as a case study and assess the degree to which those invariance assumptions deviate as a function of contextual variables, both in graphics simulations and in real data. As computer graphics rendering quality improves, we believe that teasing apart the degree to which model assumptions are valid via systematic graphics simulation can be a significant aid to more principled approaches to vision system design and performance modeling.

Simulations for Validation of Vision Systems

Dec 03, 2015

Abstract: As computer vision matures into a systems science and engineering discipline, there is a trend toward leveraging the latest advances in computer graphics simulations for performance evaluation, learning, and inference. However, there is an open question on the utility of graphics simulations for vision, with apparently contradictory views in the literature. In this paper, we place the results from the recent literature in the context of the performance characterization methodology outlined in the 90's and note that insights derived from simulations can be qualitative or quantitative depending on the degree of fidelity of the models used in simulation and the nature of the question posed by the experimenter. We describe a simulation platform that incorporates the latest graphics advances and use it for systematic performance characterization and trade-off analysis for vision system design. We verify the utility of the platform in a case study validating a generative-model-inspired vision hypothesis, the Rank-Order consistency model, in the contexts of global and local illumination changes, bad weather, and high-frequency noise. Our approach establishes the link between alternative viewpoints, involving models with physics-based semantics and models with signal and perturbation semantics, and confirms insights in the literature on robust change detection.
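The intuition behind a rank-order consistency check can be shown with a small sketch: a monotonic global illumination change preserves the ordering of pixel intensities within a patch, while a change in scene content generally does not. The function below and the example intensity values are illustrative assumptions, not the model or data used in the paper.

```python
from itertools import combinations


def order_consistency(patch_a, patch_b):
    """Fraction of pixel pairs whose intensity ordering agrees in both patches.

    Patches are flat sequences of intensities with the same length.
    Tied pairs are counted as disagreements in this simple version.
    """
    pairs = list(combinations(range(len(patch_a)), 2))
    agree = sum(
        1
        for i, j in pairs
        if (patch_a[i] - patch_a[j]) * (patch_b[i] - patch_b[j]) > 0
    )
    return agree / len(pairs)


# Hypothetical background patch and two later observations of the same location.
background = [10, 40, 25, 90, 60, 75]
brighter = [2 * v + 20 for v in background]  # global gain and offset change
new_object = [10, 40, 200, 90, 15, 75]       # scene content changed
```

Here `order_consistency(background, brighter)` is 1.0 because the gain-and-offset change is monotonic, whereas `order_consistency(background, new_object)` falls below 1.0, which is the kind of signal a change detector could threshold.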
