Rajiv Misra

HinTel-AlignBench: A Framework and Benchmark for Hindi-Telugu with English-Aligned Samples

Nov 19, 2025Abstract:With nearly 1.5 billion people and more than 120 major languages, India represents one of the most diverse regions in the world. As multilingual Vision-Language Models (VLMs) gain prominence, robust evaluation methodologies are essential to drive progress toward equitable AI for low-resource languages. Current multilingual VLM evaluations suffer from four major limitations: reliance on unverified auto-translations, narrow task/domain coverage, limited sample sizes, and lack of cultural and natively sourced Question-Answering (QA). To address these gaps, we present a scalable framework to evaluate VLMs in Indian languages and compare it with performance in English. Using the framework, we generate HinTel-AlignBench, a benchmark that draws from diverse sources in Hindi and Telugu with English-aligned samples. Our contributions are threefold: (1) a semi-automated dataset creation framework combining back-translation, filtering, and human verification; (2) the most comprehensive vision-language benchmark for Hindi and and Telugu, including adapted English datasets (VQAv2, RealWorldQA, CLEVR-Math) and native novel Indic datasets (JEE for STEM, VAANI for cultural grounding) with approximately 4,000 QA pairs per language; and (3) a detailed performance analysis of various State-of-the-Art (SOTA) open-weight and closed-source VLMs. We find a regression in performance for tasks in English versus in Indian languages for 4 out of 5 tasks across all the models, with an average regression of 8.3 points in Hindi and 5.5 points for Telugu. We categorize common failure modes to highlight concrete areas of improvement in multilingual multimodal understanding.

GASCADE: Grouped Summarization of Adverse Drug Event for Enhanced Cancer Pharmacovigilance

May 07, 2025Abstract:In the realm of cancer treatment, summarizing adverse drug events (ADEs) reported by patients using prescribed drugs is crucial for enhancing pharmacovigilance practices and improving drug-related decision-making. While the volume and complexity of pharmacovigilance data have increased, existing research in this field has predominantly focused on general diseases rather than specifically addressing cancer. This work introduces the task of grouped summarization of adverse drug events reported by multiple patients using the same drug for cancer treatment. To address the challenge of limited resources in cancer pharmacovigilance, we present the MultiLabeled Cancer Adverse Drug Reaction and Summarization (MCADRS) dataset. This dataset includes pharmacovigilance posts detailing patient concerns regarding drug efficacy and adverse effects, along with extracted labels for drug names, adverse drug events, severity, and adversity of reactions, as well as summaries of ADEs for each drug. Additionally, we propose the Grouping and Abstractive Summarization of Cancer Adverse Drug events (GASCADE) framework, a novel pipeline that combines the information extraction capabilities of Large Language Models (LLMs) with the summarization power of the encoder-decoder T5 model. Our work is the first to apply alignment techniques, including advanced algorithms like Direct Preference Optimization, to encoder-decoder models using synthetic datasets for summarization tasks. Through extensive experiments, we demonstrate the superior performance of GASCADE across various metrics, validated through both automated assessments and human evaluations. This multitasking approach enhances drug-related decision-making and fosters a deeper understanding of patient concerns, paving the way for advancements in personalized and responsive cancer care. The code and dataset used in this work are publicly available.

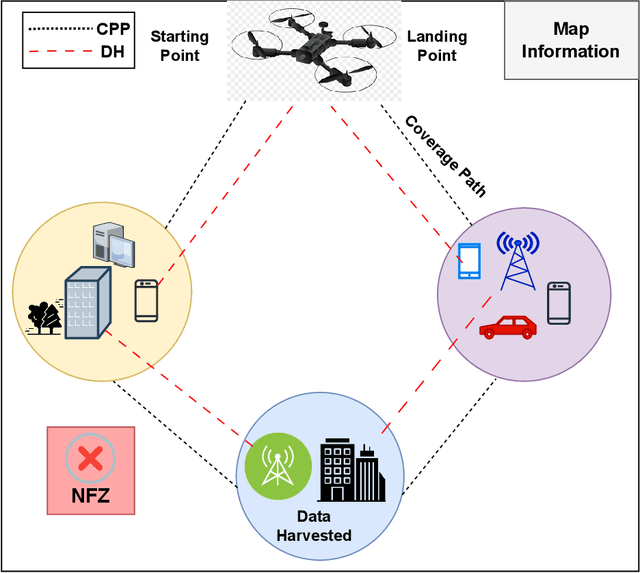

ARDDQN: Attention Recurrent Double Deep Q-Network for UAV Coverage Path Planning and Data Harvesting

May 17, 2024

Abstract:Unmanned Aerial Vehicles (UAVs) have gained popularity in data harvesting (DH) and coverage path planning (CPP) to survey a given area efficiently and collect data from aerial perspectives, while data harvesting aims to gather information from various Internet of Things (IoT) sensor devices, coverage path planning guarantees that every location within the designated area is visited with minimal redundancy and maximum efficiency. We propose the ARDDQN (Attention-based Recurrent Double Deep Q Network), which integrates double deep Q-networks (DDQN) with recurrent neural networks (RNNs) and an attention mechanism to generate path coverage choices that maximize data collection from IoT devices and to learn a control scheme for the UAV that generalizes energy restrictions. We employ a structured environment map comprising a compressed global environment map and a local map showing the UAV agent's locate efficiently scaling to large environments. We have compared Long short-term memory (LSTM), Bi-directional long short-term memory (Bi-LSTM), Gated recurrent unit (GRU) and Bidirectional gated recurrent unit (Bi-GRU) as recurrent neural networks (RNN) to the result without RNN We propose integrating the LSTM with the Attention mechanism to the existing DDQN model, which works best on evolution parameters, i.e., data collection, landing, and coverage ratios for the CPP and data harvesting scenarios.

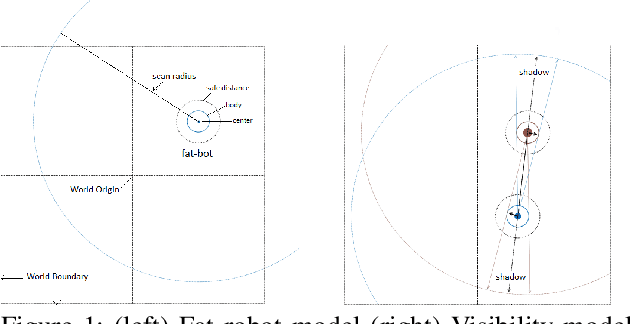

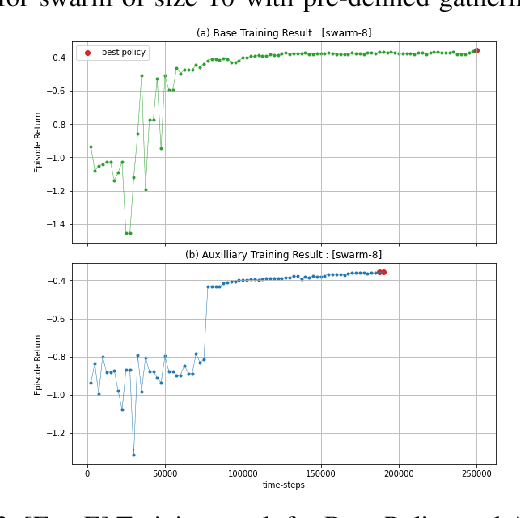

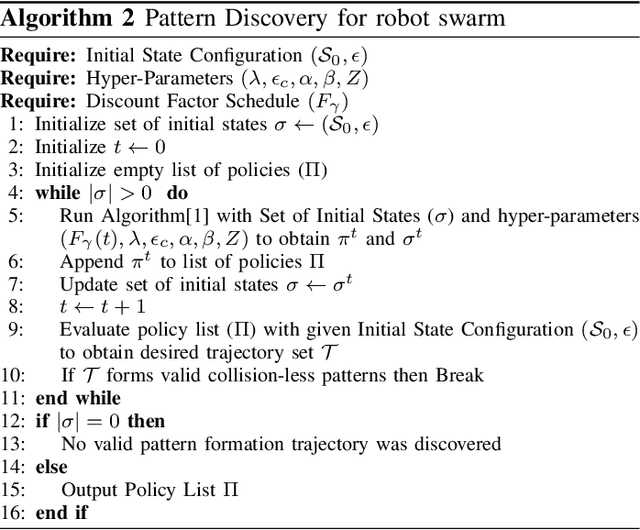

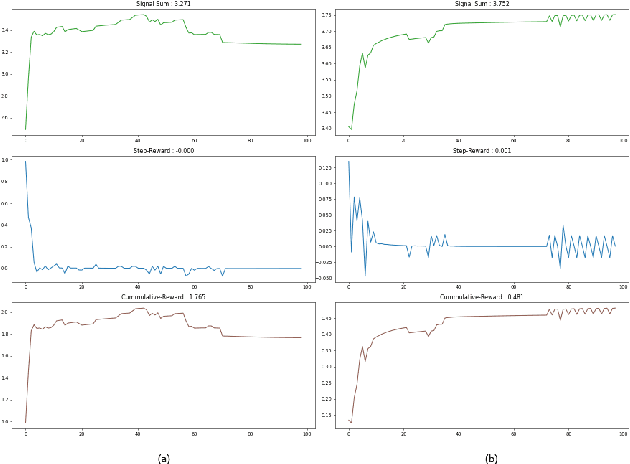

Collisionless Pattern Discovery in Robot Swarms Using Deep Reinforcement Learning

Sep 20, 2022

Abstract:We present a deep reinforcement learning-based framework for automatically discovering patterns available in any given initial configuration of fat robot swarms. In particular, we model the problem of collision-less gathering and mutual visibility in fat robot swarms and discover patterns for solving them using our framework. We show that by shaping reward signals based on certain constraints like mutual visibility and safe proximity, the robots can discover collision-less trajectories leading to well-formed gathering and visibility patterns.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge