Rafael Menelau Oliveira E Cruz

Dynamic Template Selection Through Change Detection for Adaptive Siamese Tracking

Mar 07, 2022

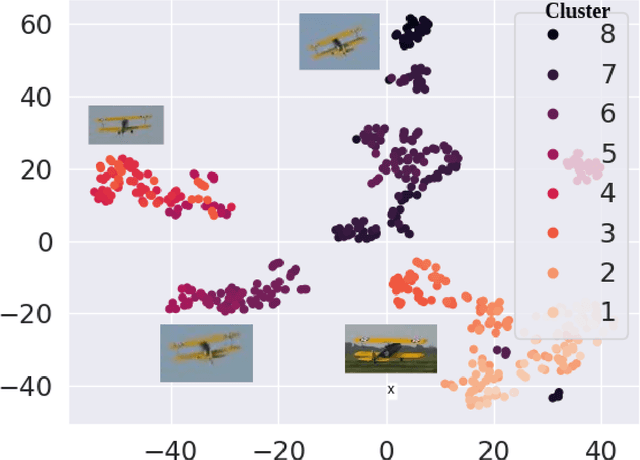

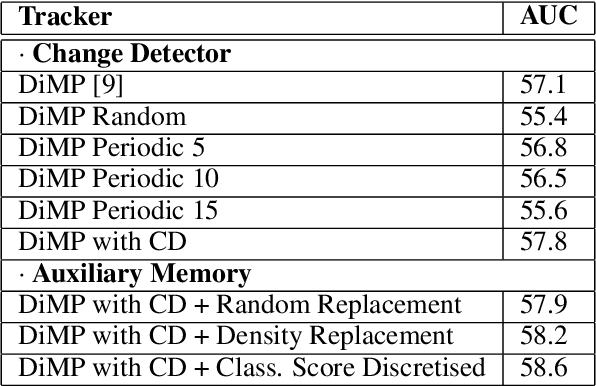

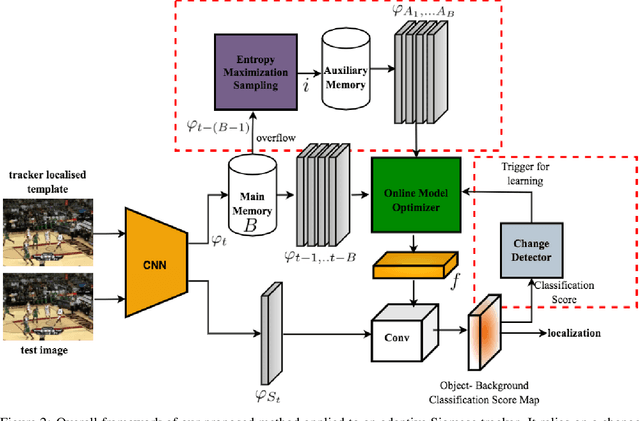

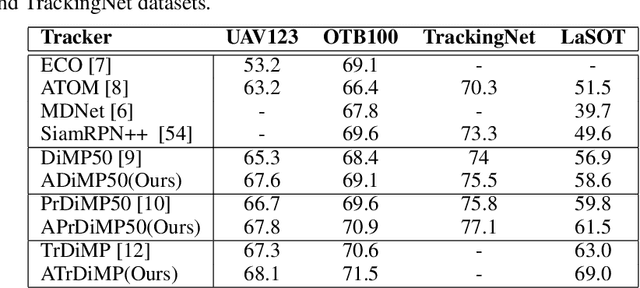

Abstract: Deep Siamese trackers have gained much attention in recent years because they can track visual objects at high speed. Additionally, adaptive tracking methods, in which target samples collected by the tracker are used for online learning, have achieved state-of-the-art accuracy. However, single object tracking (SOT) remains challenging in real-world applications because of changes and deformations in a target object's appearance. Learning from all the collected samples may lead to catastrophic forgetting and thereby corrupt the tracking model. In this paper, SOT is formulated as an online incremental learning problem, and a new method is proposed for dynamic sample selection and memory replay that prevents template corruption. In particular, we propose a change detection mechanism that detects gradual changes in object appearance and selects the corresponding samples for online adaptation. In addition, an entropy-based sample selection strategy is introduced to maintain a diversified auxiliary buffer for memory replay. Our method can be integrated into any object tracking algorithm that leverages online learning for model adaptation. Extensive experiments on the OTB-100, LaSOT, UAV123, and TrackingNet datasets highlight the cost-effectiveness of our method, along with the contribution of its key components. Results indicate that integrating the proposed method into state-of-the-art adaptive Siamese trackers increases the potential benefits of a template update strategy and significantly improves performance.
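The abstract does not specify how the entropy-based selection works. As a rough illustration only, the following Python sketch keeps a fixed-size replay buffer whose contents maximize the Shannon entropy of a discrete appearance label. The `mode` label (for example, a cluster id of a sample's features), the `EntropyReplayBuffer` class, and the greedy replace-one rule are hypothetical assumptions, not the authors' method.

```python
import math
from collections import Counter
from typing import Hashable, List, Optional, Tuple

_EPS = 1e-12  # ignore entropy gains that are only floating-point noise


def shannon_entropy(labels: List[Hashable]) -> float:
    """Shannon entropy (in nats) of the empirical distribution of labels."""
    if not labels:
        return 0.0
    n = len(labels)
    return -sum((c / n) * math.log(c / n) for c in Counter(labels).values())


class EntropyReplayBuffer:
    """Hypothetical fixed-capacity buffer that favors diverse samples.

    Each sample carries a discrete `mode` label (an assumption, e.g. an
    appearance cluster id). Diversity is the entropy of the mode histogram.
    """

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.items: List[Tuple[object, Hashable]] = []

    def modes(self) -> List[Hashable]:
        return [mode for _, mode in self.items]

    def add(self, sample: object, mode: Hashable) -> bool:
        """Insert a sample and return True if it was kept."""
        # Fill the buffer unconditionally until it reaches capacity.
        if len(self.items) < self.capacity:
            self.items.append((sample, mode))
            return True
        current = shannon_entropy(self.modes())
        best_gain, best_idx = _EPS, None  # type: float, Optional[int]
        # Greedily find the single replacement that raises entropy the most.
        for i in range(len(self.items)):
            candidate = self.modes()
            candidate[i] = mode
            gain = shannon_entropy(candidate) - current
            if gain > best_gain:
                best_gain, best_idx = gain, i
        if best_idx is None:
            return False  # the sample would not make the buffer more diverse
        self.items[best_idx] = (sample, mode)
        return True
```

A new sample is admitted only when swapping it in raises the buffer's entropy, so repeated views of an already well-represented appearance are rejected while rarer appearances are retained for replay.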

Generative Target Update for Adaptive Siamese Tracking

Feb 21, 2022

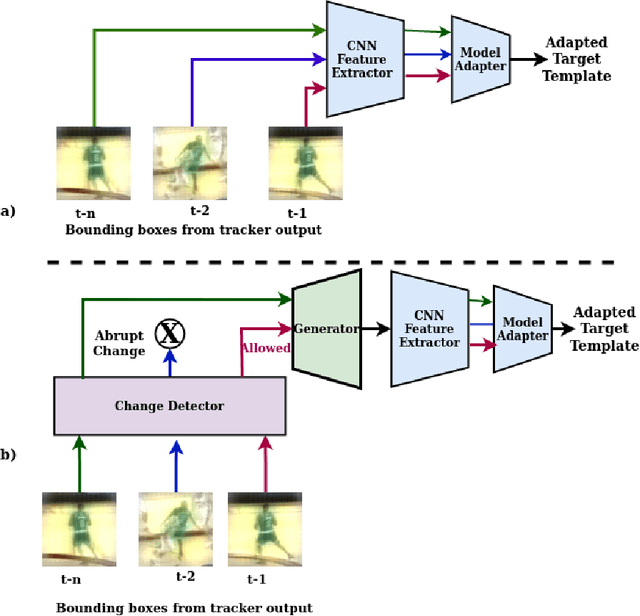

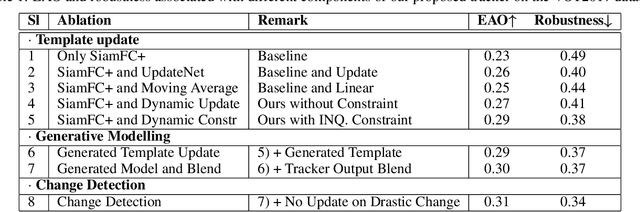

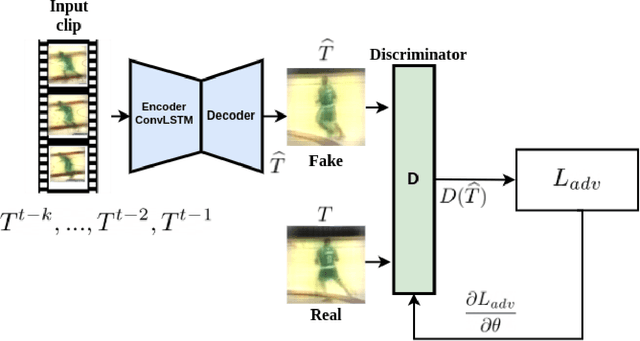

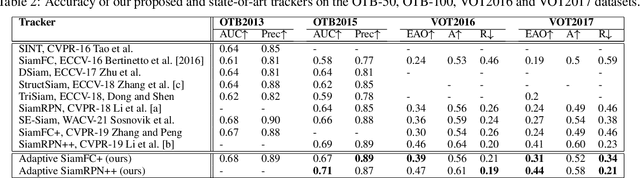

Abstract: Siamese trackers perform similarity matching with templates (i.e., target models) to recursively localize objects within a search region. Several strategies have been proposed in the literature to update a template based on the tracker output, typically extracted from the target search region in the current frame, and thereby mitigate the effects of target drift. However, such updates may corrupt the templates, limiting the potential benefits of a template update strategy. This paper proposes a model adaptation method for Siamese trackers that uses a generative model to produce a synthetic template from the object search regions of several previous frames, rather than directly using the tracker output. Since the search region encompasses the target, attention from the search region is used for robust model adaptation. In particular, our approach relies on an auto-encoder trained through adversarial learning to detect changes in a target object's appearance and predict a future target template, using a set of target templates localized from tracker outputs at previous frames. To prevent template corruption during the update, the proposed tracker also performs change detection with the generative model, suspending updates until the tracker stabilizes; robust matching can then resume through dynamic template fusion. Extensive experiments on the VOT-16, VOT-17, OTB-50, and OTB-100 datasets highlight the effectiveness of our method, along with the impact of its key components. Results indicate that our approach can outperform state-of-the-art trackers, and its overall robustness allows it to track for longer before failure.
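The gating behavior described in the abstract (suspend updates when a change is detected, resume once the tracker stabilizes, then fuse templates) can be sketched as below. This is a hypothetical illustration: the per-frame change score and the predicted template are passed in as inputs, standing in for the adversarially trained auto-encoder, and the `threshold`, `patience`, and fixed-weight fusion with the initial template are assumptions rather than the paper's dynamic fusion rule.

```python
from dataclasses import dataclass, field
from typing import List

Vector = List[float]


def fuse(templates: List[Vector], weights: List[float]) -> Vector:
    """Weighted average of equally sized template feature vectors."""
    total = sum(weights)
    dim = len(templates[0])
    return [sum(w * t[k] for t, w in zip(templates, weights)) / total
            for k in range(dim)]


@dataclass
class GatedTemplateUpdater:
    initial: Vector           # template from the first frame
    threshold: float          # hypothetical change-score threshold
    patience: int = 3         # stable frames required before updates resume
    alpha: float = 0.5        # hypothetical fixed weight of the initial template
    current: Vector = field(init=False)
    suspended: bool = field(default=False, init=False)
    _stable: int = field(default=0, init=False)

    def __post_init__(self) -> None:
        self.current = list(self.initial)

    def step(self, predicted: Vector, change_score: float) -> Vector:
        """Return the template to use for matching in the current frame."""
        if change_score > self.threshold:
            # Change detected: freeze the template and reset the stability count.
            self.suspended = True
            self._stable = 0
            return self.current
        if self.suspended:
            # Wait for `patience` consecutive low-score frames before resuming.
            self._stable += 1
            if self._stable < self.patience:
                return self.current
            self.suspended = False
        # Blend the initial template with the predicted one.
        self.current = fuse([self.initial, predicted],
                            [self.alpha, 1.0 - self.alpha])
        return self.current
```

For example, with `threshold=1.0` and `patience=2`, a frame scoring `5.0` freezes the template, and fusion only resumes on the second consecutive frame scoring below the threshold. Anchoring the fusion to the initial template is one simple way to limit drift; the actual fusion weights in the paper are not given in the abstract.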
