Radu Mărginean

When Does Machine Learning FAIL? Generalized Transferability for Evasion and Poisoning Attacks

Mar 19, 2018

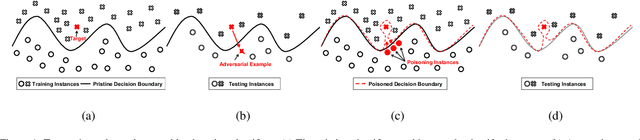

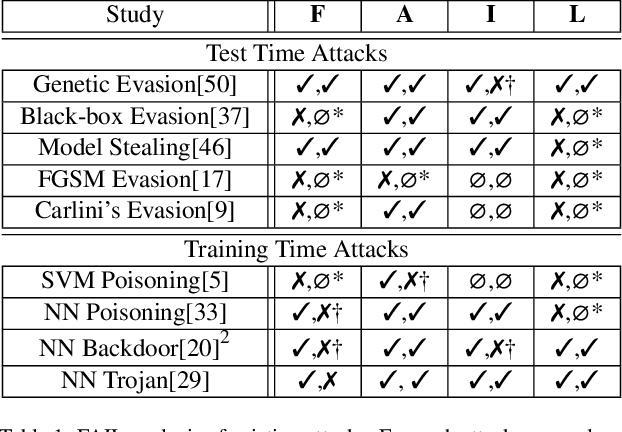

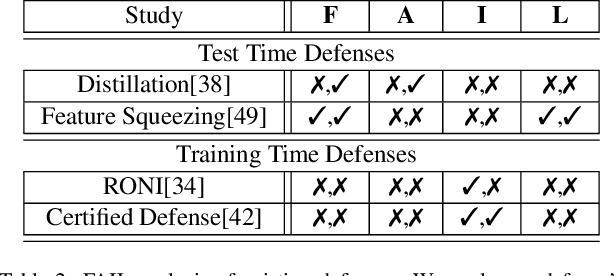

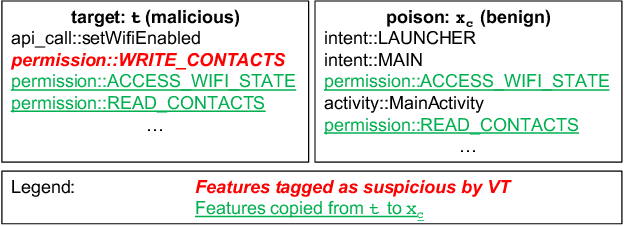

Abstract:Attacks against machine learning systems represent a growing threat as highlighted by the abundance of attacks proposed lately. However, attacks often make unrealistic assumptions about the knowledge and capabilities of adversaries. To evaluate this threat systematically, we propose the FAIL attacker model, which describes the adversary's knowledge and control along four dimensions. The FAIL model allows us to consider a wide range of weaker adversaries that have limited control and incomplete knowledge of the features, learning algorithms and training instances utilized. Within this framework, we evaluate the generalized transferability of a known evasion attack and we design StingRay, a targeted poisoning attack that is broadly applicable---it is practical against 4 machine learning applications, which use 3 different learning algorithms, and it can bypass 2 existing defenses. Our evaluation provides deeper insights into the transferability of poison and evasion samples across models and suggests promising directions for investigating defenses against this threat.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge