Puspita Triana Dewi

Open Continuum Robotics -- One Actuation Module to Create them All

Apr 24, 2023

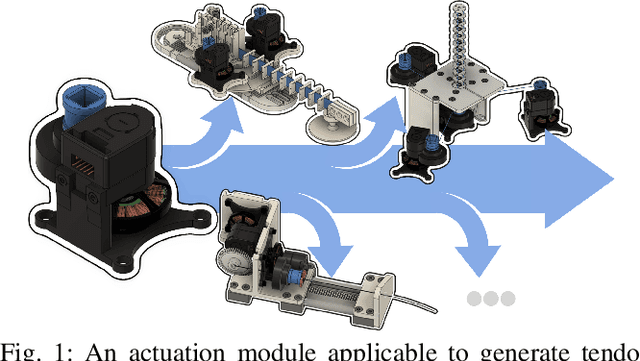

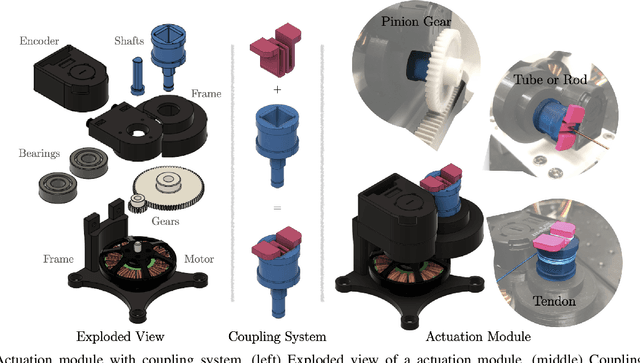

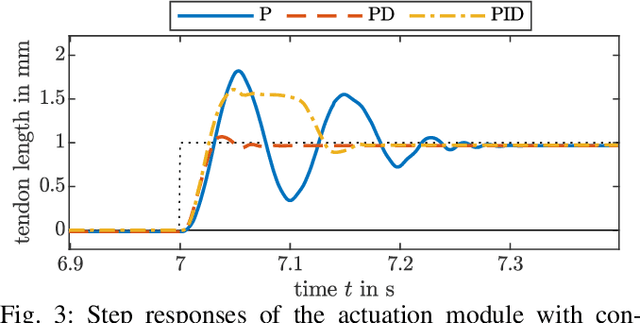

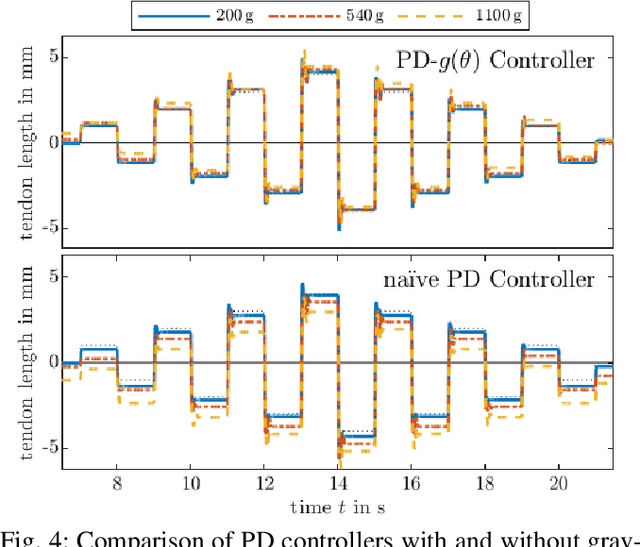

Abstract: Experiments on physical continuum robots are the gold standard for evaluations. Currently, as no commercial continuum robot platform is available, a large variety of early-stage prototypes exists. These prototypes are developed by individual research groups and are often used for a single publication. Thus, a significant amount of time is devoted to creating proprietary hardware and software, which hinders the development of a common platform and diverts scarce time and effort from the main research challenges. We address this problem by proposing an open-source actuation module, which can be used to build different types of continuum robots. It consists of a high-torque brushless electric motor, a high-resolution optical encoder, and a low-gear-ratio transmission. For this letter, we create three different types of continuum robots. In addition, we illustrate, for the first time, that continuum robots built with our actuation module can proprioceptively detect external forces. Consequently, our approach opens untapped and under-investigated research directions related to the dynamics and advanced control of continuum robots, where sensing the generalized flow and effort is mandatory. Besides that, we democratize continuum robot research by providing open-source software and hardware through our initiative, the Open Continuum Robotics Project, to increase the accessibility and reproducibility of advanced methods.

MoSS: Monocular Shape Sensing for Continuum Robots

Mar 02, 2023

Abstract: Continuum robots are promising candidates for interactive tasks in various applications due to their unique shape, compliance, and miniaturization capability. Accurate and real-time shape sensing is essential for such tasks yet remains a challenge. Embedded shape sensing has high hardware complexity and cost, while vision-based methods require a stereo setup and struggle to achieve real-time performance. This paper proposes the first eye-to-hand monocular approach to continuum robot shape sensing. Utilizing a deep encoder-decoder network, our method, MoSSNet, eliminates the computation cost of stereo matching and reduces requirements on sensing hardware. In particular, MoSSNet comprises an encoder and three parallel decoders to uncover spatial, length, and contour information from a single RGB image, and then obtains the 3D shape through curve fitting. A two-segment tendon-driven continuum robot is used for data collection and testing, demonstrating accurate (mean shape error of 0.91 mm, or 0.36% of robot length) and real-time (70 fps) shape sensing on real-world data. Additionally, the method is optimized end-to-end and does not require fiducial markers, manual segmentation, or camera calibration. Code and datasets will be made available at https://github.com/ContinuumRoboticsLab/MoSSNet.
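
As a rough illustration of the final curve-fitting step and the mean shape error metric mentioned in the abstract, the sketch below fits a 3D curve to a set of points and compares sampled shapes. It is not the MoSSNet implementation: the abstract does not state the curve model, so the per-coordinate polynomial, the chord-length parametrization, and the function names `fit_curve`, `sample_curve`, and `mean_shape_error` are all assumptions made for illustration.

```python
import numpy as np


def fit_curve(points, degree=3):
    """Fit one polynomial per coordinate (assumed model, not from the paper).

    points: (N, 3) array of 3D points ordered from base to tip.
    Returns a list of three coefficient arrays, one per axis.
    """
    points = np.asarray(points, dtype=float)
    # Normalized cumulative chord length serves as the curve parameter s in [0, 1].
    seg = np.linalg.norm(np.diff(points, axis=0), axis=1)
    s = np.concatenate(([0.0], np.cumsum(seg)))
    s /= s[-1]
    return [np.polyfit(s, points[:, k], degree) for k in range(3)]


def sample_curve(coeffs, n=50):
    """Evaluate the fitted curve at n evenly spaced parameter values."""
    s = np.linspace(0.0, 1.0, n)
    return np.stack([np.polyval(c, s) for c in coeffs], axis=1)


def mean_shape_error(pred, truth):
    """Mean Euclidean distance between corresponding points of two shapes."""
    pred = np.asarray(pred, dtype=float)
    truth = np.asarray(truth, dtype=float)
    return float(np.mean(np.linalg.norm(pred - truth, axis=1)))
```

Dividing the mean shape error by the robot length gives the relative figure. Taken together, the abstract's two numbers (0.91 mm and 0.36%) imply a robot length of roughly 0.91 / 0.0036 ≈ 253 mm.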
