Pier-Luca Lanzi

A Cognitive Architecture Based on a Learning Classifier System with Spiking Classifiers

Aug 31, 2015

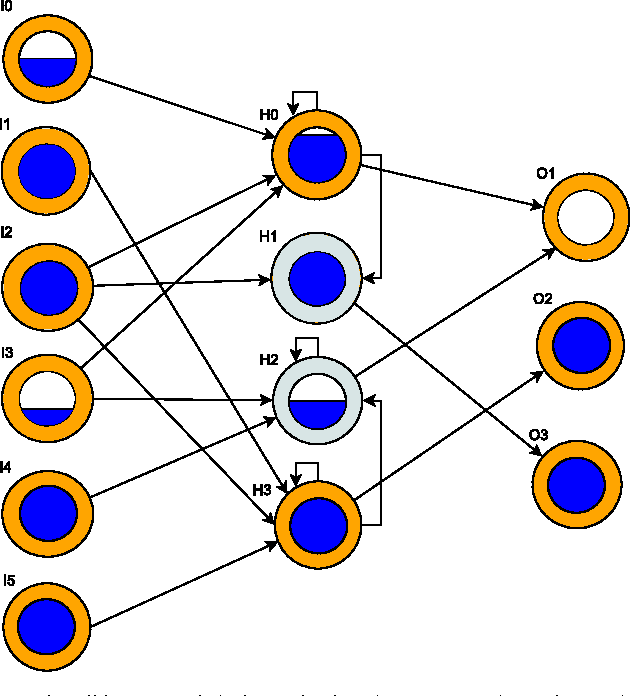

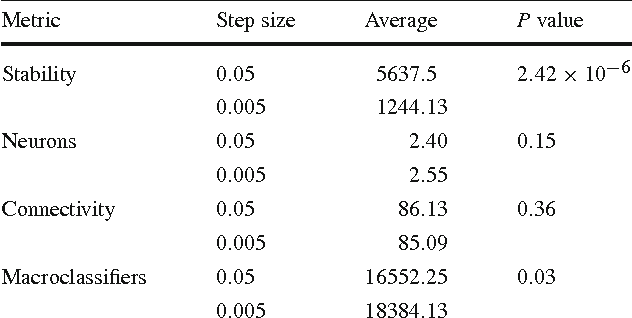

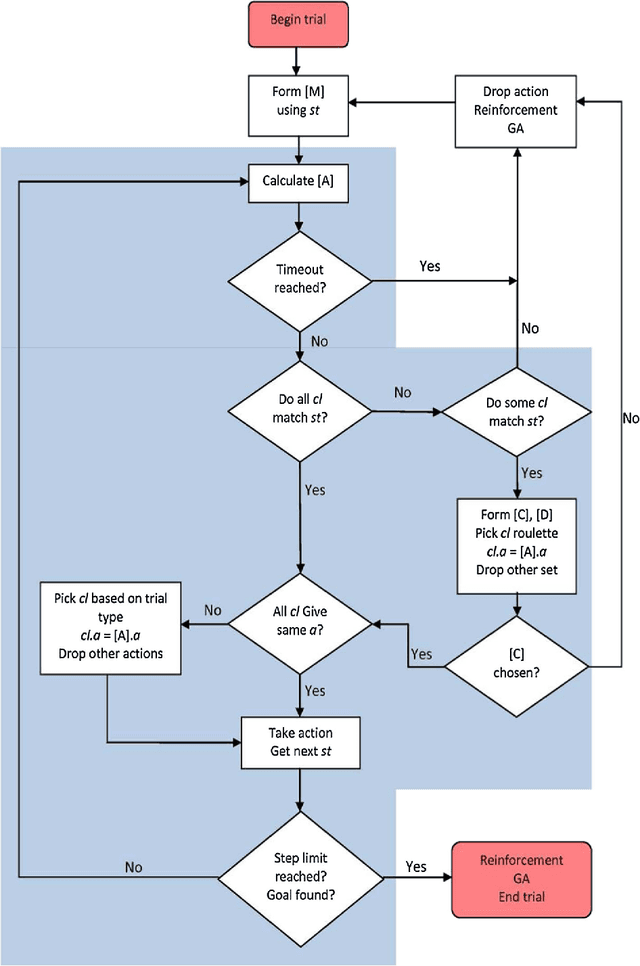

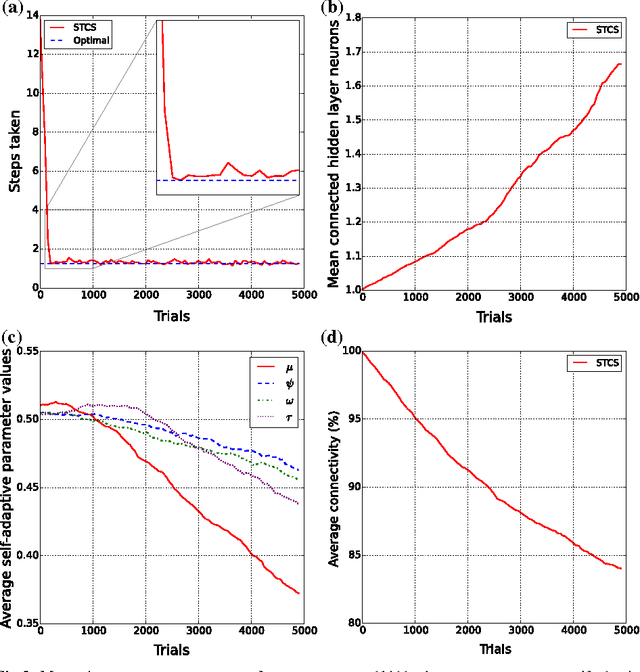

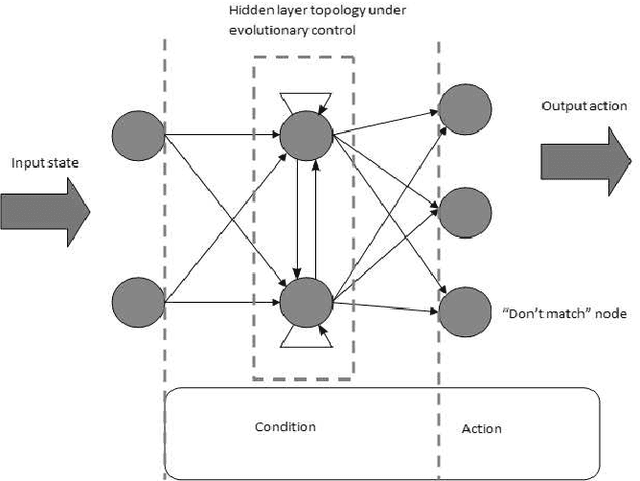

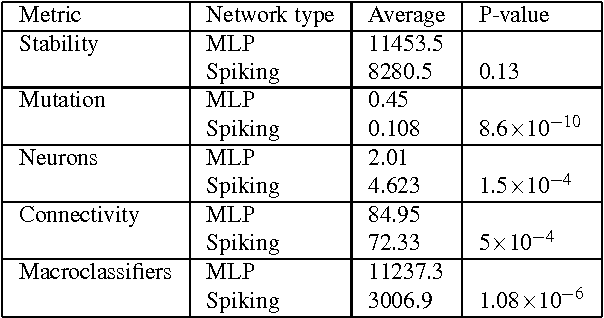

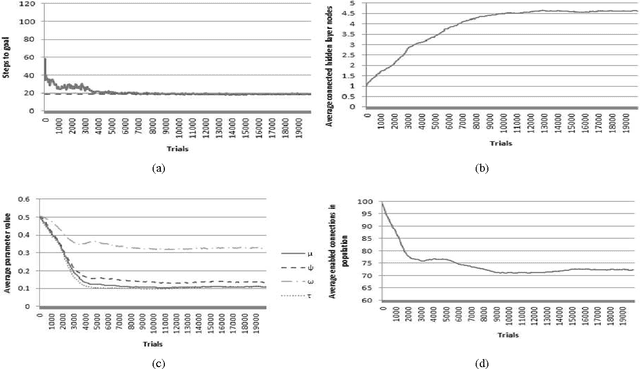

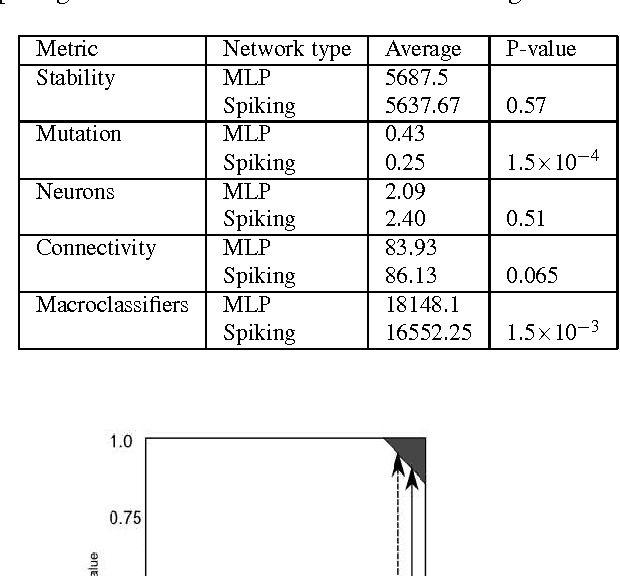

Abstract: Learning Classifier Systems (LCS) are population-based reinforcement learners that were originally designed to model various cognitive phenomena. This paper presents an explicitly cognitive LCS by using spiking neural networks as classifiers, providing each classifier with a measure of temporal dynamism. We employ a constructivist model of growth of both neurons and synaptic connections, which permits a Genetic Algorithm (GA) to automatically evolve sufficiently complex neural structures. The spiking classifiers are coupled with a temporally-sensitive reinforcement learning algorithm, which allows the system to perform temporal state decomposition by appropriately rewarding "macro-actions," created by chaining together multiple atomic actions. The combination of temporal reinforcement learning and neural information processing is shown to outperform benchmark neural classifier systems, and successfully solve a robotic navigation task.

A Spiking Neural Learning Classifier System

Jan 16, 2012

Abstract: Learning Classifier Systems (LCS) are population-based reinforcement learners used in a wide variety of applications. This paper presents an LCS where each traditional rule is represented by a spiking neural network, a type of network with dynamic internal state. We employ a constructivist model of growth of both neurons and dendrites that realise flexible learning by evolving structures of sufficient complexity to solve a well-known problem involving continuous, real-valued inputs. Additionally, we extend the system to enable temporal state decomposition. By allowing our LCS to chain together sequences of heterogeneous actions into macro-actions, it is shown to perform optimally in a problem where traditional methods can fail to find a solution in a reasonable amount of time. Our final system is tested on a simulated robotics platform.
