Philipp Ratz

EquiPy: Sequential Fairness using Optimal Transport in Python

Mar 12, 2025Abstract:Algorithmic fairness has received considerable attention due to the failures of various predictive AI systems that have been found to be unfairly biased against subgroups of the population. Many approaches have been proposed to mitigate such biases in predictive systems, however, they often struggle to provide accurate estimates and transparent correction mechanisms in the case where multiple sensitive variables, such as a combination of gender and race, are involved. This paper introduces a new open source Python package, EquiPy, which provides a easy-to-use and model agnostic toolbox for efficiently achieving fairness across multiple sensitive variables. It also offers comprehensive graphic utilities to enable the user to interpret the influence of each sensitive variable within a global context. EquiPy makes use of theoretical results that allow the complexity arising from the use of multiple variables to be broken down into easier-to-solve sub-problems. We demonstrate the ease of use for both mitigation and interpretation on publicly available data derived from the US Census and provide sample code for its use.

Geospatial Disparities: A Case Study on Real Estate Prices in Paris

Jan 29, 2024

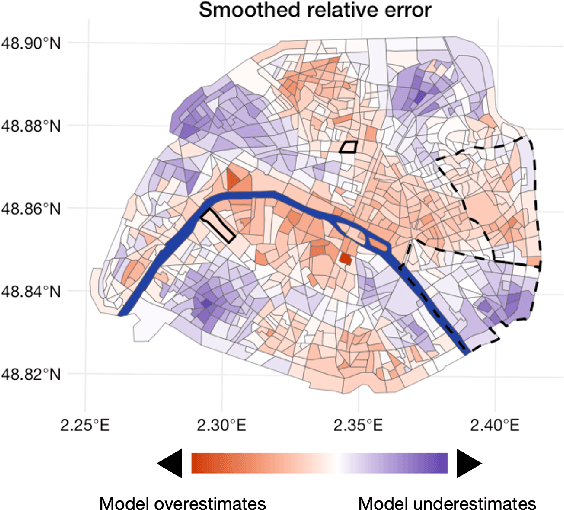

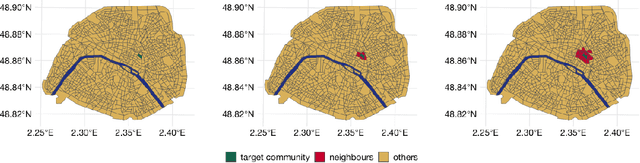

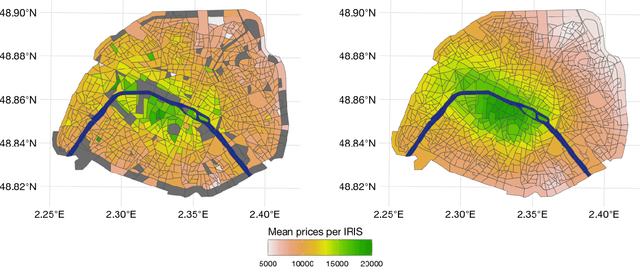

Abstract:Driven by an increasing prevalence of trackers, ever more IoT sensors, and the declining cost of computing power, geospatial information has come to play a pivotal role in contemporary predictive models. While enhancing prognostic performance, geospatial data also has the potential to perpetuate many historical socio-economic patterns, raising concerns about a resurgence of biases and exclusionary practices, with their disproportionate impacts on society. Addressing this, our paper emphasizes the crucial need to identify and rectify such biases and calibration errors in predictive models, particularly as algorithms become more intricate and less interpretable. The increasing granularity of geospatial information further introduces ethical concerns, as choosing different geographical scales may exacerbate disparities akin to redlining and exclusionary zoning. To address these issues, we propose a toolkit for identifying and mitigating biases arising from geospatial data. Extending classical fairness definitions, we incorporate an ordinal regression case with spatial attributes, deviating from the binary classification focus. This extension allows us to gauge disparities stemming from data aggregation levels and advocates for a less interfering correction approach. Illustrating our methodology using a Parisian real estate dataset, we showcase practical applications and scrutinize the implications of choosing geographical aggregation levels for fairness and calibration measures.

Parametric Fairness with Statistical Guarantees

Oct 31, 2023

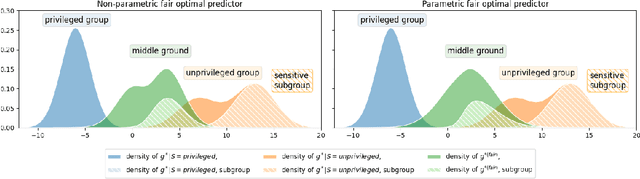

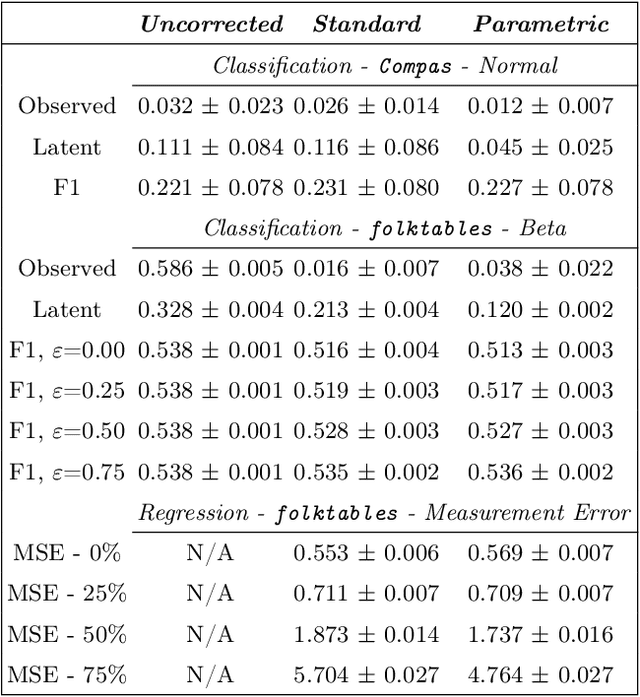

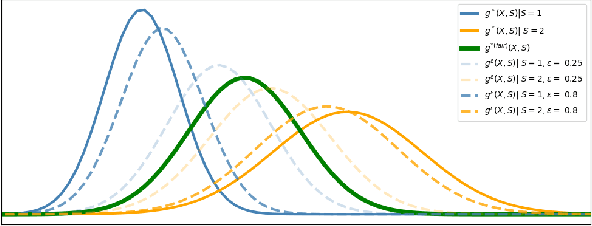

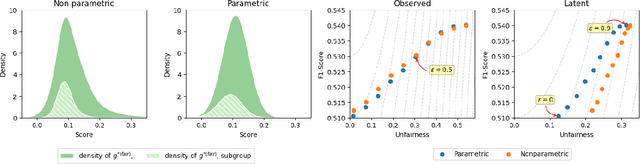

Abstract:Algorithmic fairness has gained prominence due to societal and regulatory concerns about biases in Machine Learning models. Common group fairness metrics like Equalized Odds for classification or Demographic Parity for both classification and regression are widely used and a host of computationally advantageous post-processing methods have been developed around them. However, these metrics often limit users from incorporating domain knowledge. Despite meeting traditional fairness criteria, they can obscure issues related to intersectional fairness and even replicate unwanted intra-group biases in the resulting fair solution. To avoid this narrow perspective, we extend the concept of Demographic Parity to incorporate distributional properties in the predictions, allowing expert knowledge to be used in the fair solution. We illustrate the use of this new metric through a practical example of wages, and develop a parametric method that efficiently addresses practical challenges like limited training data and constraints on total spending, offering a robust solution for real-life applications.

A Sequentially Fair Mechanism for Multiple Sensitive Attributes

Sep 12, 2023

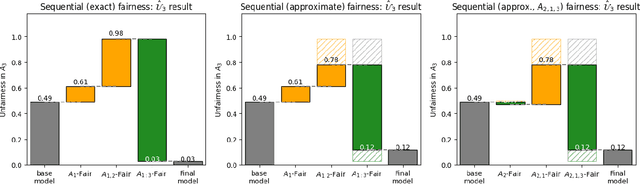

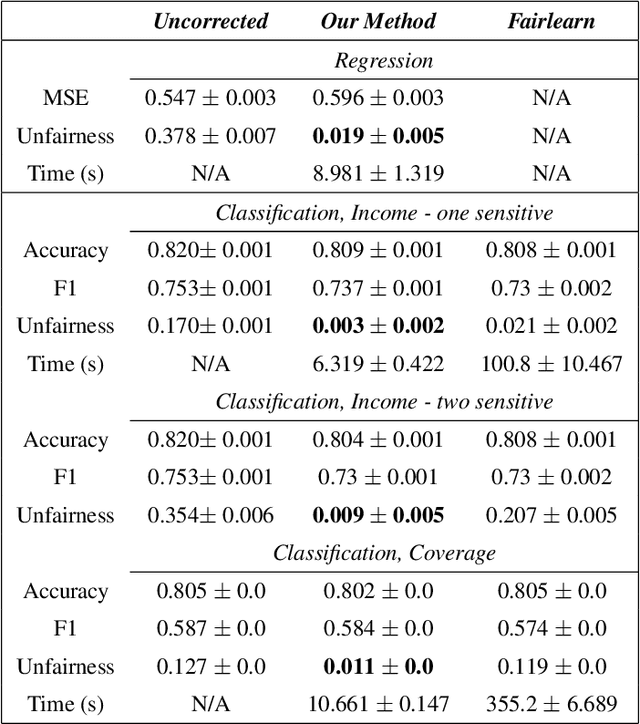

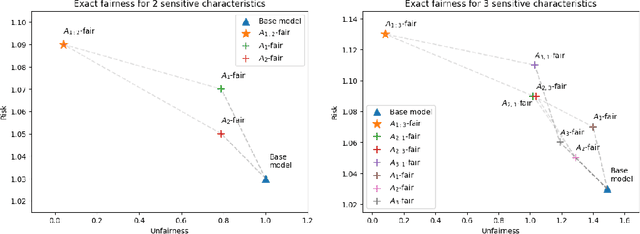

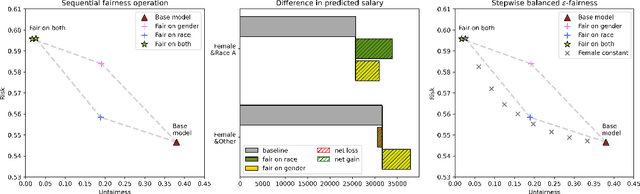

Abstract:In the standard use case of Algorithmic Fairness, the goal is to eliminate the relationship between a sensitive variable and a corresponding score. Throughout recent years, the scientific community has developed a host of definitions and tools to solve this task, which work well in many practical applications. However, the applicability and effectivity of these tools and definitions becomes less straightfoward in the case of multiple sensitive attributes. To tackle this issue, we propose a sequential framework, which allows to progressively achieve fairness across a set of sensitive features. We accomplish this by leveraging multi-marginal Wasserstein barycenters, which extends the standard notion of Strong Demographic Parity to the case with multiple sensitive characteristics. This method also provides a closed-form solution for the optimal, sequentially fair predictor, permitting a clear interpretation of inter-sensitive feature correlations. Our approach seamlessly extends to approximate fairness, enveloping a framework accommodating the trade-off between risk and unfairness. This extension permits a targeted prioritization of fairness improvements for a specific attribute within a set of sensitive attributes, allowing for a case specific adaptation. A data-driven estimation procedure for the derived solution is developed, and comprehensive numerical experiments are conducted on both synthetic and real datasets. Our empirical findings decisively underscore the practical efficacy of our post-processing approach in fostering fair decision-making.

Addressing Fairness and Explainability in Image Classification Using Optimal Transport

Aug 22, 2023Abstract:Algorithmic Fairness and the explainability of potentially unfair outcomes are crucial for establishing trust and accountability of Artificial Intelligence systems in domains such as healthcare and policing. Though significant advances have been made in each of the fields separately, achieving explainability in fairness applications remains challenging, particularly so in domains where deep neural networks are used. At the same time, ethical data-mining has become ever more relevant, as it has been shown countless times that fairness-unaware algorithms result in biased outcomes. Current approaches focus on mitigating biases in the outcomes of the model, but few attempts have been made to try to explain \emph{why} a model is biased. To bridge this gap, we propose a comprehensive approach that leverages optimal transport theory to uncover the causes and implications of biased regions in images, which easily extends to tabular data as well. Through the use of Wasserstein barycenters, we obtain scores that are independent of a sensitive variable but keep their marginal orderings. This step ensures predictive accuracy but also helps us to recover the regions most associated with the generation of the biases. Our findings hold significant implications for the development of trustworthy and unbiased AI systems, fostering transparency, accountability, and fairness in critical decision-making scenarios across diverse domains.

Mitigating Discrimination in Insurance with Wasserstein Barycenters

Jun 22, 2023Abstract:The insurance industry is heavily reliant on predictions of risks based on characteristics of potential customers. Although the use of said models is common, researchers have long pointed out that such practices perpetuate discrimination based on sensitive features such as gender or race. Given that such discrimination can often be attributed to historical data biases, an elimination or at least mitigation is desirable. With the shift from more traditional models to machine-learning based predictions, calls for greater mitigation have grown anew, as simply excluding sensitive variables in the pricing process can be shown to be ineffective. In this article, we first investigate why predictions are a necessity within the industry and why correcting biases is not as straightforward as simply identifying a sensitive variable. We then propose to ease the biases through the use of Wasserstein barycenters instead of simple scaling. To demonstrate the effects and effectiveness of the approach we employ it on real data and discuss its implications.

Fairness in Multi-Task Learning via Wasserstein Barycenters

Jun 16, 2023Abstract:Algorithmic Fairness is an established field in machine learning that aims to reduce biases in data. Recent advances have proposed various methods to ensure fairness in a univariate environment, where the goal is to de-bias a single task. However, extending fairness to a multi-task setting, where more than one objective is optimised using a shared representation, remains underexplored. To bridge this gap, we develop a method that extends the definition of \textit{Strong Demographic Parity} to multi-task learning using multi-marginal Wasserstein barycenters. Our approach provides a closed form solution for the optimal fair multi-task predictor including both regression and binary classification tasks. We develop a data-driven estimation procedure for the solution and run numerical experiments on both synthetic and real datasets. The empirical results highlight the practical value of our post-processing methodology in promoting fair decision-making.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge