Peter G. M. van der Heijden

Identification of NMF by choosing maximum-volume basis vectors

Mar 25, 2026

Abstract: In nonnegative matrix factorization (NMF), minimum-volume-constrained NMF is a widely used framework for identifying the solution of NMF by making basis vectors as similar as possible. This typically induces sparsity in the coefficient matrix, with each row containing zero entries. Consequently, minimum-volume-constrained NMF may fail for highly mixed data, where such sparsity does not hold. Moreover, the estimated basis vectors in minimum-volume-constrained NMF may be difficult to interpret as they may be mixtures of the ground truth basis vectors. To address these limitations, in this paper we propose a new NMF framework, called maximum-volume-constrained NMF, which makes the basis vectors as distinct as possible. We further establish an identifiability theorem for maximum-volume-constrained NMF and provide an algorithm to estimate it. Experimental results demonstrate the effectiveness of the proposed method.

A review of NMF, PLSA, LBA, EMA, and LCA with a focus on the identifiability issue

Dec 25, 2025

Abstract: Across fields such as machine learning, social science, and geography, considerable attention has been given to models that factorize a nonnegative matrix into the product of two or three matrices, subject to nonnegative or row-sum-to-1 constraints. Although these models are to a large extent similar or even equivalent, they are presented under different names, and their similarity is not well known. This paper highlights similarities among five popular models: latent budget analysis (LBA), latent class analysis (LCA), end-member analysis (EMA), probabilistic latent semantic analysis (PLSA), and nonnegative matrix factorization (NMF). We focus on an essential issue of these models, identifiability, and prove that the solution of LBA, EMA, LCA, PLSA is unique if and only if the solution of NMF is unique. We also provide a brief review of algorithms for these models. We illustrate the models with a time budget dataset from social science, and end the paper with a discussion of closely related models such as archetypal analysis.

A comparison of correspondence analysis with PMI-based word embedding methods

May 31, 2024

Abstract: Popular word embedding methods such as GloVe and Word2Vec are related to the factorization of the pointwise mutual information (PMI) matrix. In this paper, we link correspondence analysis (CA) to the factorization of the PMI matrix. CA is a dimensionality reduction method that uses singular value decomposition (SVD), and we show that CA is mathematically close to the weighted factorization of the PMI matrix. In addition, we present variants of CA that turn out to be successful in the factorization of the word-context matrix, i.e. CA applied to a matrix where the entries undergo a square-root transformation (ROOT-CA) and a root-root transformation (ROOTROOT-CA). An empirical comparison among CA- and PMI-based methods shows that overall results of ROOT-CA and ROOTROOT-CA are slightly better than those of the PMI-based methods.
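
The closeness between CA and PMI factorization mentioned in this abstract can be sketched with the standard textbook definitions. The notation below is an assumption made for illustration and is not taken from the paper: $p_{ij}$ is the relative frequency of word $i$ with context $j$, and $r_i$ and $c_j$ are the row and column margins of the matrix $(p_{ij})$.

```latex
\begin{align*}
\mathrm{PMI}_{ij} &= \log \frac{p_{ij}}{r_i c_j}, \\
s_{ij} &= \frac{p_{ij} - r_i c_j}{\sqrt{r_i c_j}}
        = \sqrt{r_i c_j}\,\Bigl(\frac{p_{ij}}{r_i c_j} - 1\Bigr), \\
\log x &\approx x - 1 \ \text{for } x \text{ near } 1
  \quad\Longrightarrow\quad
  \frac{p_{ij}}{r_i c_j} - 1 \approx \mathrm{PMI}_{ij}
  \ \text{when } \frac{p_{ij}}{r_i c_j} \approx 1 .
\end{align*}
```

CA takes the SVD of the matrix with entries $s_{ij}$, which amounts to a low-rank least-squares fit of $p_{ij}/(r_i c_j) - 1$ with weights $r_i c_j$. Under the approximation above, this is close to a weighted factorization of the PMI matrix whenever the observed frequencies do not deviate much from independence.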

Using Chao's Estimator as a Stopping Criterion for Technology-Assisted Review

Apr 01, 2024

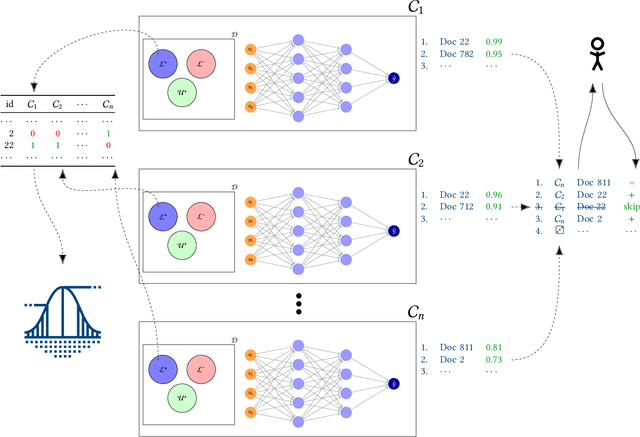

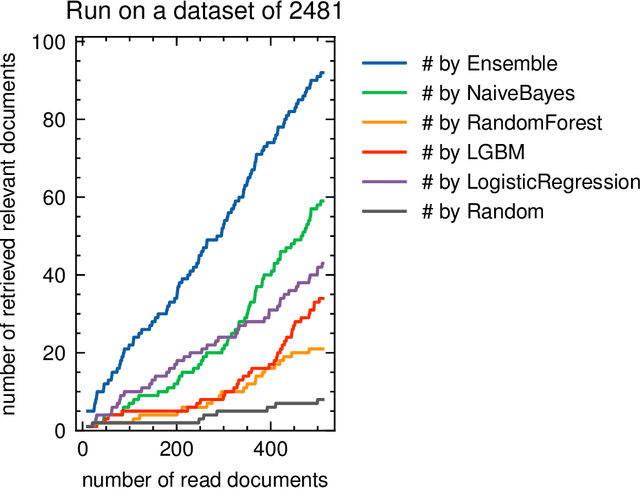

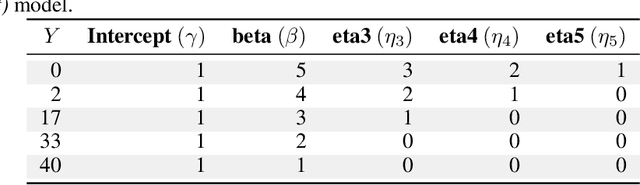

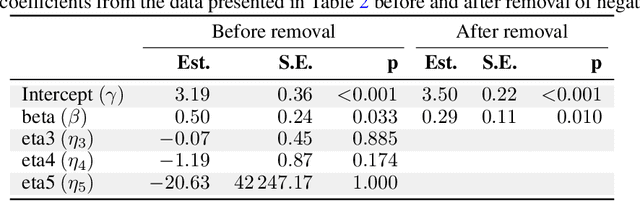

Abstract: Technology-Assisted Review (TAR) aims to reduce the human effort required for screening processes such as abstract screening for systematic literature reviews. Human reviewers label documents as relevant or irrelevant during this process, while the system incrementally updates a prediction model based on the reviewers' previous decisions. After each model update, the system proposes new documents it deems relevant, to prioritize relevant documents over irrelevant ones. A stopping criterion is necessary to guide users in stopping the review process to minimize the number of missed relevant documents and the number of read irrelevant documents. In this paper, we propose and evaluate a new ensemble-based Active Learning strategy and a stopping criterion based on Chao's Population Size Estimator that estimates the prevalence of relevant documents in the dataset. Our simulation study demonstrates that this criterion performs well on several datasets, and we compare it with other methods presented in the literature.
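
As a rough illustration of how a population size estimate can drive a stopping rule, the sketch below uses the classical Chao lower-bound estimator on capture counts. It is not the paper's implementation: the abstract does not state which variant of Chao's estimator is used, and the function names, the `capture_counts` input (how many ensemble members found each relevant document), and the `target_recall` threshold are all assumptions made for this example.

```python
from collections import Counter


def chao1_estimate(capture_counts):
    """Classical Chao lower bound for the total number of relevant documents.

    capture_counts: one integer per relevant document found so far, giving
    how many times (e.g. by how many ensemble members) it was found.
    """
    positive = [c for c in capture_counts if c > 0]
    observed = len(positive)
    freq = Counter(positive)
    f1, f2 = freq[1], freq[2]  # documents found exactly once / exactly twice
    if f2 > 0:
        return observed + f1 * f1 / (2 * f2)
    # Bias-corrected form, used when no document was found exactly twice.
    return observed + f1 * (f1 - 1) / 2


def should_stop(capture_counts, target_recall=0.95):
    """Hypothetical rule: stop once the found documents reach the target
    share of the estimated total."""
    observed = sum(1 for c in capture_counts if c > 0)
    return observed >= target_recall * chao1_estimate(capture_counts)
```

With many documents found only once, the estimate stays well above the observed count and the rule keeps the review going; once most documents have been found repeatedly, the estimate approaches the observed count and the rule signals a stop.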

Improving information retrieval through correspondence analysis instead of latent semantic analysis

Mar 14, 2023

Abstract: Both latent semantic analysis (LSA) and correspondence analysis (CA) are dimensionality reduction techniques that use singular value decomposition (SVD) for information retrieval. Theoretically, the results of LSA display both the association between documents and terms, and marginal effects; in comparison, CA only focuses on the associations between documents and terms. Marginal effects are usually not relevant for information retrieval, and therefore, from a theoretical perspective CA is more suitable for information retrieval. In this paper, we empirically compare LSA and CA. The elements of the raw document-term matrix are weighted, and the weighting exponent of singular values is adjusted to improve the performance of LSA. We explore whether these two weightings also improve the performance of CA. In addition, we compare the optimal singular value weighting exponents for LSA and CA to identify what the initial dimensions in LSA correspond to. The results for four empirical datasets show that CA always performs better than LSA. Weighting the elements of the raw data matrix can improve CA; however, it is data dependent and the improvement is small. Adjusting the singular value weighting exponent usually improves the performance of CA; however, the extent of the improved performance depends on the dataset and number of dimensions. In general, CA needs a larger singular value weighting exponent than LSA to obtain the optimal performance. This indicates that CA emphasizes initial dimensions more than LSA, and thus, margins play an important role in the initial dimensions in LSA.

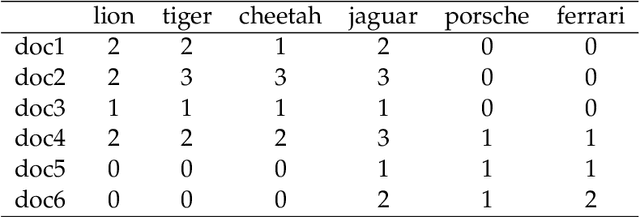

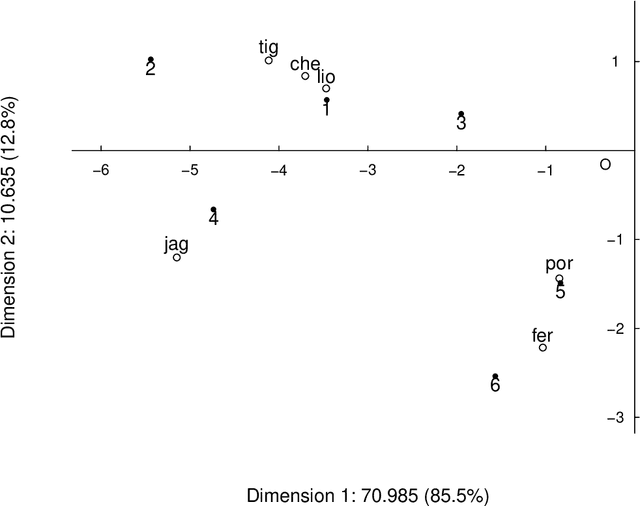

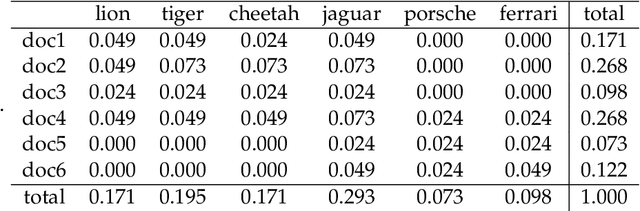

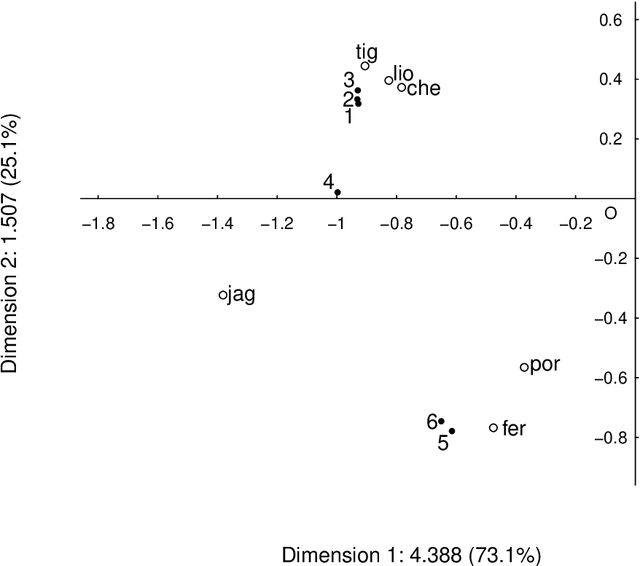

A Comparison of Latent Semantic Analysis and Correspondence Analysis for Text Mining

Jul 25, 2021

Abstract: Both latent semantic analysis (LSA) and correspondence analysis (CA) use a singular value decomposition (SVD) for dimensionality reduction. In this article, LSA and CA are compared from a theoretical point of view and applied in both a toy example and an authorship attribution example. In text mining, interest lies in the relationships among documents and terms: for example, which terms are used more often in which documents. However, the LSA solution displays a mix of marginal effects and these relationships. It appears that CA has more attractive properties than LSA. One such property is that, in CA, the effect of the margins is effectively eliminated, so that the CA solution is optimally suited to focus on the relationships among documents and terms. Three mechanisms are distinguished to weight documents and terms, and a unifying framework is proposed that includes these three mechanisms and includes both CA and LSA as special cases. The application of the discussed methods is illustrated in the authorship attribution example, which concerns the national anthem of the Netherlands.
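
The claim that CA eliminates the effect of the margins can be illustrated with a small sketch. It computes the matrix of standardized residuals that CA decomposes by SVD, using the standard textbook definition rather than anything specific to the article; the function name and the toy tables are assumptions made for this example, and the table is assumed to have no empty rows or columns.

```python
def standardized_residuals(table):
    """Return the matrix CA decomposes: (p_ij - r_i * c_j) / sqrt(r_i * c_j).

    table: list of rows of nonnegative counts (documents by terms),
    with no all-zero row or column.
    """
    total = sum(sum(row) for row in table)
    row_mass = [sum(row) / total for row in table]
    col_mass = [sum(col) / total for col in zip(*table)]
    return [
        [(x / total - r * c) / (r * c) ** 0.5 for x, c in zip(row, col_mass)]
        for row, r in zip(table, row_mass)
    ]


# A table whose rows are proportional carries only marginal information:
# documents differ in length, but not in which terms they prefer.
margins_only = [[2, 4], [3, 6]]

# A table where each document uses its own term carries real association.
associated = [[10, 0], [0, 10]]
```

For `margins_only` every residual is zero, so CA has nothing left to display, whereas an SVD of the raw counts, as in LSA, would still produce a dominant dimension that reflects document length and term frequency. For `associated` the residuals are nonzero and capture the document-term relationship.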
