Pei-Kai Huang

Enhancing Learnable Descriptive Convolutional Vision Transformer for Face Anti-Spoofing

Mar 29, 2025

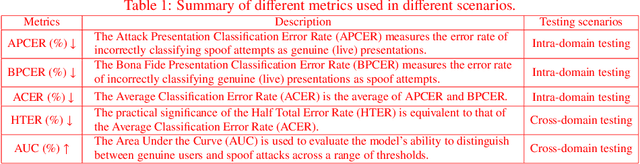

Abstract: Face anti-spoofing (FAS) relies heavily on identifying features that discriminate live from spoof faces in order to counter face presentation attacks. We recently proposed LDCformer, which incorporates Learnable Descriptive Convolution (LDC) into the Vision Transformer (ViT) to model long-range dependencies among locally descriptive features for FAS. In this paper, we propose three novel training strategies that enhance the training of LDCformer and substantially boost its feature characterization capability. The first, dual-attention supervision, learns fine-grained liveness features guided by regional live/spoof attentions. The second, self-challenging supervision, enhances the discriminability of the features by generating challenging training data. The third, transitional triplet mining, narrows the cross-domain gap while maintaining the transitional relationship between live and spoof features, thereby improving the domain-generalization capability of LDCformer. Extensive experiments show that LDCformer, under joint supervision of the three training strategies, outperforms previous methods.
