Pedro Vera-Candeas

SynthSOD: Developing an Heterogeneous Dataset for Orchestra Music Source Separation

Sep 17, 2024

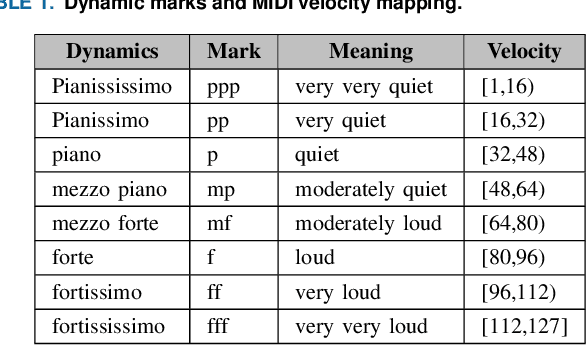

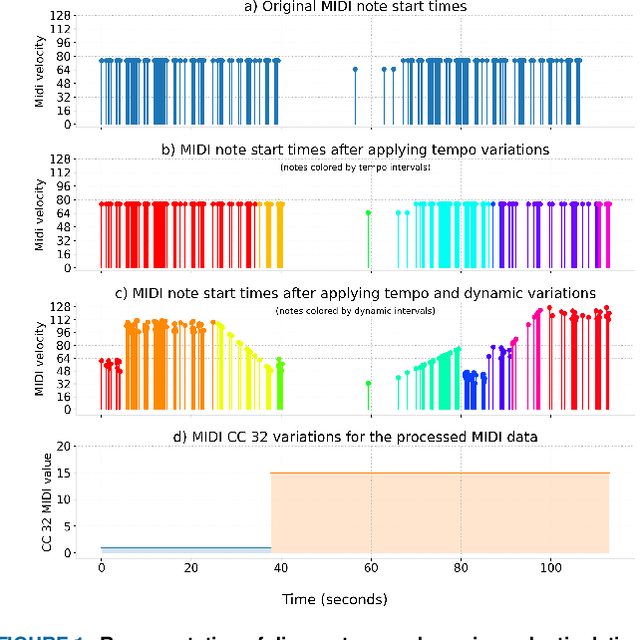

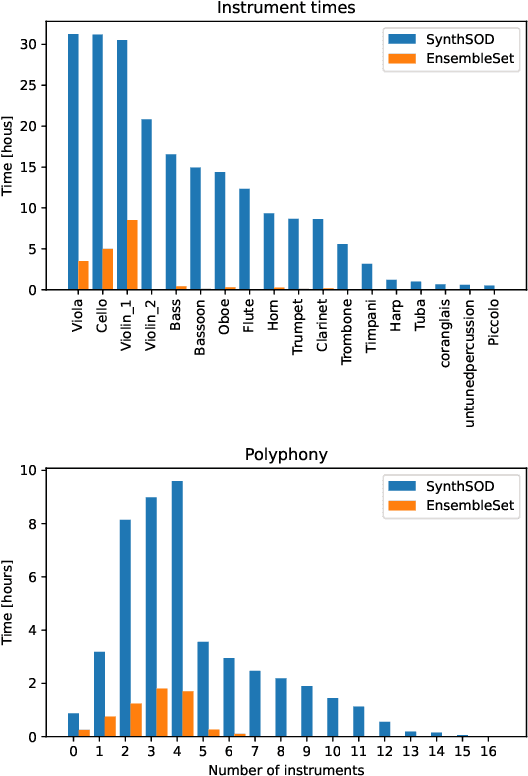

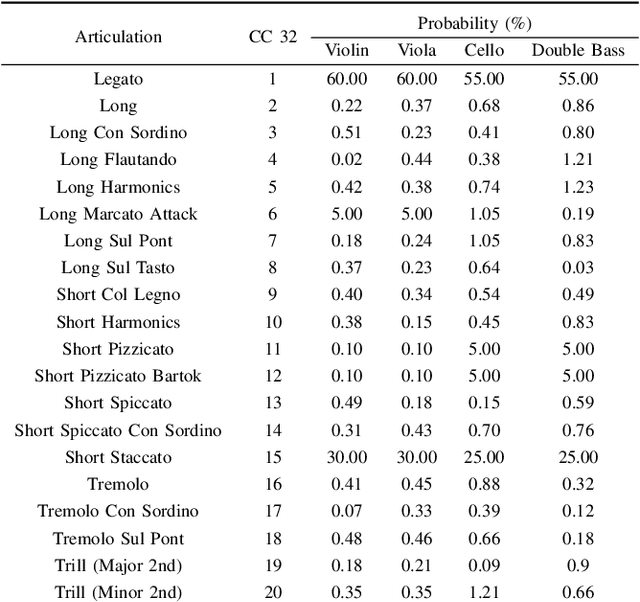

Abstract: Music source separation has progressed significantly in recent years, particularly in isolating vocals, drums, and bass from mixed tracks. These developments owe much to the creation and use of large-scale multitrack datasets dedicated to these specific components. However, the challenge of extracting similar-sounding sources from orchestra recordings has not been extensively explored, largely due to a scarcity of comprehensive and clean (i.e., bleed-free) multitrack datasets. In this paper, we introduce a novel multitrack dataset called SynthSOD, developed using a set of simulation techniques to create a realistic (i.e., using high-quality soundfonts), musically motivated, and heterogeneous training set comprising different dynamics, natural tempo changes, styles, and conditions. Moreover, we demonstrate the application of a widely used baseline music separation model trained on our synthesized dataset with respect to the well-known EnsembleSet, and evaluate its performance under both synthetic and real-world conditions.

Pre-trained Spatial Priors on Multichannel NMF for Music Source Separation

Oct 09, 2023

Abstract: This paper presents a novel approach to sound source separation that leverages spatial information obtained during the recording setup. Our method trains a spatial mixing filter using solo passages to capture information about the room impulse response and transducer response at each sensor location. This pre-trained filter is then integrated into a multichannel non-negative matrix factorization (MNMF) scheme to better capture the variances of different sound sources. The recording setup used in our experiments is the typical setup for orchestra recordings, with a main microphone and a close "cardioid" or "supercardioid" microphone for each section of the orchestra. This makes the proposed method applicable to many existing recordings. Experiments on polyphonic ensembles demonstrate the effectiveness of the proposed framework in separating individual sound sources, improving performance compared to conventional MNMF methods.
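The general idea of fixing a spatial prior learned from solo passages and then factorizing the multichannel mixture can be illustrated with a minimal sketch. This is not the paper's algorithm. It assumes a much simpler model than a spatial mixing filter: each source has one frequency-independent, non-negative gain per microphone, and the mixture magnitude spectrograms are fit with a non-negative tensor factorization under a Euclidean cost using standard multiplicative updates. The function names `estimate_spatial_gains` and `ntf_fixed_gains`, and all parameters, are hypothetical.

```python
import numpy as np


def estimate_spatial_gains(solo_specs, eps=1e-12):
    """Estimate per-channel gains for each source from solo passages.

    solo_specs: list of non-negative arrays of shape (C, F, T), one per source.
    Returns G of shape (C, S); each column sums to (approximately) 1.
    Assumption: a single frequency-independent gain per channel and source.
    """
    G = np.stack([spec.mean(axis=(1, 2)) for spec in solo_specs], axis=1)
    return G / (G.sum(axis=0, keepdims=True) + eps)


def ntf_fixed_gains(V, G, comps_per_source=4, n_iter=200, seed=0, eps=1e-12):
    """Fit V[c,f,t] ~ sum_k Gk[c,k] W[f,k] H[k,t] with the gains held fixed.

    V: non-negative mixture magnitudes, shape (C, F, T).
    G: pre-trained gains, shape (C, S).
    Component k belongs to source k // comps_per_source.
    Returns W (F, K), H (K, T) and the squared error after each iteration.
    """
    rng = np.random.default_rng(seed)
    _, F, T = V.shape
    K = G.shape[1] * comps_per_source
    Gk = np.repeat(G, comps_per_source, axis=1)  # (C, K), fixed spatial prior
    W = rng.random((F, K)) + eps
    H = rng.random((K, T)) + eps
    errors = []
    for _ in range(n_iter):
        # Multiplicative update of the spectral bases W
        V_hat = np.einsum("ck,fk,kt->cft", Gk, W, H)
        num = np.einsum("cft,ck,kt->fk", V, Gk, H)
        den = np.einsum("cft,ck,kt->fk", V_hat, Gk, H) + eps
        W *= num / den
        # Multiplicative update of the temporal activations H
        V_hat = np.einsum("ck,fk,kt->cft", Gk, W, H)
        num = np.einsum("cft,ck,fk->kt", V, Gk, W)
        den = np.einsum("cft,ck,fk->kt", V_hat, Gk, W) + eps
        H *= num / den
        V_hat = np.einsum("ck,fk,kt->cft", Gk, W, H)
        errors.append(float(np.sum((V - V_hat) ** 2)))
    return W, H, errors
```

In this sketch, the components assigned to one source (a contiguous block of `comps_per_source` columns of `W` and rows of `H`) give that source's estimated spectrogram, which could then be used to build a soft mask on the mixture. Holding `G` fixed is what plays the role of the pre-trained spatial prior here; a full MNMF would instead model frequency-dependent spatial covariances.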
