Pedro Gonnet

IndyLSTMs: Independently Recurrent LSTMs

Mar 19, 2019

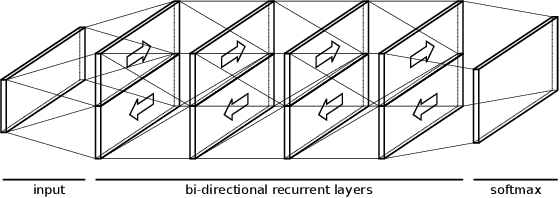

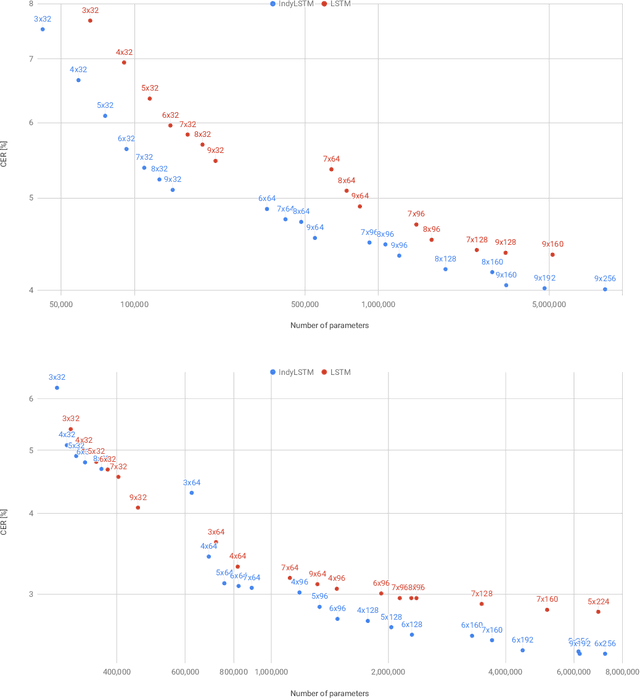

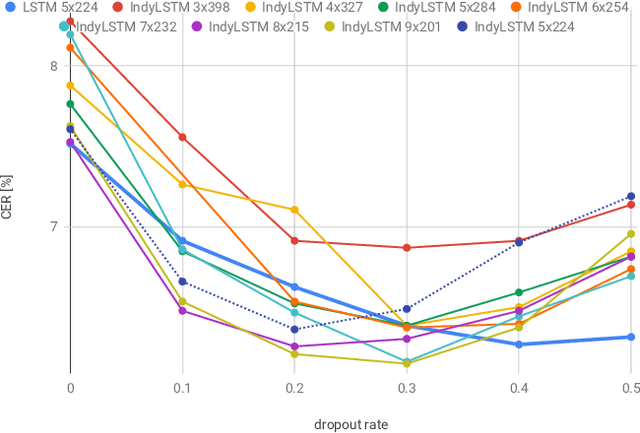

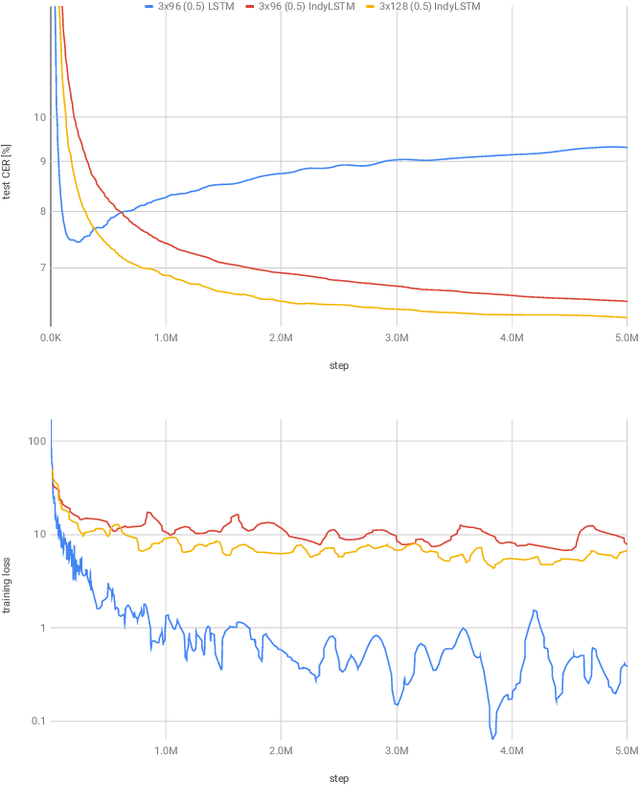

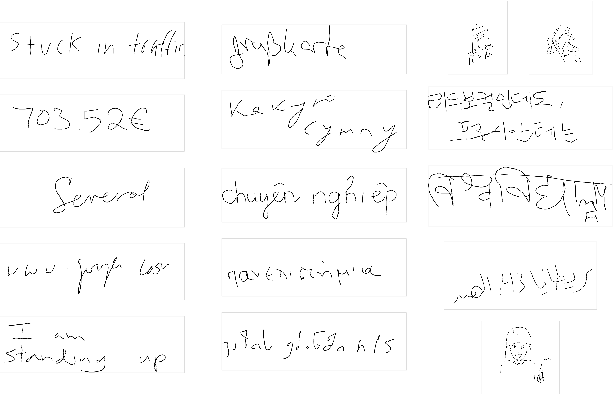

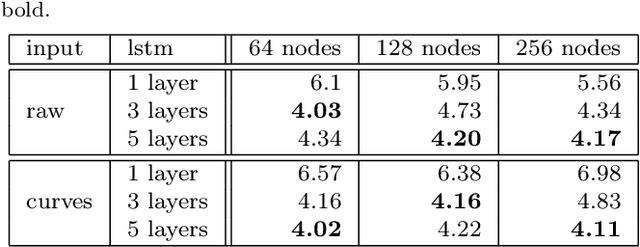

Abstract: We introduce Independently Recurrent Long Short-term Memory cells: IndyLSTMs. These differ from regular LSTM cells in that the recurrent weights are not modeled as a full matrix, but as a diagonal matrix, i.e. the output and state of each LSTM cell depend on the inputs and its own output/state, as opposed to the inputs and the outputs/states of all the cells in the layer. The number of parameters per IndyLSTM layer, and thus the number of FLOPS per evaluation, is linear in the number of nodes in the layer, as opposed to quadratic for regular LSTM layers, resulting in potentially both smaller and faster models. We evaluate their performance experimentally by training several models on the popular IAM-OnDB and CASIA online handwriting datasets, as well as on several of our in-house datasets. We show that IndyLSTMs, despite their smaller size, consistently outperform regular LSTMs both in terms of accuracy per parameter, and in best accuracy overall. We attribute this improved performance to the IndyLSTMs being less prone to overfitting.
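
To make the diagonal-recurrence idea concrete, here is a minimal sketch in plain Python. It is not taken from the paper: the gate layout (forget, input, output, candidate), the parameter names `W`, `u`, `b`, and the helper function names are all assumptions chosen for illustration. The point is that each unit `j` reads the full input vector but only its own previous output `h[j]`, so the recurrent weights per gate form a vector rather than a matrix, and for a fixed input size the parameter count grows linearly with the number of units.

```python
import math


def lstm_param_count(n_in, n_units):
    # Regular LSTM: 4 gates, each with input weights (n_in * n_units),
    # a full recurrent matrix (n_units * n_units), and a bias vector.
    return 4 * (n_in * n_units + n_units * n_units + n_units)


def indylstm_param_count(n_in, n_units):
    # IndyLSTM (as described in the abstract): the recurrent matrix is
    # diagonal, so it is stored as one scalar per unit per gate.
    return 4 * (n_in * n_units + n_units + n_units)


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def indylstm_step(x, h, c, W, u, b):
    """One time step of a hypothetical IndyLSTM layer.

    x: list of n_in inputs; h, c: previous outputs/states (n_units each).
    W[g][j]: input weights of gate g for unit j (list of n_in floats).
    u[g][j]: scalar recurrent weight of gate g for unit j (the diagonal).
    b[g][j]: bias of gate g for unit j.
    Gate keys (an assumed naming): "f", "i", "o", "c".
    """
    new_h, new_c = [], []
    for j in range(len(h)):
        def pre(g):
            # Unit j sees all inputs but only its OWN previous output h[j].
            dot = sum(w * xi for w, xi in zip(W[g][j], x))
            return dot + u[g][j] * h[j] + b[g][j]

        f = sigmoid(pre("f"))        # forget gate
        i = sigmoid(pre("i"))        # input gate
        o = sigmoid(pre("o"))        # output gate
        cand = math.tanh(pre("c"))   # candidate state
        cj = f * c[j] + i * cand
        new_c.append(cj)
        new_h.append(o * math.tanh(cj))
    return new_h, new_c
```

Under these assumptions, a layer with 10 inputs and 100 units has 44,400 parameters as a regular LSTM and 4,800 as an IndyLSTM. The quadratic recurrent term is the only part that changes; the input weights remain `n_in * n_units` in both cases.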

Fast Multi-language LSTM-based Online Handwriting Recognition

Feb 22, 2019

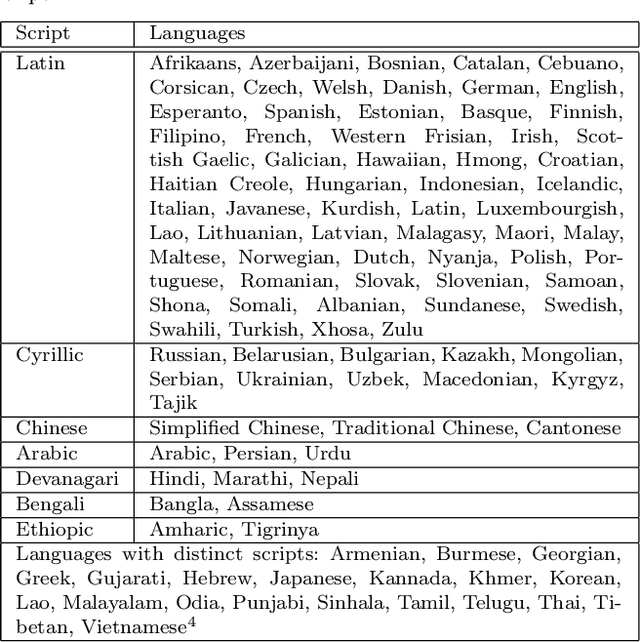

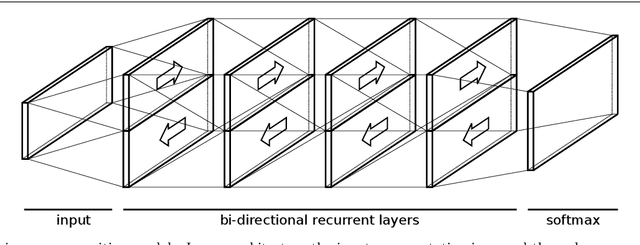

Abstract: We describe an online handwriting system that is able to support 102 languages using a deep neural network architecture. This new system has completely replaced our previous Segment-and-Decode-based system and reduced the error rate by 20%-40% relative for most languages. Further, we report new state-of-the-art results on IAM-OnDB for both the open and closed dataset setting. The system combines methods from sequence recognition with a new input encoding using Bézier curves. This leads to up to 10x faster recognition times compared to our previous system. Through a series of experiments we determine the optimal configuration of our models and report the results of our setup on a number of additional public datasets.