Pawel Mlynarski

Anatomically Consistent Segmentation of Organs at Risk in MRI with Convolutional Neural Networks

Jul 03, 2019

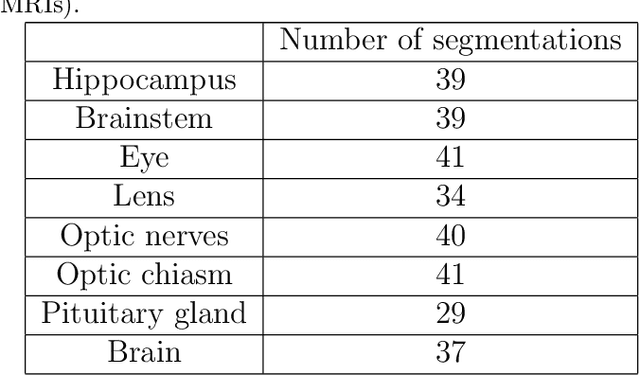

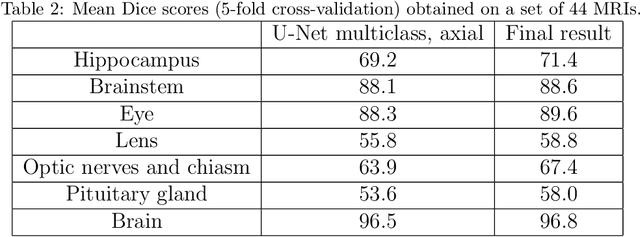

Abstract: Planning of radiotherapy involves accurate segmentation of a large number of organs at risk, i.e. organs for which irradiation doses should be minimized to avoid significant side effects of the therapy. We propose a deep learning method for segmentation of organs at risk inside the brain region from Magnetic Resonance (MR) images. Our system segments eight structures: eye, lens, optic nerve, optic chiasm, pituitary gland, hippocampus, brainstem and brain. We propose an efficient algorithm to train neural networks for end-to-end segmentation of multiple non-exclusive classes, addressing problems related to computational costs and missing ground truth segmentations for a subset of classes. We enforce anatomical consistency of the result in a postprocessing step. In particular, we introduce a graph-based algorithm for segmentation of the optic nerves that enforces connectivity between the eyes and the optic chiasm. We report cross-validated quantitative results on a database of 44 contrast-enhanced T1-weighted MRIs with provided segmentations of the considered organs at risk, which were originally used for radiotherapy planning. In addition, the segmentations produced by our model on an independent test set of 50 MRIs are evaluated by an experienced radiotherapist in order to qualitatively assess their accuracy. The mean distances between produced segmentations and the ground truth ranged from 0.1 mm to 0.7 mm across different organs. The vast majority (96%) of the produced segmentations were found acceptable for radiotherapy planning.
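The abstract does not give the training loss, so the following is only a minimal sketch of one common way to handle non-exclusive classes with missing ground truth: a per-class binary cross-entropy that skips classes without annotations. The function name `masked_bce` and its arguments are hypothetical and are not taken from the paper.

```python
import math

def masked_bce(probs, targets, available):
    """Mean binary cross-entropy over the classes that have ground truth.

    probs[c]     -- predicted probability of class c at one voxel
                    (independent sigmoid outputs, so classes may overlap)
    targets[c]   -- 0 or 1 label for class c (ignored when unavailable)
    available[c] -- True if class c was annotated for this image

    Assumption: this masking scheme is illustrative, not the paper's method.
    """
    eps = 1e-7  # keeps log() away from zero
    total, count = 0.0, 0
    for p, t, ok in zip(probs, targets, available):
        if not ok:
            continue  # no ground truth for this class: contributes nothing
        p = min(max(p, eps), 1.0 - eps)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
        count += 1
    return total / count if count else 0.0

# Third class has no annotation, so its prediction does not affect the loss.
loss = masked_bce([0.9, 0.2, 0.5], [1, 0, 1], [True, True, False])
```

Because each class has its own independent output, a voxel can belong to several structures at once (for example lens and eye), which a softmax over exclusive classes could not express.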

Deep Learning with Mixed Supervision for Brain Tumor Segmentation

Dec 10, 2018

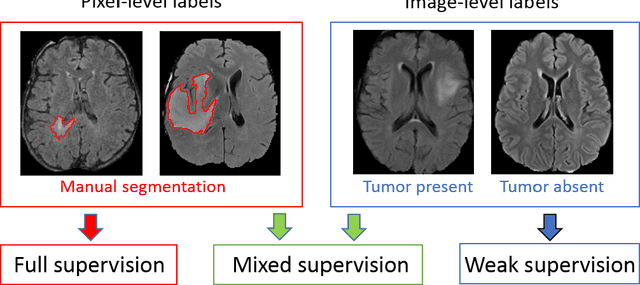

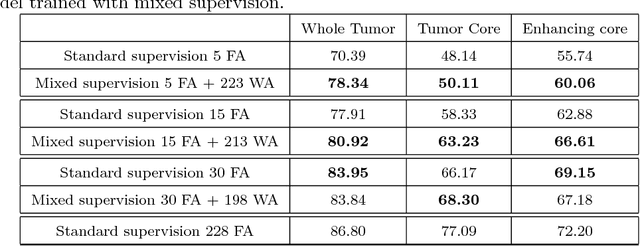

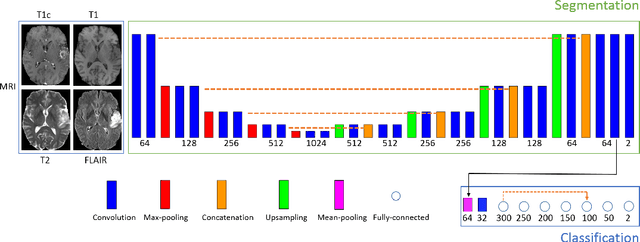

Abstract: Most of the current state-of-the-art methods for tumor segmentation are based on machine learning models trained on manually segmented images. This type of training data is particularly costly, as manual delineation of tumors is not only time-consuming but also requires medical expertise. On the other hand, images with a provided global label (indicating presence or absence of a tumor) are less informative but can be obtained at a substantially lower cost. In this paper, we propose to use both types of training data (fully-annotated and weakly-annotated) to train a deep learning model for segmentation. The idea of our approach is to extend segmentation networks with an additional branch performing image-level classification. The model is jointly trained for segmentation and classification tasks in order to exploit information contained in weakly-annotated images while preventing the network from learning features which are irrelevant for the segmentation task. We evaluate our method on the challenging task of brain tumor segmentation in Magnetic Resonance images from the BRATS 2018 challenge. We show that the proposed approach provides a significant improvement in segmentation performance compared to standard supervised learning. The observed improvement is proportional to the ratio between weakly-annotated and fully-annotated images available for training.
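As a rough illustration of joint training with mixed supervision, the sketch below combines a voxel-level segmentation term with an image-level classification term, and drops the segmentation term for weakly-annotated images. The abstract does not specify the loss, so the weighted sum, the `weight` parameter, and the function names are assumptions made for this example.

```python
import math

def bce(p, t, eps=1e-7):
    """Binary cross-entropy for one prediction p against a 0/1 target t."""
    p = min(max(p, eps), 1.0 - eps)
    return -(t * math.log(p) + (1 - t) * math.log(1.0 - p))

def joint_loss(seg_probs, seg_labels, cls_prob, cls_label, weight=0.5):
    """Segmentation loss plus weighted image-level classification loss.

    seg_labels is None for a weakly-annotated image, which then
    contributes only through the classification branch.
    Assumption: the weighted sum is illustrative, not the paper's formula.
    """
    cls_term = bce(cls_prob, cls_label)
    if seg_labels is None:
        return weight * cls_term
    seg_term = sum(bce(p, t) for p, t in zip(seg_probs, seg_labels)) / len(seg_labels)
    return seg_term + weight * cls_term

# Fully-annotated image: both terms are active.
full = joint_loss([0.8, 0.1], [1, 0], cls_prob=0.9, cls_label=1)
# Weakly-annotated image: only the global tumor/no-tumor label is known.
weak = joint_loss([0.8, 0.1], None, cls_prob=0.9, cls_label=1)
```

In this sketch both branches would share the same feature extractor, so gradients from the classification term on weakly-annotated images still update the features used for segmentation.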

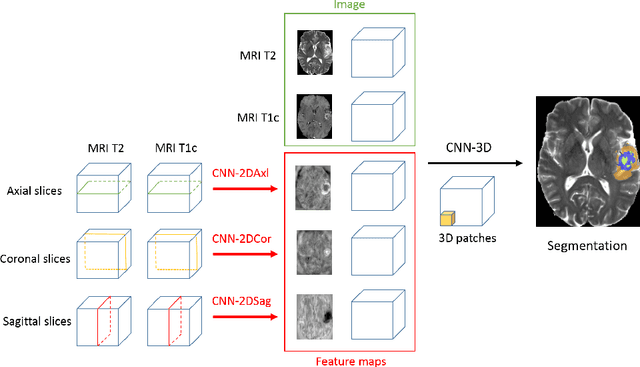

3D Convolutional Neural Networks for Tumor Segmentation using Long-range 2D Context

Jul 23, 2018

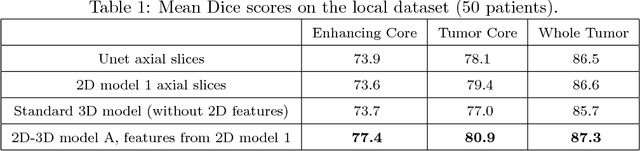

Abstract: We present an efficient deep learning approach for the challenging task of tumor segmentation in multisequence MR images. In recent years, Convolutional Neural Networks (CNNs) have achieved state-of-the-art performance in a large variety of recognition tasks in medical imaging. Because of the considerable computational cost of CNNs, large volumes such as MRI are typically processed by subvolumes, for instance slices (axial, coronal, sagittal) or small 3D patches. In this paper we introduce a CNN-based model which efficiently combines the advantages of the short-range 3D context and the long-range 2D context. To overcome the limitations of specific choices of neural network architectures, we also propose to merge outputs of several cascaded 2D-3D models by a voxelwise voting strategy. Furthermore, we propose a network architecture in which the different MR sequences are processed by separate subnetworks in order to be more robust to the problem of missing MR sequences. Finally, a simple and efficient algorithm for training large CNN models is introduced. We evaluate our method on the public benchmark of the BRATS 2017 challenge on the task of multiclass segmentation of malignant brain tumors. Our method produces accurate segmentations, with median Dice scores of 0.918 (whole tumor), 0.883 (tumor core) and 0.854 (enhancing core). Our approach can be naturally applied to various tasks involving segmentation of lesions or organs.
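The voxelwise voting and the Dice score mentioned above can be illustrated with a small sketch on flattened label volumes. The abstract only names a "voxelwise voting strategy", so plain majority voting and the tie-breaking rule (lowest label wins) are assumptions here, as are the function names.

```python
from collections import Counter

def voxelwise_vote(predictions):
    """Merge label maps from several models by majority vote per voxel.

    predictions -- list of flattened label maps, one per model, equal length.
    Assumption: ties are broken in favor of the lowest label.
    """
    voted = []
    for labels in zip(*predictions):  # labels proposed for one voxel
        counts = Counter(labels)
        best = max(counts.values())
        voted.append(min(l for l, c in counts.items() if c == best))
    return voted

def dice(pred, truth, label):
    """Dice score 2|P∩T| / (|P|+|T|) for a single label."""
    p = [x == label for x in pred]
    t = [x == label for x in truth]
    inter = sum(a and b for a, b in zip(p, t))
    denom = sum(p) + sum(t)
    return 2.0 * inter / denom if denom else 1.0  # both empty: perfect match

# Three hypothetical model outputs over four voxels.
merged = voxelwise_vote([[0, 1, 2, 1], [0, 1, 1, 1], [1, 2, 2, 0]])
score = dice(merged, [0, 1, 1, 1], label=1)
```

Note that the BRATS regions (whole tumor, tumor core, enhancing core) are unions of labels, so computing those scores would require mapping each region to a binary mask before applying the same formula.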
