Pascale Gourdeau

On the Computability of Multiclass PAC Learning

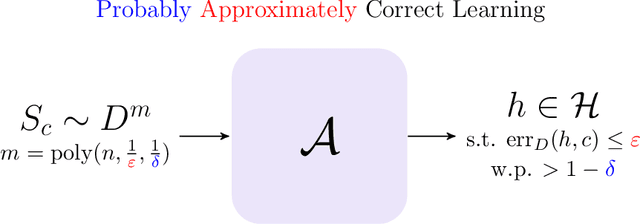

Feb 10, 2025Abstract:We study the problem of computable multiclass learnability within the Probably Approximately Correct (PAC) learning framework of Valiant (1984). In the recently introduced computable PAC (CPAC) learning framework of Agarwal et al. (2020), both learners and the functions they output are required to be computable. We focus on the case of finite label space and start by proposing a computable version of the Natarajan dimension and showing that it characterizes CPAC learnability in this setting. We further generalize this result by establishing a meta-characterization of CPAC learnability for a certain family of dimensions: computable distinguishers. Distinguishers were defined by Ben-David et al. (1992) as a certain family of embeddings of the label space, with each embedding giving rise to a dimension. It was shown that the finiteness of each such dimension characterizes multiclass PAC learnability for finite label space in the non-computable setting. We show that the corresponding computable dimensions for distinguishers characterize CPAC learning. We conclude our analysis by proving that the DS dimension, which characterizes PAC learnability for infinite label space, cannot be expressed as a distinguisher (even in the case of finite label space).

On the Computability of Robust PAC Learning

Jun 14, 2024Abstract:We initiate the study of computability requirements for adversarially robust learning. Adversarially robust PAC-type learnability is by now an established field of research. However, the effects of computability requirements in PAC-type frameworks are only just starting to emerge. We introduce the problem of robust computable PAC (robust CPAC) learning and provide some simple sufficient conditions for this. We then show that learnability in this setup is not implied by the combination of its components: classes that are both CPAC and robustly PAC learnable are not necessarily robustly CPAC learnable. Furthermore, we show that the novel framework exhibits some surprising effects: for robust CPAC learnability it is not required that the robust loss is computably evaluable! Towards understanding characterizing properties, we introduce a novel dimension, the computable robust shattering dimension. We prove that its finiteness is necessary, but not sufficient for robust CPAC learnability. This might yield novel insights for the corresponding phenomenon in the context of robust PAC learnability, where insufficiency of the robust shattering dimension for learnability has been conjectured, but so far a resolution has remained elusive.

Sample Complexity of Robust Learning against Evasion Attacks

Aug 23, 2023

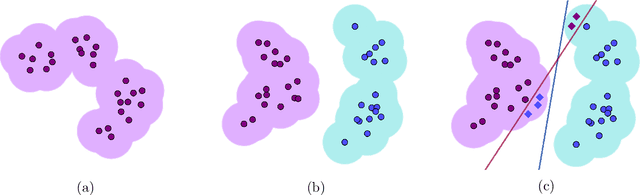

Abstract:It is becoming increasingly important to understand the vulnerability of machine learning models to adversarial attacks. One of the fundamental problems in adversarial machine learning is to quantify how much training data is needed in the presence of evasion attacks, where data is corrupted at test time. In this thesis, we work with the exact-in-the-ball notion of robustness and study the feasibility of adversarially robust learning from the perspective of learning theory, considering sample complexity. We first explore the setting where the learner has access to random examples only, and show that distributional assumptions are essential. We then focus on learning problems with distributions on the input data that satisfy a Lipschitz condition and show that robustly learning monotone conjunctions has sample complexity at least exponential in the adversary's budget (the maximum number of bits it can perturb on each input). However, if the adversary is restricted to perturbing $O(\log n)$ bits, then one can robustly learn conjunctions and decision lists w.r.t. log-Lipschitz distributions. We then study learning models where the learner is given more power. We first consider local membership queries, where the learner can query the label of points near the training sample. We show that, under the uniform distribution, the exponential dependence on the adversary's budget to robustly learn conjunctions remains inevitable. We then introduce a local equivalence query oracle, which returns whether the hypothesis and target concept agree in a given region around a point in the training sample, and a counterexample if it exists. We show that if the query radius is equal to the adversary's budget, we can develop robust empirical risk minimization algorithms in the distribution-free setting. We give general query complexity upper and lower bounds, as well as for concrete concept classes.

When are Local Queries Useful for Robust Learning?

Oct 12, 2022

Abstract:Distributional assumptions have been shown to be necessary for the robust learnability of concept classes when considering the exact-in-the-ball robust risk and access to random examples by Gourdeau et al. (2019). In this paper, we study learning models where the learner is given more power through the use of local queries, and give the first distribution-free algorithms that perform robust empirical risk minimization (ERM) for this notion of robustness. The first learning model we consider uses local membership queries (LMQ), where the learner can query the label of points near the training sample. We show that, under the uniform distribution, LMQs do not increase the robustness threshold of conjunctions and any superclass, e.g., decision lists and halfspaces. Faced with this negative result, we introduce the local equivalence query (LEQ) oracle, which returns whether the hypothesis and target concept agree in the perturbation region around a point in the training sample, as well as a counterexample if it exists. We show a separation result: on one hand, if the query radius $\lambda$ is strictly smaller than the adversary's perturbation budget $\rho$, then distribution-free robust learning is impossible for a wide variety of concept classes; on the other hand, the setting $\lambda=\rho$ allows us to develop robust ERM algorithms. We then bound the query complexity of these algorithms based on online learning guarantees and further improve these bounds for the special case of conjunctions. We finish by giving robust learning algorithms for halfspaces with margins on both $\{0,1\}^n$ and $\mathbb{R}^n$.

Sample Complexity Bounds for Robustly Learning Decision Lists against Evasion Attacks

May 12, 2022Abstract:A fundamental problem in adversarial machine learning is to quantify how much training data is needed in the presence of evasion attacks. In this paper we address this issue within the framework of PAC learning, focusing on the class of decision lists. Given that distributional assumptions are essential in the adversarial setting, we work with probability distributions on the input data that satisfy a Lipschitz condition: nearby points have similar probability. Our key results illustrate that the adversary's budget (that is, the number of bits it can perturb on each input) is a fundamental quantity in determining the sample complexity of robust learning. Our first main result is a sample-complexity lower bound: the class of monotone conjunctions (essentially the simplest non-trivial hypothesis class on the Boolean hypercube) and any superclass has sample complexity at least exponential in the adversary's budget. Our second main result is a corresponding upper bound: for every fixed $k$ the class of $k$-decision lists has polynomial sample complexity against a $\log(n)$-bounded adversary. This sheds further light on the question of whether an efficient PAC learning algorithm can always be used as an efficient $\log(n)$-robust learning algorithm under the uniform distribution.

On the Hardness of Robust Classification

Sep 12, 2019

Abstract:It is becoming increasingly important to understand the vulnerability of machine learning models to adversarial attacks. In this paper we study the feasibility of robust learning from the perspective of computational learning theory, considering both sample and computational complexity. In particular, our definition of robust learnability requires polynomial sample complexity. We start with two negative results. We show that no non-trivial concept class can be robustly learned in the distribution-free setting against an adversary who can perturb just a single input bit. We show moreover that the class of monotone conjunctions cannot be robustly learned under the uniform distribution against an adversary who can perturb $\omega(\log n)$ input bits. However if the adversary is restricted to perturbing $O(\log n)$ bits, then the class of monotone conjunctions can be robustly learned with respect to a general class of distributions (that includes the uniform distribution). Finally, we provide a simple proof of the computational hardness of robust learning on the boolean hypercube. Unlike previous results of this nature, our result does not rely on another computational model (e.g. the statistical query model) nor on any hardness assumption other than the existence of a hard learning problem in the PAC framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge