Parisa Fard Moshiri

Proactive SFC Provisioning with Forecast-Driven DRL in Data Centers

Jan 28, 2026

Abstract:Service Function Chaining (SFC) requires efficient placement of Virtual Network Functions (VNFs) to satisfy diverse service requirements while maintaining high resource utilization in Data Centers (DCs). Conventional static resource allocation often leads to overprovisioning or underprovisioning due to the dynamic nature of traffic loads and application demands. To address this challenge, we propose a hybrid forecast-driven Deep Reinforcement Learning (DRL) framework that combines predictive intelligence with SFC provisioning. Specifically, we leverage DRL to generate datasets capturing DC resource utilization and service demands, which are then used to train deep learning forecasting models. Using Optuna-based hyperparameter optimization, the best-performing models (Spatio-Temporal Graph Neural Network, Temporal Graph Neural Network, and Long Short-Term Memory) are combined into an ensemble to enhance stability and accuracy. The ensemble predictions are integrated into the DC selection process, enabling proactive placement decisions that consider both current and future resource availability. Experimental results demonstrate that the proposed method not only sustains high acceptance ratios for resource-intensive services such as Cloud Gaming and VoIP but also significantly improves acceptance ratios for latency-critical categories: Augmented Reality increases from 30% to 50%, while Industry 4.0 improves from 30% to 45%. Consequently, the prediction-based model reduces end-to-end (E2E) latency by 20.5%, 23.8%, and 34.8% for VoIP, Video Streaming, and Cloud Gaming, respectively. This strategy ensures more balanced resource allocation and reduces contention.
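The idea of a DC selection step that weighs both current and forecast availability can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the function names `ensemble_forecast` and `select_dc`, the use of a plain average to combine the three model forecasts, and the conservative "worse of current and predicted load" rule are not taken from the paper.

```python
from statistics import mean


def ensemble_forecast(predictions):
    """Average per-model forecasts of a DC's future utilization (0..1).

    A plain mean is an assumption; the paper's ensemble rule is not given here.
    """
    return mean(predictions)


def select_dc(dcs, demand):
    """Pick the DC with the most headroom after accounting for forecast load.

    dcs: dict name -> (capacity, current_utilization, [model forecasts])
    demand: resource units requested by the SFC
    Returns the chosen DC name, or None if no DC can host the demand.
    """
    best_name, best_headroom = None, -1.0
    for name, (capacity, current_util, forecasts) in dcs.items():
        future_util = ensemble_forecast(forecasts)
        # Hypothetical rule: plan against the worse of current and predicted load.
        worst_util = max(current_util, future_util)
        headroom = capacity * (1.0 - worst_util) - demand
        if headroom >= 0 and headroom > best_headroom:
            best_name, best_headroom = name, headroom
    return best_name


dcs = {
    "dc_a": (100.0, 0.40, [0.85, 0.90, 0.80]),  # lightly loaded now, predicted busy
    "dc_b": (100.0, 0.55, [0.50, 0.60, 0.55]),  # steadier load
}
chosen = select_dc(dcs, demand=20.0)
print(chosen)
```

A purely reactive policy would favor `dc_a` because it is less loaded right now; the forecast-aware rule in this sketch avoids it because its predicted utilization leaves too little room for the request.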

Structure-Aware NL-to-SQL for SFC Provisioning via AST-Masking Empowered Language Models

Jan 24, 2026

Abstract:Effective Service Function Chain (SFC) provisioning requires precise orchestration in dynamic and latency-sensitive networks. Reinforcement Learning (RL) improves adaptability but often ignores structured domain knowledge, which limits generalization and interpretability. Large Language Models (LLMs) address this gap by translating natural language (NL) specifications into executable Structured Query Language (SQL) commands for specification-driven SFC management. Conventional fine-tuning, however, can cause syntactic inconsistencies and produce inefficient queries. To overcome this, we introduce Abstract Syntax Tree (AST)-Masking, a structure-aware fine-tuning method that uses SQL ASTs to assign weights to key components and enforce syntax-aware learning without adding inference overhead. Experiments show that AST-Masking significantly improves SQL generation accuracy across multiple language models. FLAN-T5 reaches an Execution Accuracy (EA) of 99.6%, while Gemma achieves the largest absolute gain, rising from 7.5% to 72.0%. These results confirm the effectiveness of structure-aware fine-tuning in ensuring syntactically correct and efficient SQL generation for interpretable SFC orchestration.
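The notion of weighting key SQL components during fine-tuning can be sketched as a weighted token-level loss. This is only an illustration under stated assumptions: a fixed keyword set stands in for a real SQL AST, and the names `STRUCTURAL`, `token_weights`, and `weighted_nll` as well as the weight values are hypothetical, not the paper's implementation.

```python
import math

# Hypothetical stand-in for AST-derived structure: a real system would parse
# the query into an AST; here a keyword set marks the "key components".
STRUCTURAL = {"SELECT", "FROM", "WHERE", "JOIN", "ON", "GROUP", "BY", "ORDER", "AND", "OR"}


def token_weights(tokens, structural_weight=2.0, default_weight=1.0):
    """Give structural SQL tokens a larger loss weight than other tokens."""
    return [structural_weight if t.upper() in STRUCTURAL else default_weight
            for t in tokens]


def weighted_nll(token_probs, weights):
    """Weighted mean negative log-likelihood over the target tokens."""
    total = sum(w * -math.log(p) for p, w in zip(token_probs, weights))
    return total / sum(weights)


tokens = ["SELECT", "name", "FROM", "vnf", "WHERE", "cpu", ">", "4"]
weights = token_weights(tokens)

# Same-sized mistake (probability 0.5 instead of 0.9) on different tokens.
probs_keyword_error = [0.5, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9]  # unsure about SELECT
probs_literal_error = [0.9, 0.5, 0.9, 0.9, 0.9, 0.9, 0.9, 0.9]  # unsure about "name"

loss_keyword = weighted_nll(probs_keyword_error, weights)
loss_literal = weighted_nll(probs_literal_error, weights)
print(loss_keyword > loss_literal)
```

Because the weights only change the training loss, a scheme of this kind leaves the model and its decoding untouched, which is consistent with the abstract's claim of no added inference overhead.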

Partitioned Task Offloading for Low-Latency and Reliable Task Completion in 5G MEC

Mar 25, 2025

Abstract:The demand for Multi-Access Edge Computing (MEC) has increased with the rise of data-intensive applications and 5G networks, while conventional cloud models struggle to satisfy low-latency requirements. Although task offloading is crucial for minimizing latency on resource-constrained User Equipment (UE), fully offloading all tasks to MEC servers may result in overload and possible task drops. Overlooking the number of dropped tasks can significantly undermine system efficiency, as each dropped task results in unfulfilled service demands and reduced reliability, directly impacting user experience and overall network performance. In this paper, we employ task partitioning, which allows part of each task to be processed locally while the rest is assigned to MEC, thus balancing the load and ensuring no task drops. We optimize the partitioning with Mixed Integer Linear Programming (MILP) and Cuckoo Search, yielding effective task assignment and minimal latency. Moreover, we ensure each user's Resource Block (RB) allocation stays within the maximum limit while keeping latency low. Experimental results indicate that this strategy surpasses both full offloading and full local processing, providing significant improvements in latency and task completion rates across varying numbers of users. In our scenario, MILP task partitioning results in a 24% reduction in latency compared to MILP task offloading for the maximum number of users, whereas Cuckoo Search task partitioning yields an 18% latency reduction compared with Cuckoo Search task offloading.
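Why splitting a task can beat both extremes is easy to see in a toy latency model. The sketch below assumes that the local and offloaded parts run in parallel, so the task finishes when the slower part finishes; this model, the function names, and all numeric values are assumptions for illustration. It uses a simple grid search rather than MILP or Cuckoo Search and ignores RB limits.

```python
def partition_latency(cycles, bits, local_fraction, ue_hz, mec_hz, uplink_bps):
    """Completion time when `local_fraction` of the task stays on the UE.

    Assumed model: the remainder is uploaded and then executed on the MEC
    server while the UE works on its share in parallel.
    """
    local_time = local_fraction * cycles / ue_hz
    offloaded = 1.0 - local_fraction
    remote_time = offloaded * bits / uplink_bps + offloaded * cycles / mec_hz
    return max(local_time, remote_time)


def best_fraction(cycles, bits, ue_hz, mec_hz, uplink_bps, steps=100):
    """Grid-search the local fraction that minimizes completion time."""
    candidates = [i / steps for i in range(steps + 1)]
    return min(
        candidates,
        key=lambda f: partition_latency(cycles, bits, f, ue_hz, mec_hz, uplink_bps),
    )


# Hypothetical task and platform parameters.
params = dict(cycles=1e9, bits=8e6, ue_hz=1e9, mec_hz=10e9, uplink_bps=20e6)

full_local = partition_latency(local_fraction=1.0, **params)
full_offload = partition_latency(local_fraction=0.0, **params)
f_star = best_fraction(**params)
partitioned = partition_latency(local_fraction=f_star, **params)
print(full_local, full_offload, f_star, partitioned)
```

With these made-up numbers, keeping roughly a third of the work on the UE finishes sooner than either full local processing or full offloading, which is the qualitative effect the abstract reports.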

On the Interplay Between Network Metrics and Performance of Mobile Edge Offloading

Jan 23, 2024

Abstract:Multi-Access Edge Computing (MEC) has emerged as a viable computing allocation method that facilitates offloading tasks to edge servers for efficient processing. The integration of MEC with 5G, referred to as 5G-MEC, provides real-time processing and data-driven decision-making in close proximity to the user. 5G-MEC has gained significant recognition in task offloading as an essential tool for applications that require low delay. Nevertheless, few studies consider the dropped task ratio metric, and disregarding it can undermine system efficiency. In this paper, we minimize the dropped task ratio and delay in a realistic 5G-MEC task offloading scenario implemented in NS3. We utilize Mixed Integer Linear Programming (MILP) and a Genetic Algorithm (GA) to optimize delay and dropped task ratio, and we examine the effect of the number of tasks and users on both metrics. Compared to two traditional offloading schemes, First Come First Serve (FCFS) and Shortest Task First (STF), our proposed method performs effectively in the 5G-MEC task offloading scenario. Compared to GA, MILP reduces the dropped task ratio by 20% and the delay by 2 ms.
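To make the dropped task ratio metric concrete, the sketch below compares the two baseline orderings named in the abstract, FCFS and STF, on a single server. The setup is an assumption for illustration: all tasks arrive at time 0, each has a processing time and a deadline, and a task that would finish after its deadline is dropped without being run. The task values are made up and this is not the paper's NS3 scenario.

```python
def dropped_task_ratio(tasks, order_key=None):
    """Fraction of tasks dropped when served one at a time on a single server.

    tasks: list of (processing_time, deadline) tuples, all arriving at time 0.
    order_key: optional sort key; None keeps arrival order (FCFS).
    """
    queue = sorted(tasks, key=order_key) if order_key else list(tasks)
    clock, dropped = 0.0, 0
    for proc_time, deadline in queue:
        if clock + proc_time > deadline:
            dropped += 1          # would miss its deadline: drop, do not run
        else:
            clock += proc_time    # run the task to completion
    return dropped / len(queue)


# Hypothetical workload: one long task arrives first, followed by short ones.
tasks = [(5.0, 6.0), (1.0, 3.0), (2.0, 4.0), (1.0, 5.0)]

fcfs = dropped_task_ratio(tasks)                            # arrival order
stf = dropped_task_ratio(tasks, order_key=lambda t: t[0])   # shortest first
print(fcfs, stf)
```

In this toy workload the long first task blocks the queue under FCFS, so three of four tasks miss their deadlines, while STF drops only the long task. The ordering policy alone changes the ratio, which is why the metric is worth optimizing alongside delay.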

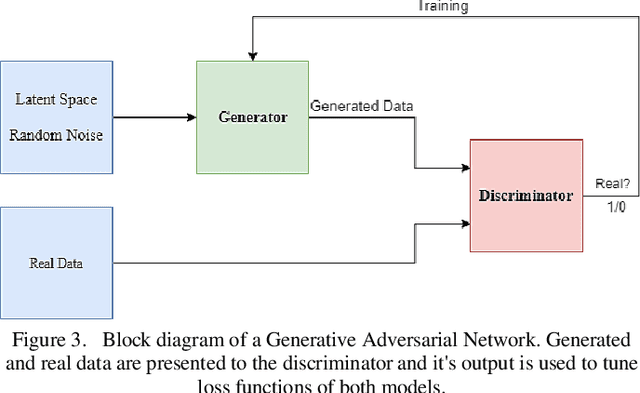

Generative Adversarial Networks (GANs) in Networking: A Comprehensive Survey & Evaluation

May 10, 2021

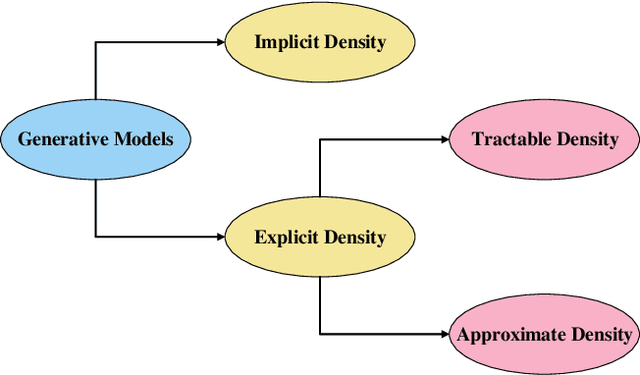

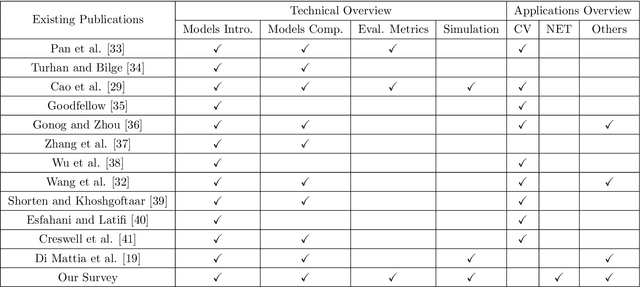

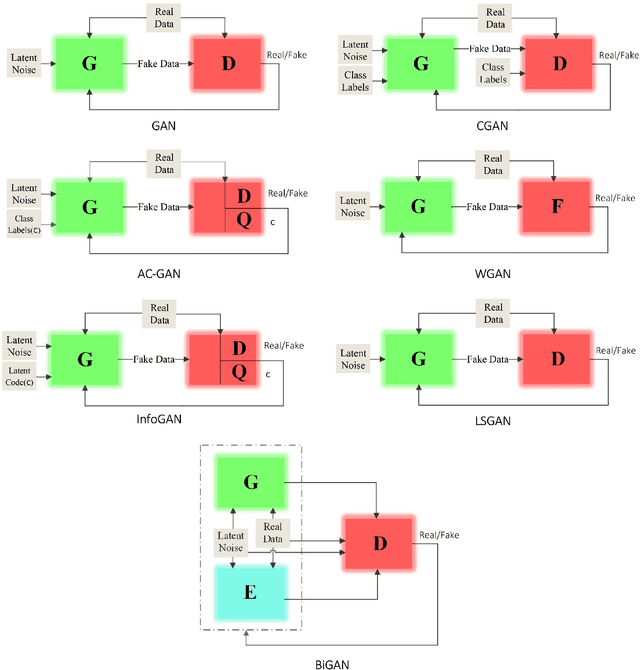

Abstract:Despite the recency of their conception, Generative Adversarial Networks (GANs) constitute an extensively researched machine learning sub-field for the creation of synthetic data through deep generative modeling. GANs have consequently been applied in a number of domains, most notably computer vision, in which they are typically used to generate or transform synthetic images. Given their relative ease of use, it is therefore natural that researchers in the field of networking (which has seen extensive application of deep learning methods) should take an interest in GAN-based approaches. The need for a comprehensive survey of such activity is therefore urgent. In this paper, we demonstrate how this branch of machine learning can benefit multiple aspects of computer and communication networks, including mobile networks, network analysis, internet of things, physical layer, and cybersecurity. In doing so, we shall provide a novel evaluation framework for comparing the performance of different models in non-image applications, applying this to a number of reference network datasets.

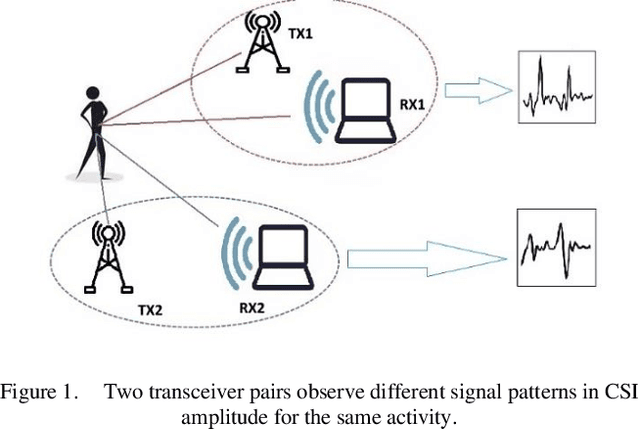

Using GAN to Enhance the Accuracy of Indoor Human Activity Recognition

Apr 23, 2020

Abstract:Indoor human activity recognition (HAR) explores the correlation between human body movements and the reflected WiFi signals to classify different activities. By analyzing WiFi signal patterns, especially the dynamics of channel state information (CSI), different activities can be distinguished. Gathering CSI data is expensive in terms of both time and equipment. In this paper, we use synthetic data to reduce the need for real measured CSI. We present a semi-supervised learning method for CSI-based activity recognition systems in which long short-term memory (LSTM) is employed to learn features and recognize seven different actions. We apply principal component analysis (PCA) to CSI amplitude data, while the short-time Fourier transform (STFT) extracts features in the frequency domain. We first train the LSTM network entirely on raw CSI data, which requires considerably more processing time. To reduce this cost, we then generate data using 50% of the raw data in conjunction with a generative adversarial network (GAN). Our experimental results confirm that this model can increase classification accuracy by 3.4% and reduce the log loss by almost 16% in the considered scenario.
