Param Vir Singh

Algorithmic Transparency with Strategic Users

Aug 21, 2020

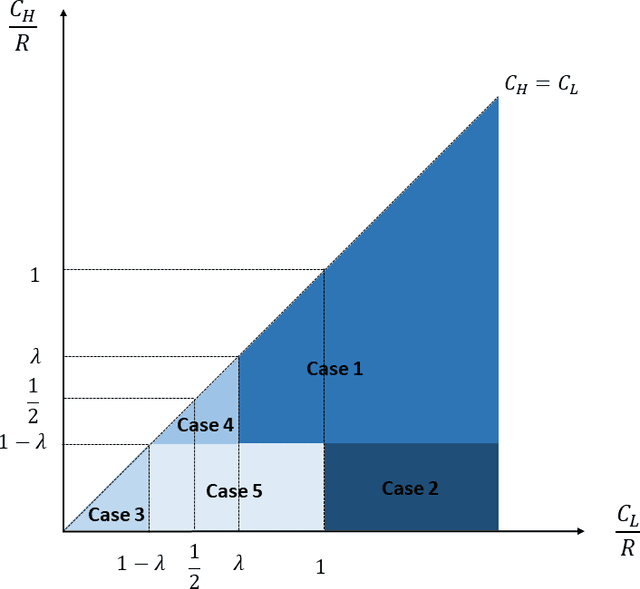

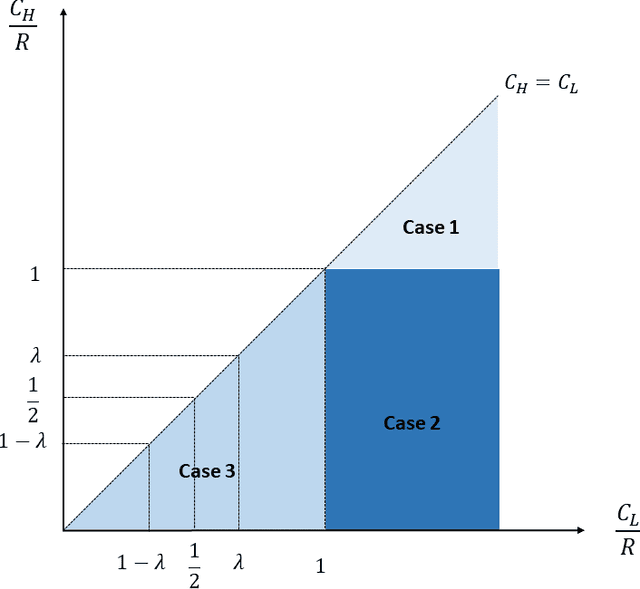

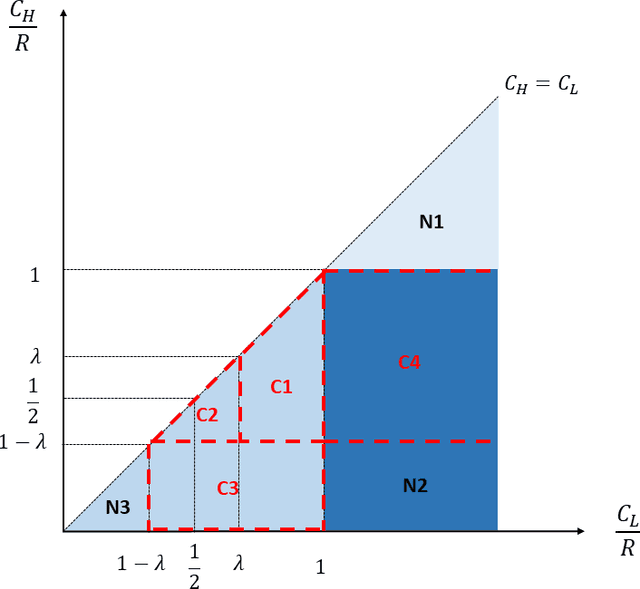

Abstract: Should firms that apply machine learning algorithms in their decision-making make their algorithms transparent to the users they affect? Despite growing calls for algorithmic transparency, most firms have kept their algorithms opaque, citing potential gaming by users that may negatively affect the algorithm's predictive power. We develop an analytical model to compare firm and user surplus with and without algorithmic transparency in the presence of strategic users and present novel insights. We identify a broad set of conditions under which making the algorithm transparent benefits the firm. We show that, in some cases, even the predictive power of machine learning algorithms may increase if the firm makes them transparent. By contrast, users may not always be better off under algorithmic transparency. The results hold even when the predictive power of the opaque algorithm comes largely from correlational features and the cost for users to improve on them is close to zero. Overall, our results show that firms should not view manipulation by users as bad. Rather, they should use algorithmic transparency as a lever to motivate users to invest in more desirable features.

Crowd, Lending, Machine, and Bias

Jul 20, 2020

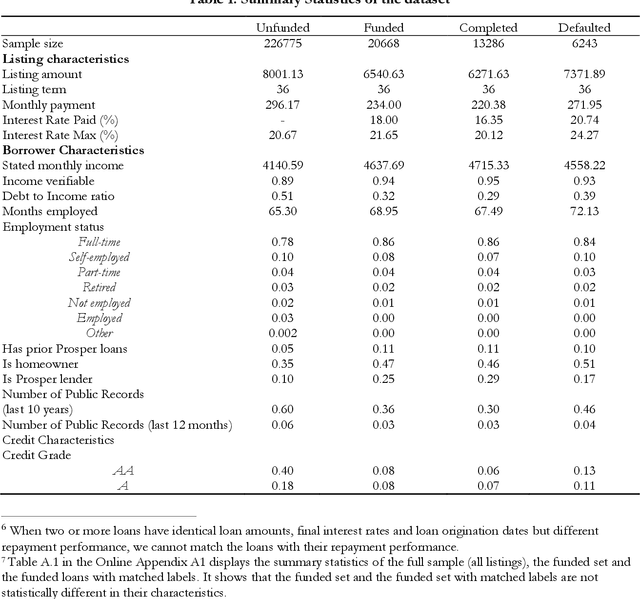

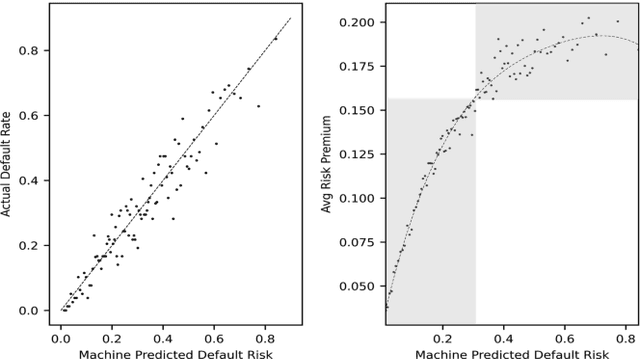

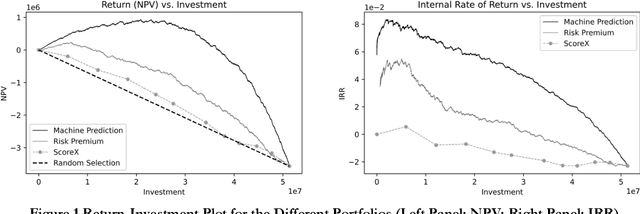

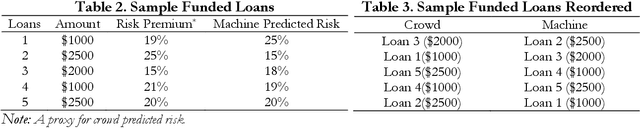

Abstract: Big data and machine learning (ML) algorithms are key drivers of many fintech innovations. While it may be obvious that replacing humans with machines would increase efficiency, it is not clear whether and where machines can make better decisions than humans. We answer this question in the context of crowd lending, where decisions are traditionally made by a crowd of investors. Using data from Prosper.com, we show that a reasonably sophisticated ML algorithm predicts listing default probability more accurately than crowd investors. The dominance of the machine over the crowd is more pronounced for highly risky listings. We then use the machine to make investment decisions, and find that the machine benefits not only the lenders but also the borrowers. When machine prediction is used to select loans, it leads to a higher rate of return for investors and more funding opportunities for borrowers with few alternative funding options. We also find suggestive evidence that the machine is biased in gender and race even when it does not use gender and race information as input. We propose a general and effective "debiasing" method that can be applied to any prediction-focused ML application, and demonstrate its use in our context. We show that the debiased ML algorithm, which suffers from lower prediction accuracy, still leads to better investment decisions compared with the crowd. These results indicate that ML can help crowd lending platforms better fulfill the promise of providing access to financial resources to otherwise underserved individuals and ensure fairness in the allocation of these resources.
