Pamely Zantou

HumekaFL: Automated Detection of Neonatal Asphyxia Using Federated Learning

Dec 02, 2024

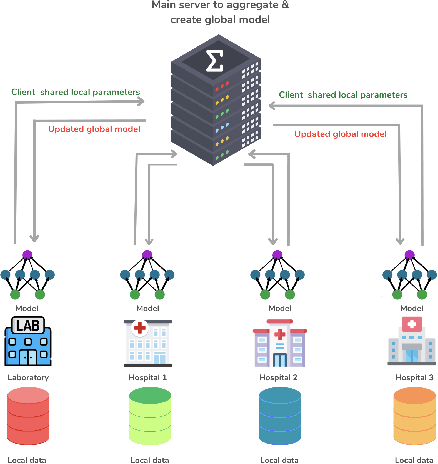

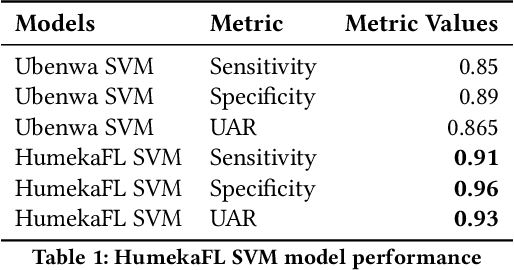

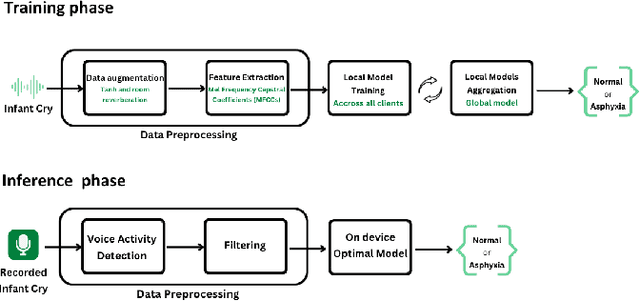

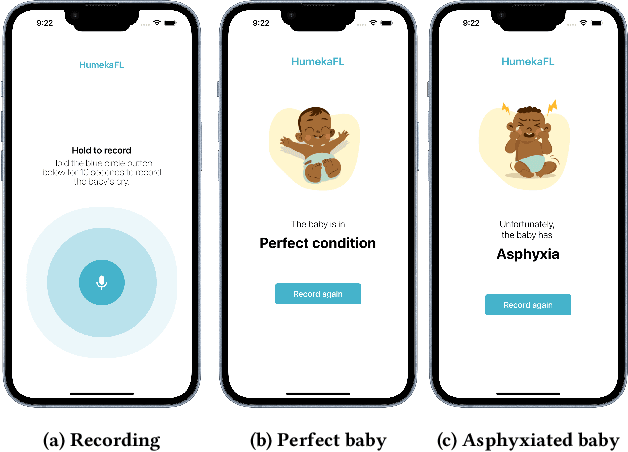

Abstract:Birth Apshyxia (BA) is a severe condition characterized by insufficient supply of oxygen to a newborn during the delivery. BA is one of the primary causes of neonatal death in the world. Although there has been a decline in neonatal deaths over the past two decades, the developing world, particularly sub-Saharan Africa, continues to experience the highest under-five (<5) mortality rates. While evidence-based methods are commonly used to detect BA in African healthcare settings, they can be subject to physician errors or delays in diagnosis, preventing timely interventions. Centralized Machine Learning (ML) methods demonstrated good performance in early detection of BA but require sensitive health data to leave their premises before training, which does not guarantee privacy and security. Healthcare institutions are therefore reluctant to adopt such solutions in Africa. To address this challenge, we suggest a federated learning (FL)-based software architecture, a distributed learning method that prioritizes privacy and security by design. We have developed a user-friendly and cost-effective mobile application embedding the FL pipeline for early detection of BA. Our Federated SVM model outperformed centralized SVM pipelines and Neural Networks (NN)-based methods in the existing literature

FonMTL: Towards Multitask Learning for the Fon Language

Sep 11, 2023

Abstract:The Fon language, spoken by an average 2 million of people, is a truly low-resourced African language, with a limited online presence, and existing datasets (just to name but a few). Multitask learning is a learning paradigm that aims to improve the generalization capacity of a model by sharing knowledge across different but related tasks: this could be prevalent in very data-scarce scenarios. In this paper, we present the first explorative approach to multitask learning, for model capabilities enhancement in Natural Language Processing for the Fon language. Specifically, we explore the tasks of Named Entity Recognition (NER) and Part of Speech Tagging (POS) for Fon. We leverage two language model heads as encoders to build shared representations for the inputs, and we use linear layers blocks for classification relative to each task. Our results on the NER and POS tasks for Fon, show competitive (or better) performances compared to several multilingual pretrained language models finetuned on single tasks. Additionally, we perform a few ablation studies to leverage the efficiency of two different loss combination strategies and find out that the equal loss weighting approach works best in our case. Our code is open-sourced at https://github.com/bonaventuredossou/multitask_fon.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge