Pak Kin Wong

Ultrasound Report Generation with Multimodal Large Language Models for Standardized Texts

May 13, 2025

Abstract: Ultrasound (US) report generation is a challenging task due to the variability of US images, operator dependence, and the need for standardized text. Unlike X-ray and CT, US imaging lacks consistent datasets, making automation difficult. In this study, we propose a unified framework for multi-organ and multilingual US report generation, integrating fragment-based multilingual training and leveraging the standardized nature of US reports. By aligning modular text fragments with diverse imaging data and curating a bilingual English-Chinese dataset, the method achieves consistent and clinically accurate text generation across organ sites and languages. Fine-tuning with selective unfreezing of the vision transformer (ViT) further improves text-image alignment. Compared to the previous state-of-the-art KMVE method, our approach achieves relative gains of about 2% in BLEU scores, approximately 3% in ROUGE-L, and about 15% in CIDEr, while significantly reducing errors such as missing or incorrect content. By unifying multi-organ and multi-language report generation into a single, scalable framework, this work demonstrates strong potential for real-world clinical workflows.

Broad Critic Deep Actor Reinforcement Learning for Continuous Control

Nov 24, 2024

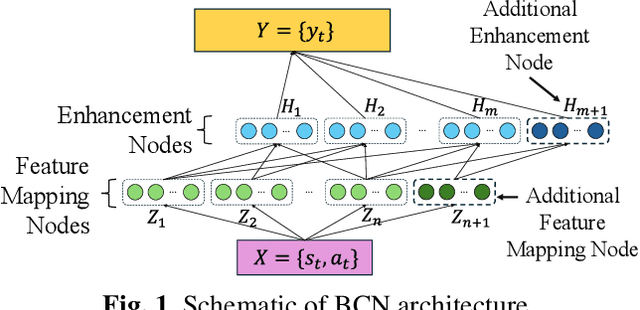

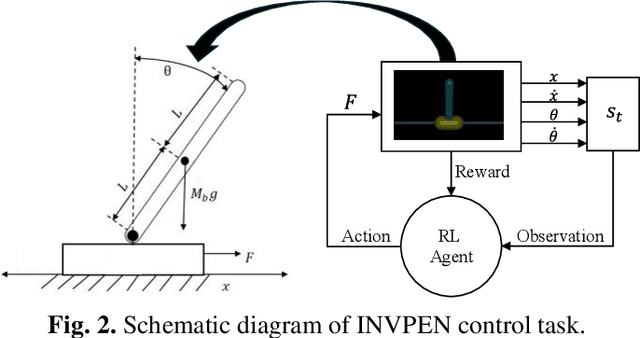

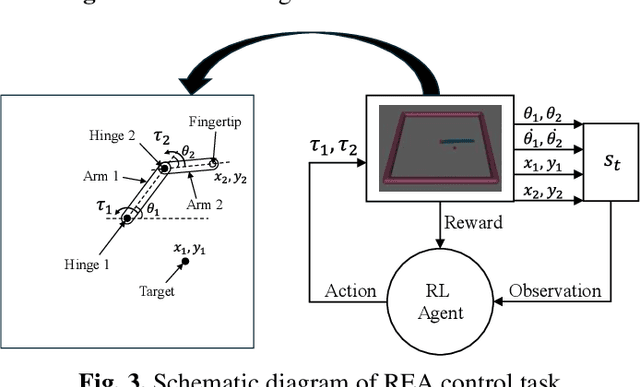

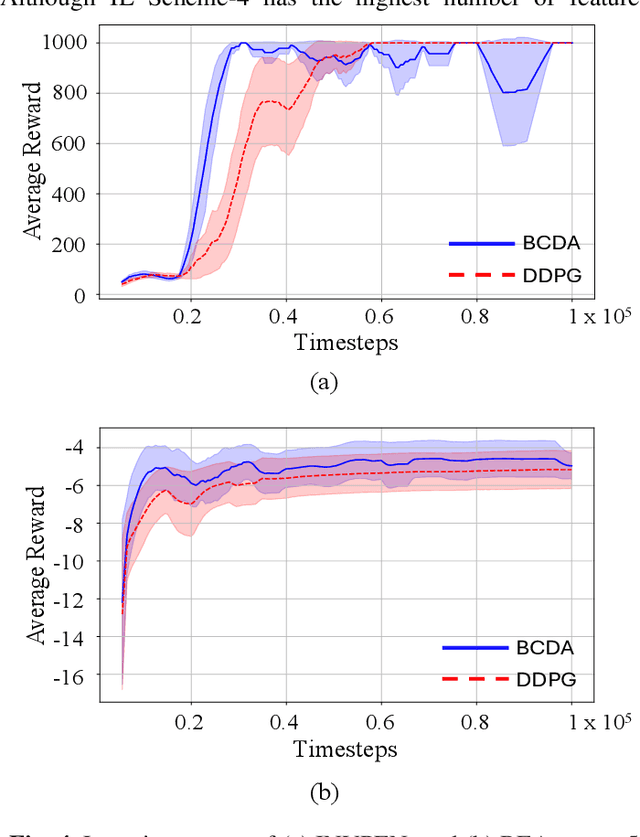

Abstract: In the domain of continuous control, deep reinforcement learning (DRL) demonstrates promising results. However, the dependence of DRL on deep neural networks (DNNs) results in a demand for extensive data and increased computational complexity. To address this issue, a novel hybrid architecture for actor-critic reinforcement learning (RL) algorithms is introduced. The proposed architecture integrates the broad learning system (BLS) with a DNN, aiming to merge the strengths of both architectural paradigms. Specifically, the critic network is implemented using BLS, while the actor network is constructed with a DNN. The critic network parameters are estimated by ridge regression, and the actor network parameters are optimized through gradient descent. The effectiveness of the proposed algorithm is evaluated by applying it to two classic continuous control tasks, and its performance is compared with the widely recognized deep deterministic policy gradient (DDPG) algorithm. Numerical results show that the proposed algorithm is superior to the DDPG algorithm in terms of computational efficiency, along with an accelerated learning trajectory. Application of the proposed architecture to other actor-critic RL algorithms is suggested for investigation in future studies.
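To make the critic update concrete, here is a minimal sketch of a closed-form ridge regression fit over BLS-style features. It is not the authors' implementation: the abstract only states that the critic uses BLS and that its parameters are estimated by ridge regression. The feature construction (fixed random weights, `tanh` enhancement nodes), the layer sizes, the regularization strength `lam`, and the random placeholder targets are all assumptions chosen for illustration.

```python
import numpy as np

def bls_features(X, W_feat, b_feat, W_enh, b_enh):
    """Assumed BLS-style mapping: linear feature nodes plus tanh enhancement nodes."""
    Z = X @ W_feat + b_feat          # feature nodes (fixed random projection)
    H = np.tanh(Z @ W_enh + b_enh)   # enhancement nodes built on the feature nodes
    return np.hstack([Z, H])         # broad layer: concatenate both node groups

def ridge_solve(A, y, lam=1e-2):
    """Closed-form ridge regression: w = (A^T A + lam * I)^{-1} A^T y."""
    n = A.shape[1]
    return np.linalg.solve(A.T @ A + lam * np.eye(n), A.T @ y)

rng = np.random.default_rng(0)
state_dim, action_dim = 3, 1   # hypothetical task dimensions
n_feat, n_enh = 8, 16          # hypothetical node counts

# Hidden weights are drawn once and kept fixed; only the output weights are fit.
W_feat = rng.normal(size=(state_dim + action_dim, n_feat))
b_feat = rng.normal(size=n_feat)
W_enh = rng.normal(size=(n_feat, n_enh))
b_enh = rng.normal(size=n_enh)

# Toy batch of (state, action) inputs with placeholder regression targets.
# In an actor-critic loop these targets would be TD targets; here they are random.
X = rng.normal(size=(256, state_dim + action_dim))
targets = rng.normal(size=256)

A = bls_features(X, W_feat, b_feat, W_enh, b_enh)
w_out = ridge_solve(A, targets)   # critic output weights, no gradient steps needed
q_values = A @ w_out              # critic estimates for the batch
```

Because the output weights come from a single linear solve rather than many gradient steps, a critic of this form can be refit cheaply on each batch, which is consistent with the computational-efficiency claim in the abstract. The actor would still be trained by gradient descent, which this sketch does not cover.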
