P. M. Krafft

Bias amplification in experimental social networks is reduced by resampling

Aug 15, 2022

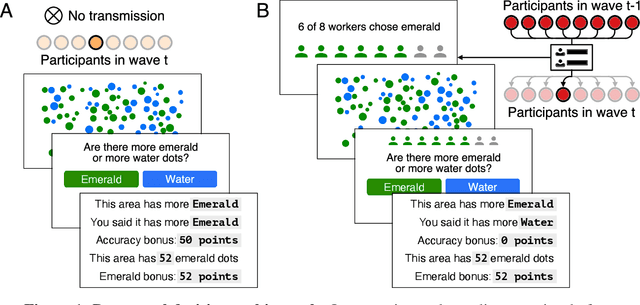

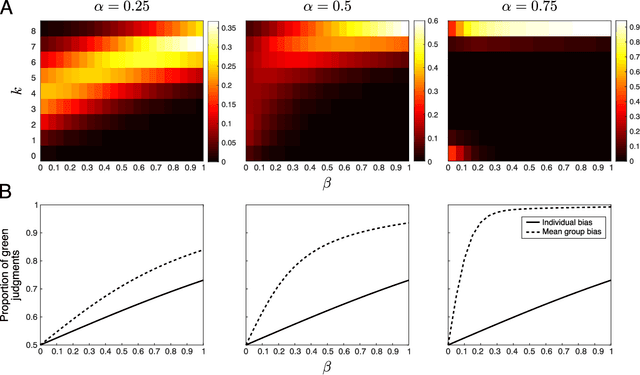

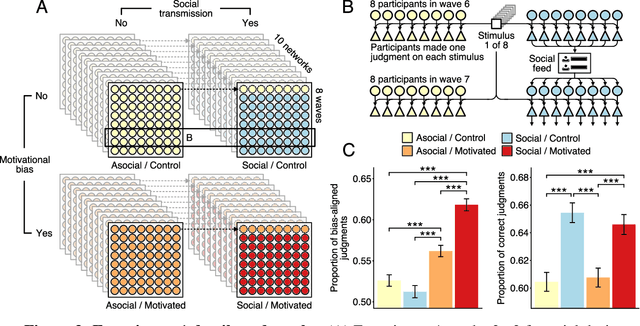

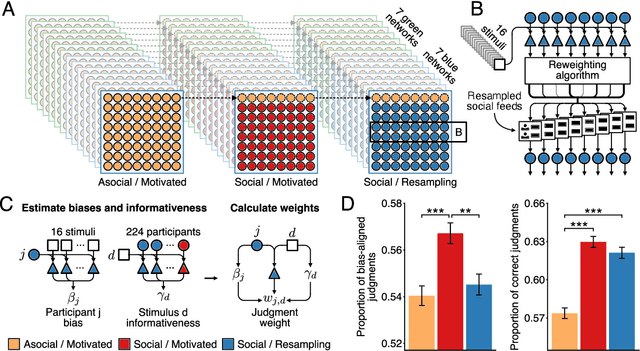

Abstract: Large-scale social networks are thought to contribute to polarization by amplifying people's biases. However, the complexity of these technologies makes it difficult to identify the mechanisms responsible and to evaluate mitigation strategies. Here we show under controlled laboratory conditions that information transmission through social networks amplifies motivational biases on a simple perceptual decision-making task. Participants in a large behavioral experiment showed increased rates of biased decision-making when part of a social network relative to asocial participants, across 40 independently evolving populations. Drawing on techniques from machine learning and Bayesian statistics, we identify a simple adjustment to content-selection algorithms that is predicted to mitigate bias amplification. This algorithm generates a sample of perspectives from within an individual's network that is more representative of the population as a whole. In a second large experiment, this strategy reduced bias amplification while maintaining the benefits of information sharing.

Defining AI in Policy versus Practice

Dec 23, 2019

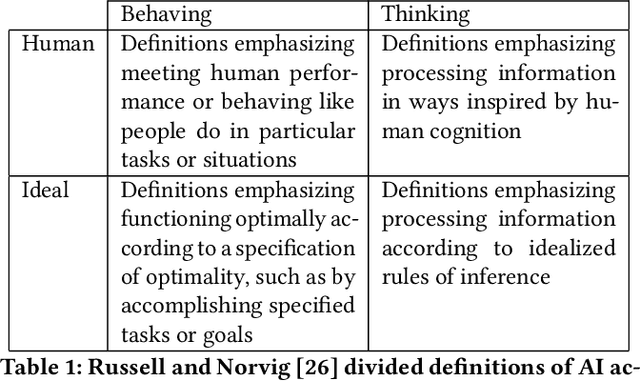

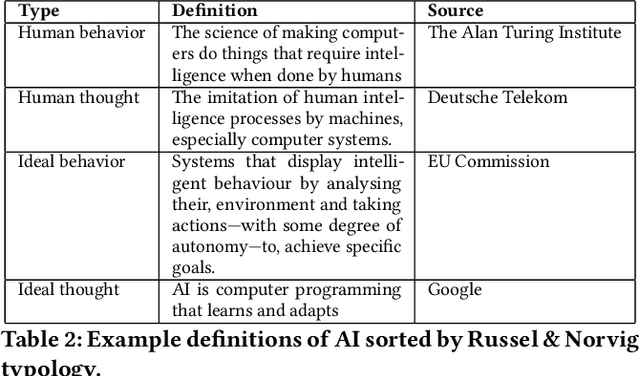

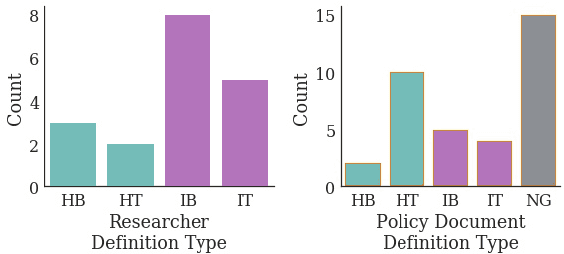

Abstract: Recent concerns about the harms of information technologies motivate consideration of regulatory action to forestall or constrain certain developments in the field of artificial intelligence (AI). However, definitional ambiguity hampers the possibility of conversation about this urgent topic of public concern. Legal and regulatory interventions require agreed-upon definitions, but consensus around a definition of AI has been elusive, especially in policy conversations. With an eye towards practical working definitions and a broader understanding of positions on these issues, we survey experts and review published policy documents to examine researcher and policy-maker conceptions of AI. We find that while AI researchers favor definitions of AI that emphasize technical functionality, policy-makers instead use definitions that compare systems to human thinking and behavior. We point out that definitions adhering closely to the functionality of AI systems are more inclusive of technologies in use today, whereas definitions that emphasize human-like capabilities are most applicable to hypothetical future technologies. As a result of this gap, ethical and regulatory efforts may overemphasize concern about future technologies at the expense of pressing issues with existing deployed technologies.

An Algorithmic Equity Toolkit for Technology Audits by Community Advocates and Activists

Dec 06, 2019

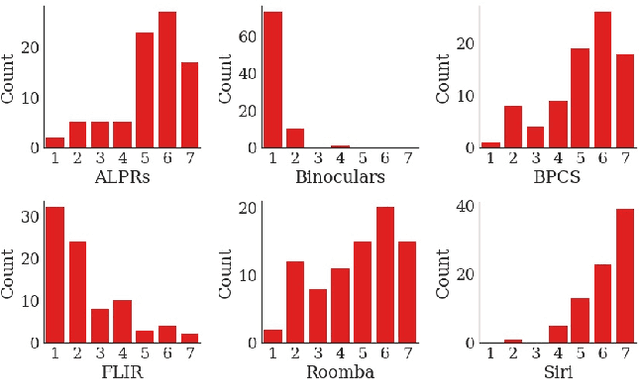

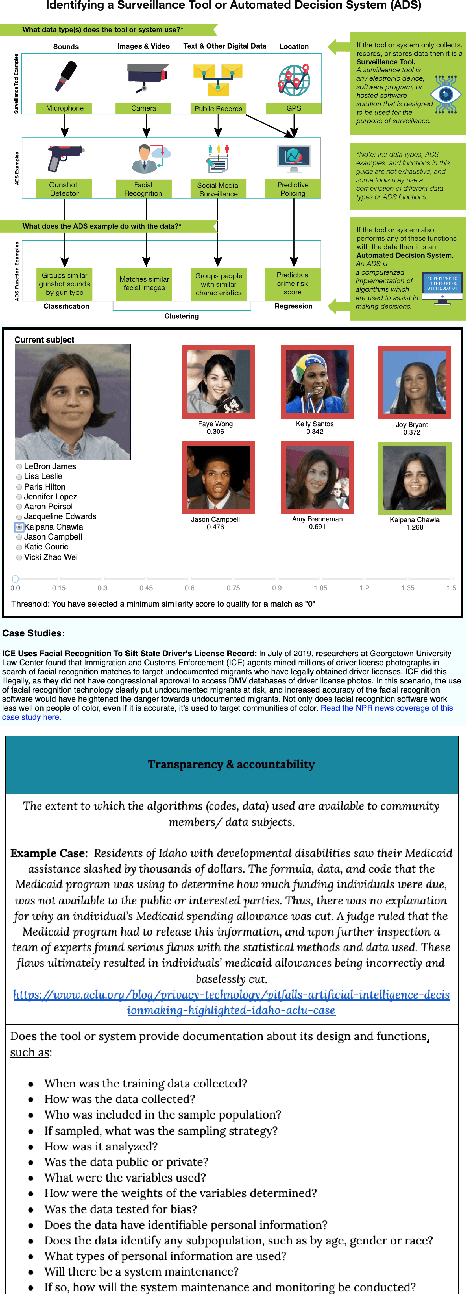

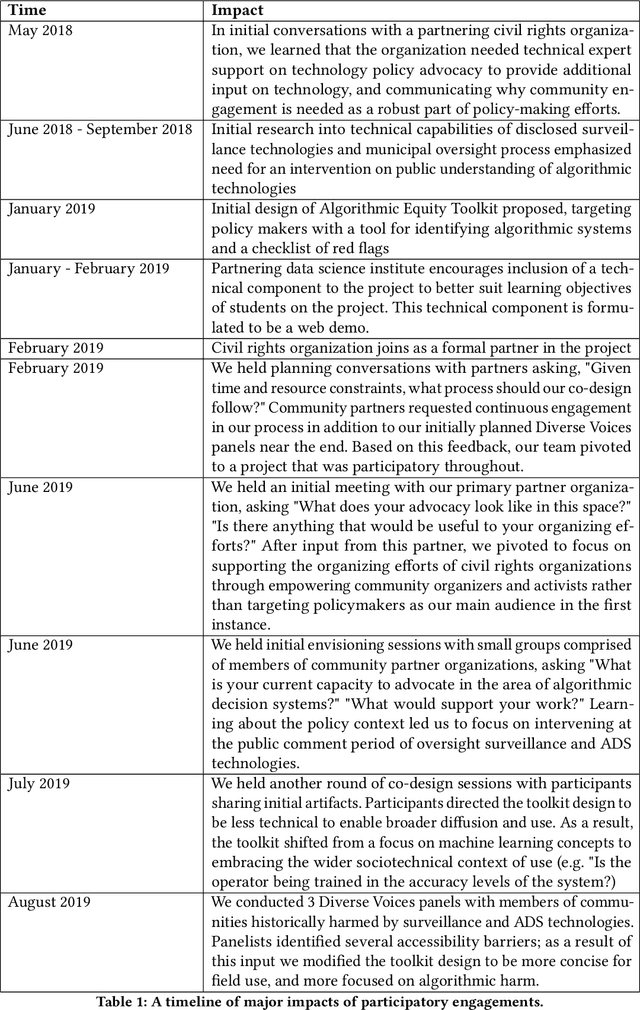

Abstract: A wave of recent scholarship documenting the discriminatory harms of algorithmic systems has spurred widespread interest in algorithmic accountability and regulation. Yet effective accountability and regulation are stymied by a persistent lack of resources supporting public understanding of algorithms and artificial intelligence. Through interactions with a US-based civil rights organization and their coalition of community organizations, we identify a need for (i) heuristics that aid stakeholders in distinguishing between types of analytic and information systems in lay language, and (ii) risk assessment tools for such systems that begin by making algorithms more legible. The present work delivers a toolkit to achieve these aims. This paper both presents the Algorithmic Equity Toolkit (AEKit) as an artifact and details how our participatory process shaped its design. Our work fits within human-computer interaction scholarship as a demonstration of the value of HCI methods and approaches to problems in the area of algorithmic transparency and accountability.
