Defining AI in Policy versus Practice

Paper and Code

Dec 23, 2019

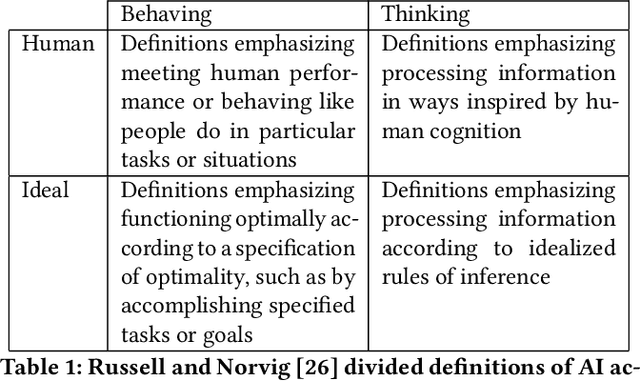

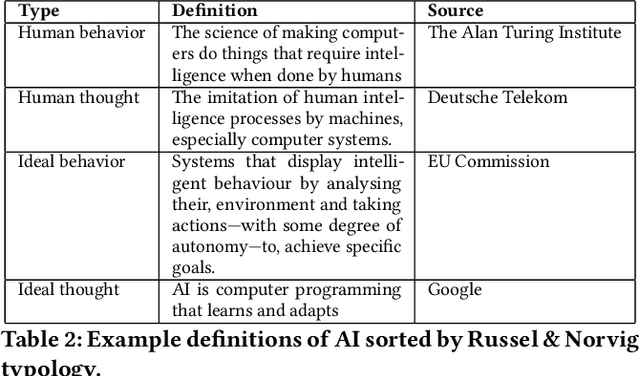

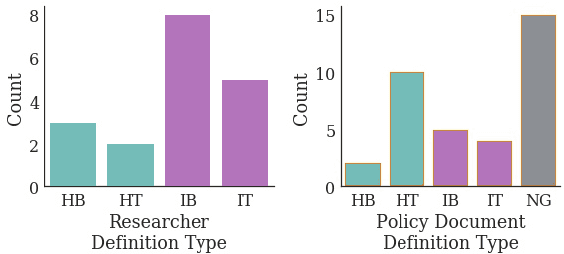

Recent concern about harms of information technologies motivate consideration of regulatory action to forestall or constrain certain developments in the field of artificial intelligence (AI). However, definitional ambiguity hampers the possibility of conversation about this urgent topic of public concern. Legal and regulatory interventions require agreed-upon definitions, but consensus around a definition of AI has been elusive, especially in policy conversations. With an eye towards practical working definitions and a broader understanding of positions on these issues, we survey experts and review published policy documents to examine researcher and policy-maker conceptions of AI. We find that while AI researchers favor definitions of AI that emphasize technical functionality, policy-makers instead use definitions that compare systems to human thinking and behavior. We point out that definitions adhering closely to the functionality of AI systems are more inclusive of technologies in use today, whereas definitions that emphasize human-like capabilities are most applicable to hypothetical future technologies. As a result of this gap, ethical and regulatory efforts may overemphasize concern about future technologies at the expense of pressing issues with existing deployed technologies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge