Oliver Tessmann

Building a Library of Tactile Skills Based on FingerVision

Sep 20, 2019

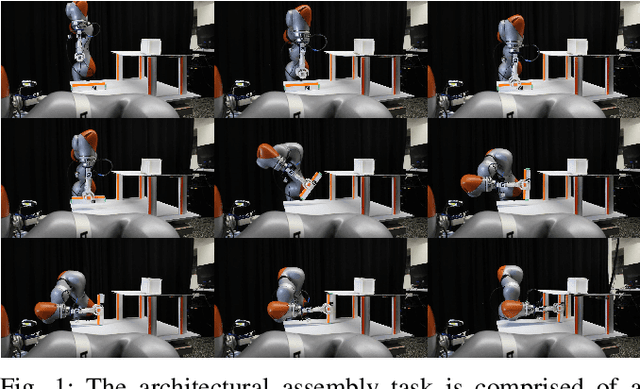

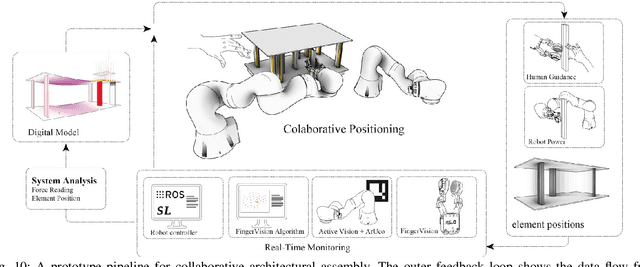

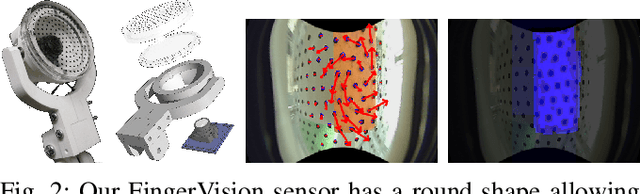

Abstract: Camera-based tactile sensors are emerging as a promising, inexpensive solution for tactile-enhanced manipulation tasks. The recently introduced FingerVision sensor was shown to generate reliable signals for force estimation, object pose estimation, and slip detection. In this paper, we build upon the FingerVision design, improving existing control algorithms and, more importantly, expanding its range of applicability to more challenging tasks by using raw skin deformation data for control. In contrast to previous approaches that rely on the average deformation of the whole sensor surface, we directly employ the local deviation of each spherical marker embedded in the silicone body of the sensor, both for feedback control and as input to learning tasks. We show that with such input, substances of varying texture and viscosity can be distinguished on the basis of the tactile sensations evoked while stirring them. As another application, we learn a mapping between skin deformation and the force applied to an object. To demonstrate the full range of capabilities of the proposed controllers, we deploy them in a challenging architectural assembly task that involves inserting a load-bearing element underneath a bendable plate at the point of maximum load.
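The difference between an averaged deformation signal and per-marker deviations can be illustrated with a small sketch. It assumes marker centroids have already been tracked in each camera frame (tracking is not shown) and uses hypothetical helpers to compute each marker's displacement from its rest position, the whole-surface average, and a flattened feature vector. The 3x3 grid and the pure in-plane twist are made-up inputs, chosen so that the average cancels out while the local deviations do not; this is not the authors' implementation.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def marker_deviations(reference: List[Point], current: List[Point]) -> List[Point]:
    """Displacement (dx, dy) of each tracked marker from its rest position."""
    if len(reference) != len(current):
        raise ValueError("reference and current must list the same markers")
    return [(cx - rx, cy - ry) for (rx, ry), (cx, cy) in zip(reference, current)]


def mean_deformation(deviations: List[Point]) -> Point:
    """Average displacement over the whole sensor surface (a single 2-D summary)."""
    n = len(deviations)
    return (sum(dx for dx, _ in deviations) / n, sum(dy for _, dy in deviations) / n)


def feature_vector(deviations: List[Point]) -> List[float]:
    """Flatten per-marker deviations into [dx0, dy0, dx1, dy1, ...]."""
    return [c for d in deviations for c in d]


def twist(points: List[Point], angle: float) -> List[Point]:
    """Hypothetical test input: rotate all markers about the origin (an in-plane twist)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(x * c - y * s, x * s + y * c) for x, y in points]


# Hypothetical 3x3 marker grid centred on the origin, twisted by 0.05 rad.
rest = [(float(x), float(y)) for y in (-1, 0, 1) for x in (-1, 0, 1)]
devs = marker_deviations(rest, twist(rest, 0.05))

avg = mean_deformation(devs)                           # cancels out by symmetry
largest = max(math.hypot(dx, dy) for dx, dy in devs)   # corner markers move the most
features = feature_vector(devs)                        # 18 values (9 markers x 2)

print(f"mean deformation: ({avg[0]:.2e}, {avg[1]:.2e})")
print(f"largest local deviation: {largest:.4f}")
print(f"feature length: {len(features)}")
```

A flattened vector like `features` is the kind of input that could be passed to a regressor for force estimation or a classifier for texture discrimination; the sketch makes no claim about the specific models used in the paper.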
