Nikolay Zherdev

SwipeBot: DNN-based Autonomous Robot Navigation among Movable Obstacles in Cluttered Environments

May 08, 2023

Abstract: In this paper, we propose a novel approach to wheeled robot navigation through an environment with movable obstacles. The robot exploits knowledge about different obstacle classes and selects the minimally invasive action needed to clear the path. We trained a convolutional neural network (CNN) so that the robot can classify an RGB-D image and decide whether to push a blocking object and how much force to apply. After known objects are segmented, they are projected onto a cost-map, and the robot calculates an optimal path to the goal. If the blocking objects are allowed to be moved, the robot drives through them while pushing them away. We implemented our algorithm in ROS, and an extensive set of simulations showed that the robot successfully overcomes the blocked regions. Our approach allows a robot to build a path through regions where it would have gotten stuck with traditional path-planning techniques.
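The cost-map idea in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: the paper uses a CNN and ROS, whereas the grid, the cell classes `FREE`/`MOVABLE`/`FIXED`, the cost values, and the function `plan_path` below are all hypothetical names chosen for illustration. The sketch assumes that a movable obstacle is given a finite (but elevated) traversal cost, so that a standard shortest-path search (Dijkstra here) may route through it when no free path exists.

```python
import heapq

# Hypothetical cell classes; in the paper these would come from CNN segmentation.
FREE, MOVABLE, FIXED = 0, 1, 2

# Assumed traversal costs: pushing a movable object is allowed but penalized.
CELL_COST = {FREE: 1.0, MOVABLE: 5.0, FIXED: float("inf")}


def plan_path(grid, start, goal):
    """Dijkstra search on a 4-connected grid; returns (path, cost) or (None, inf)."""
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0.0}
    prev = {}
    queue = [(0.0, start)]
    while queue:
        d, cell = heapq.heappop(queue)
        if cell == goal:
            break
        if d > dist.get(cell, float("inf")):
            continue  # stale queue entry
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            step = CELL_COST[grid[nr][nc]]
            if step == float("inf"):
                continue  # fixed obstacles are never entered
            nd = d + step
            if nd < dist.get((nr, nc), float("inf")):
                dist[(nr, nc)] = nd
                prev[(nr, nc)] = cell
                heapq.heappush(queue, (nd, (nr, nc)))
    if goal not in dist:
        return None, float("inf")
    # Walk back from the goal to reconstruct the path.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return path, dist[goal]


# A corridor blocked by one movable object between two fixed ones.
grid = [
    [FREE, FIXED, FREE],
    [FREE, MOVABLE, FREE],
    [FREE, FIXED, FREE],
]
path, cost = plan_path(grid, (1, 0), (1, 2))
```

In this toy grid the only route to the goal passes through the movable cell, so the planner returns a path that pushes through it. If that cell were marked `FIXED` instead, `plan_path` would return `None`, which mirrors the situation the abstract describes for traditional planners.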

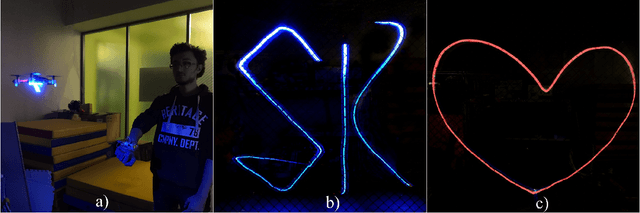

DroneLight: Drone Draws in the Air using Long Exposure Light Painting and ML

Jul 30, 2020

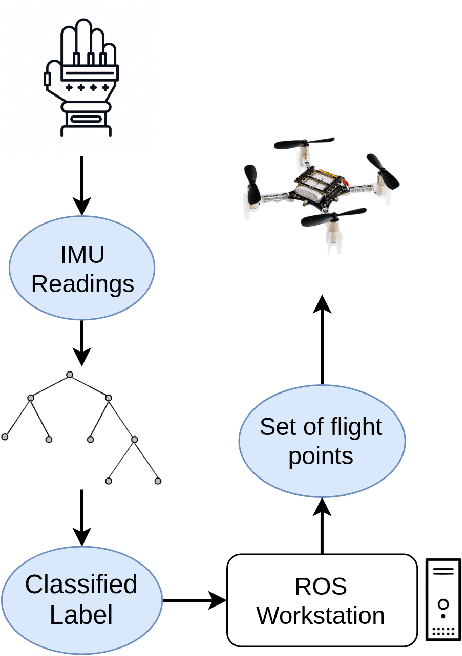

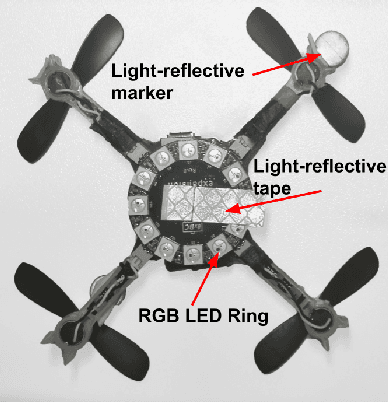

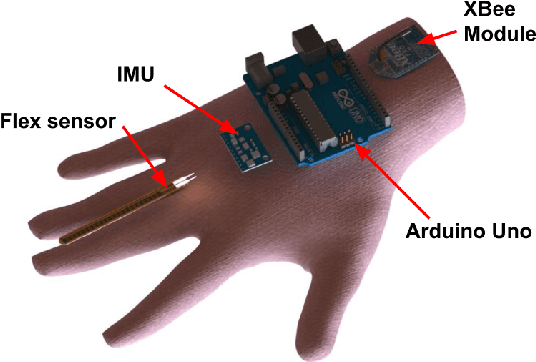

Abstract: We propose a novel human-drone interaction paradigm in which a user directly interacts with a drone to light-paint predefined patterns or letters through hand gestures. The user wears a glove equipped with an IMU sensor to draw letters or patterns in midair. The developed ML algorithm detects the drawn pattern, and the drone light-paints each pattern in midair in real time. The proposed classification model correctly predicts all of the input gestures. The DroneLight system can be applied in drone shows, advertisements, distant communication through text or patterns, and rescue. To our knowledge, it would be the world's first human-centric robotic system that people can use to send messages based on light-painting over distant locations (drone-based instant messaging). Another unique application of the system would be the development of a vision-driven rescue system that reads light-painting by a person in distress and triggers a rescue alarm.
