Nicholas Prayogo

Interpretable Time Series Clustering Using Local Explanations

Aug 01, 2022

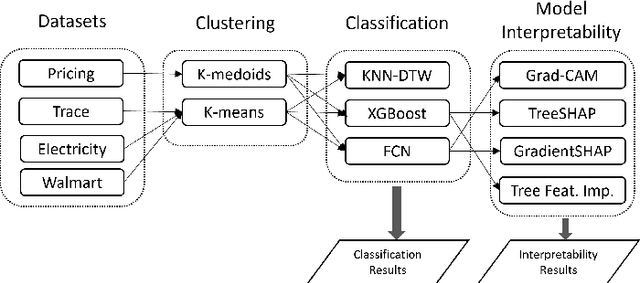

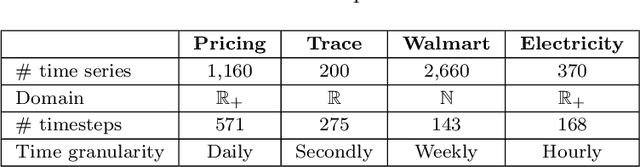

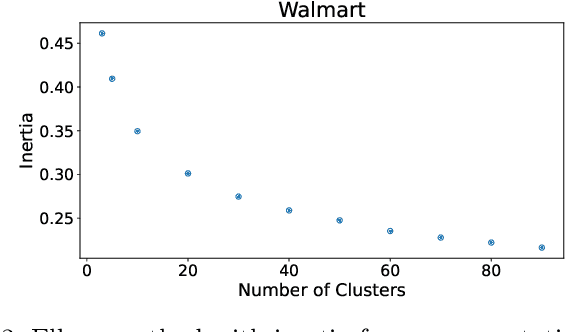

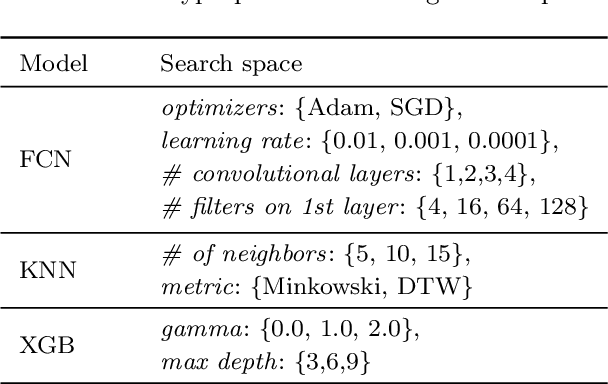

Abstract: This study explores the use of local interpretability methods for explaining time series clustering models. Many state-of-the-art clustering models are not directly explainable. To explain these clustering algorithms, we train classification models to predict the cluster labels and then use interpretability methods to explain the decisions of those classification models. These explanations provide insights into the clustering models. We perform a detailed numerical study that tests the proposed approach on multiple datasets, clustering models, and classification models. The results show that the proposed approach can be used to explain time series clustering models, particularly when the underlying classification model is accurate. Lastly, we provide a detailed analysis of the results, discussing how our approach can be used in a real-life scenario.
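The three-step pipeline described above (cluster the series, fit a classifier to the cluster labels, explain individual classifier decisions) can be illustrated with a minimal, dependency-free Python sketch. Everything in it is an assumption for illustration, not the paper's setup: the toy data, k-means as the clustering model, logistic regression as the surrogate classifier, and occlusion (replace one time step with a baseline value and measure the drop in predicted probability) as the local explanation method. The helper names (`make_series`, `train_surrogate`, `occlusion_explanation`) are hypothetical.

```python
import math
import random


def make_series(n_per_group=20, length=24, seed=0):
    """Hypothetical toy data: group 0 peaks near t=5, group 1 near t=18, plus noise."""
    rng = random.Random(seed)
    data = []
    for center in (5, 18):
        for _ in range(n_per_group):
            data.append([math.exp(-((t - center) ** 2) / 8.0) + rng.gauss(0.0, 0.1)
                         for t in range(length)])
    return data


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(data, k=2, iters=20):
    """Step 1, the clustering model to be explained: plain k-means on raw series."""
    # Deterministic farthest-first initialisation: start from the first series,
    # then add the series farthest from every centroid chosen so far.
    centroids = [list(data[0])]
    while len(centroids) < k:
        far = max(data, key=lambda s: min(sq_dist(s, c) for c in centroids))
        centroids.append(list(far))
    labels = []
    for _ in range(iters):
        labels = [min(range(k), key=lambda c: sq_dist(s, centroids[c])) for s in data]
        for c in range(k):
            members = [s for s, lab in zip(data, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(model, x):
    """Probability that series x belongs to cluster 1 under the surrogate."""
    w, b = model
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_surrogate(X, y, lr=0.5, epochs=300):
    """Step 2: logistic regression fit by batch gradient descent to mimic the cluster labels."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = predict_proba((w, b), xi) - yi
            gw = [g + err * xj for g, xj in zip(gw, xi)]
            gb += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, gw)]
        b -= lr * gb / len(X)
    return w, b


def occlusion_explanation(model, x, baseline=0.0):
    """Step 3, a local explanation: for each time step, how much the probability of
    x's predicted cluster drops when that step alone is replaced by `baseline`."""
    p1 = predict_proba(model, x)
    target = int(p1 >= 0.5)
    p_target = p1 if target else 1.0 - p1
    scores = []
    for t in range(len(x)):
        q1 = predict_proba(model, x[:t] + [baseline] + x[t + 1:])
        scores.append(p_target - (q1 if target else 1.0 - q1))
    return target, scores


data = make_series()
labels = kmeans(data, k=2)
model = train_surrogate(data, labels)
# Fidelity: the fraction of series whose cluster label the surrogate reproduces.
fidelity = sum((predict_proba(model, s) >= 0.5) == (lab == 1)
               for s, lab in zip(data, labels)) / len(data)
target, scores = occlusion_explanation(model, data[0])
top_step = max(range(len(scores)), key=lambda t: scores[t])
print(f"surrogate fidelity: {fidelity:.2f}")
print(f"series 0 -> cluster {target}; most influential time step: {top_step}")
```

The script reports how faithfully the surrogate reproduces the cluster labels and which time step most supports the cluster assignment of the first series; in this toy setup that step should lie near the first group's peak. Checking fidelity before reading any explanation reflects the abstract's caveat that the approach works best when the underlying classification model is accurate: an explanation of an inaccurate surrogate says little about the clustering model itself.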
