Nicholas Antipa

Leveraging 6DoF Pose Foundation Models For Mapping Marine Sediment Burial

Jun 12, 2025Abstract:The burial state of anthropogenic objects on the seafloor provides insight into localized sedimentation dynamics and is also critical for assessing ecological risks, potential pollutant transport, and the viability of recovery or mitigation strategies for hazardous materials such as munitions. Accurate burial depth estimation from remote imagery remains difficult due to partial occlusion, poor visibility, and object degradation. This work introduces a computer vision pipeline, called PoseIDON, which combines deep foundation model features with multiview photogrammetry to estimate six degrees of freedom object pose and the orientation of the surrounding seafloor from ROV video. Burial depth is inferred by aligning CAD models of the objects with observed imagery and fitting a local planar approximation of the seafloor. The method is validated using footage of 54 objects, including barrels and munitions, recorded at a historic ocean dumpsite in the San Pedro Basin. The model achieves a mean burial depth error of approximately 10 centimeters and resolves spatial burial patterns that reflect underlying sediment transport processes. This approach enables scalable, non-invasive mapping of seafloor burial and supports environmental assessment at contaminated sites.

Repurposing Marigold for Zero-Shot Metric Depth Estimation via Defocus Blur Cues

May 23, 2025Abstract:Recent monocular metric depth estimation (MMDE) methods have made notable progress towards zero-shot generalization. However, they still exhibit a significant performance drop on out-of-distribution datasets. We address this limitation by injecting defocus blur cues at inference time into Marigold, a \textit{pre-trained} diffusion model for zero-shot, scale-invariant monocular depth estimation (MDE). Our method effectively turns Marigold into a metric depth predictor in a training-free manner. To incorporate defocus cues, we capture two images with a small and a large aperture from the same viewpoint. To recover metric depth, we then optimize the metric depth scaling parameters and the noise latents of Marigold at inference time using gradients from a loss function based on the defocus-blur image formation model. We compare our method against existing state-of-the-art zero-shot MMDE methods on a self-collected real dataset, showing quantitative and qualitative improvements.

A Differentiable Wave Optics Model for End-to-End Computational Imaging System Optimization

Dec 13, 2024

Abstract:End-to-end optimization, which simultaneously optimizes optics and algorithms, has emerged as a powerful data-driven method for computational imaging system design. This method achieves joint optimization through backpropagation by incorporating differentiable optics simulators to generate measurements and algorithms to extract information from measurements. However, due to high computational costs, it is challenging to model both aberration and diffraction in light transport for end-to-end optimization of compound optics. Therefore, most existing methods compromise physical accuracy by neglecting wave optics effects or off-axis aberrations, which raises concerns about the robustness of the resulting designs. In this paper, we propose a differentiable optics simulator that efficiently models both aberration and diffraction for compound optics. Using the simulator, we conduct end-to-end optimization on scene reconstruction and classification. Experimental results demonstrate that both lenses and algorithms adopt different configurations depending on whether wave optics is modeled. We also show that systems optimized without wave optics suffer from performance degradation when wave optics effects are introduced during testing. These findings underscore the importance of accurate wave optics modeling in optimizing imaging systems for robust, high-performance applications.

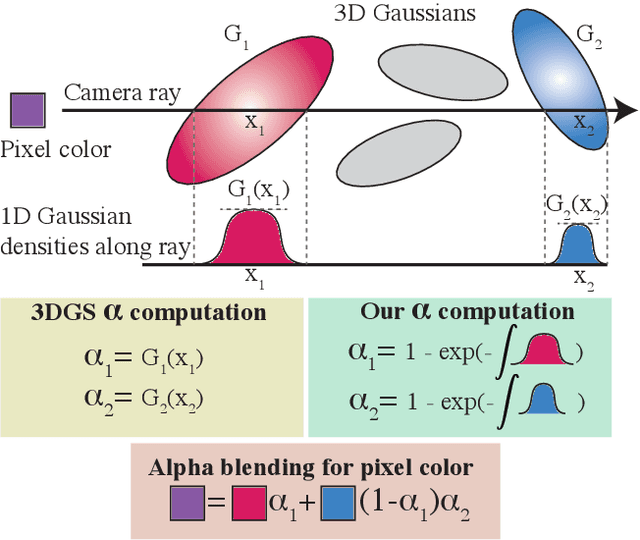

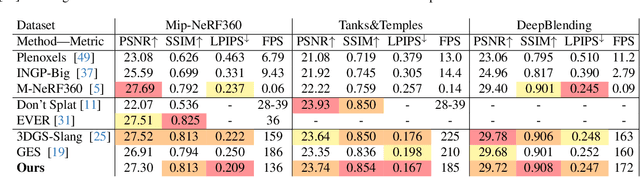

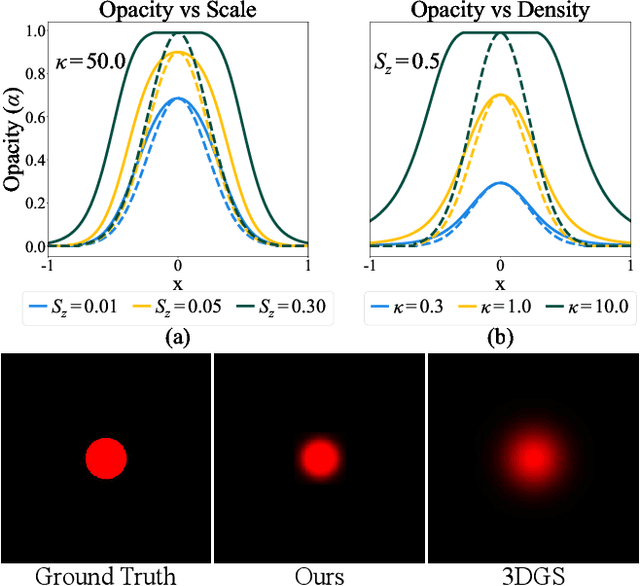

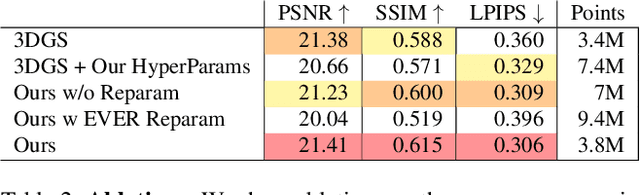

Volumetrically Consistent 3D Gaussian Rasterization

Dec 04, 2024

Abstract:Recently, 3D Gaussian Splatting (3DGS) has enabled photorealistic view synthesis at high inference speeds. However, its splatting-based rendering model makes several approximations to the rendering equation, reducing physical accuracy. We show that splatting and its approximations are unnecessary, even within a rasterizer; we instead volumetrically integrate 3D Gaussians directly to compute the transmittance across them analytically. We use this analytic transmittance to derive more physically-accurate alpha values than 3DGS, which can directly be used within their framework. The result is a method that more closely follows the volume rendering equation (similar to ray-tracing) while enjoying the speed benefits of rasterization. Our method represents opaque surfaces with higher accuracy and fewer points than 3DGS. This enables it to outperform 3DGS for view synthesis (measured in SSIM and LPIPS). Being volumetrically consistent also enables our method to work out of the box for tomography. We match the state-of-the-art 3DGS-based tomography method with fewer points. Being volumetrically consistent also enables our method to work out of the box for tomography. We match the state-of-the-art 3DGS-based tomography method with fewer points.

Pose Estimation of Buried Deep-Sea Objects using 3D Vision Deep Learning Models

Oct 01, 2024

Abstract:We present an approach for pose and burial fraction estimation of debris field barrels found on the seabed in the Southern California San Pedro Basin. Our computational workflow leverages recent advances in foundation models for segmentation and a vision transformer-based approach to estimate the point cloud which defines the geometry of the barrel. We propose BarrelNet for estimating the 6-DOF pose and radius of buried barrels from the barrel point clouds as input. We train BarrelNet using synthetically generated barrel point clouds, and qualitatively demonstrate the potential of our approach using remotely operated vehicle (ROV) video footage of barrels found at a historic dump site. We compare our method to a traditional least squares fitting approach and show significant improvement according to our defined benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge