Navid Anjum Aadit

Probabilistic Computers for Neural Quantum States

Dec 31, 2025Abstract:Neural quantum states efficiently represent many-body wavefunctions with neural networks, but the cost of Monte Carlo sampling limits their scaling to large system sizes. Here we address this challenge by combining sparse Boltzmann machine architectures with probabilistic computing hardware. We implement a probabilistic computer on field programmable gate arrays (FPGAs) and use it as a fast sampler for energy-based neural quantum states. For the two-dimensional transverse-field Ising model at criticality, we obtain accurate ground-state energies for lattices up to 80 $\times$ 80 (6400 spins) using a custom multi-FPGA cluster. Furthermore, we introduce a dual-sampling algorithm to train deep Boltzmann machines, replacing intractable marginalization with conditional sampling over auxiliary layers. This enables the training of sparse deep models and improves parameter efficiency relative to shallow networks. Using this algorithm, we train deep Boltzmann machines for a system with 35 $\times$ 35 (1225 spins). Together, these results demonstrate that probabilistic hardware can overcome the sampling bottleneck in variational simulation of quantum many-body systems, opening a path to larger system sizes and deeper variational architectures.

CMOS + stochastic nanomagnets: heterogeneous computers for probabilistic inference and learning

Apr 18, 2023Abstract:Extending Moore's law by augmenting complementary-metal-oxide semiconductor (CMOS) transistors with emerging nanotechnologies (X) has become increasingly important. Accelerating Monte Carlo algorithms that rely on random sampling with such CMOS+X technologies could have significant impact on a large number of fields from probabilistic machine learning, optimization to quantum simulation. In this paper, we show the combination of stochastic magnetic tunnel junction (sMTJ)-based probabilistic bits (p-bits) with versatile Field Programmable Gate Arrays (FPGA) to design a CMOS + X (X = sMTJ) prototype. Our approach enables high-quality true randomness that is essential for Monte Carlo based probabilistic sampling and learning. Our heterogeneous computer successfully performs probabilistic inference and asynchronous Boltzmann learning, despite device-to-device variations in sMTJs. A comprehensive comparison using a CMOS predictive process design kit (PDK) reveals that compact sMTJ-based p-bits replace 10,000 transistors while dissipating two orders of magnitude of less energy (2 fJ per random bit), compared to digital CMOS p-bits. Scaled and integrated versions of our CMOS + stochastic nanomagnet approach can significantly advance probabilistic computing and its applications in various domains by providing massively parallel and truly random numbers with extremely high throughput and energy-efficiency.

Training Deep Boltzmann Networks with Sparse Ising Machines

Mar 19, 2023Abstract:The slowing down of Moore's law has driven the development of unconventional computing paradigms, such as specialized Ising machines tailored to solve combinatorial optimization problems. In this paper, we show a new application domain for probabilistic bit (p-bit) based Ising machines by training deep generative AI models with them. Using sparse, asynchronous, and massively parallel Ising machines we train deep Boltzmann networks in a hybrid probabilistic-classical computing setup. We use the full MNIST dataset without any downsampling or reduction in hardware-aware network topologies implemented in moderately sized Field Programmable Gate Arrays (FPGA). Our machine, which uses only 4,264 nodes (p-bits) and about 30,000 parameters, achieves the same classification accuracy (90%) as an optimized software-based restricted Boltzmann Machine (RBM) with approximately 3.25 million parameters. Additionally, the sparse deep Boltzmann network can generate new handwritten digits, a task the 3.25 million parameter RBM fails at despite achieving the same accuracy. Our hybrid computer takes a measured 50 to 64 billion probabilistic flips per second, which is at least an order of magnitude faster than superficially similar Graphics and Tensor Processing Unit (GPU/TPU) based implementations. The massively parallel architecture can comfortably perform the contrastive divergence algorithm (CD-n) with up to n = 10 million sweeps per update, beyond the capabilities of existing software implementations. These results demonstrate the potential of using Ising machines for traditionally hard-to-train deep generative Boltzmann networks, with further possible improvement in nanodevice-based realizations.

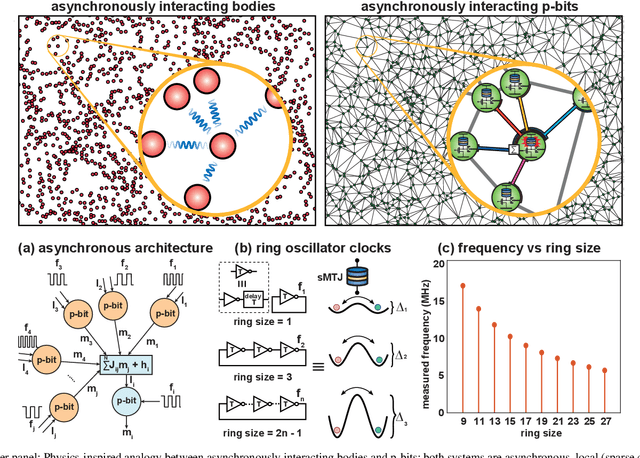

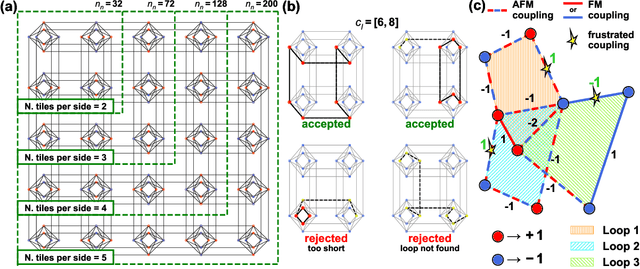

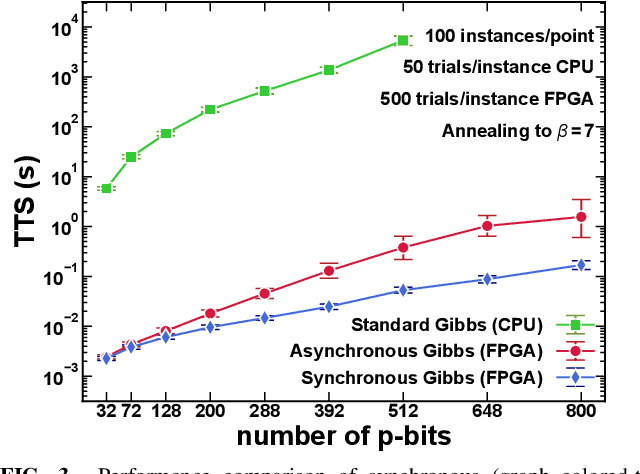

Physics-inspired Ising Computing with Ring Oscillator Activated p-bits

May 15, 2022

Abstract:The nearing end of Moore's Law has been driving the development of domain-specific hardware tailored to solve a special set of problems. Along these lines, probabilistic computing with inherently stochastic building blocks (p-bits) have shown significant promise, particularly in the context of hard optimization and statistical sampling problems. p-bits have been proposed and demonstrated in different hardware substrates ranging from small-scale stochastic magnetic tunnel junctions (sMTJs) in asynchronous architectures to large-scale CMOS in synchronous architectures. Here, we design and implement a truly asynchronous and medium-scale p-computer (with $\approx$ 800 p-bits) that closely emulates the asynchronous dynamics of sMTJs in Field Programmable Gate Arrays (FPGAs). Using hard instances of the planted Ising glass problem on the Chimera lattice, we evaluate the performance of the asynchronous architecture against an ideal, synchronous design that performs parallelized (chromatic) exact Gibbs sampling. We find that despite the lack of any careful synchronization, the asynchronous design achieves parallelism with comparable algorithmic scaling in the ideal, carefully tuned and parallelized synchronous design. Our results highlight the promise of massively scaled p-computers with millions of free-running p-bits made out of nanoscale building blocks such as stochastic magnetic tunnel junctions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge