Nasim Yahyasoltani

Adversary-Robust Graph-Based Learning of WSIs

Mar 21, 2024

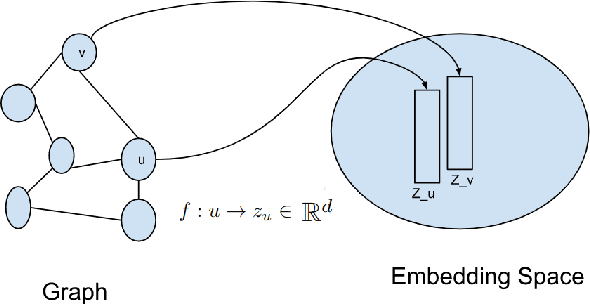

Abstract: Enhancing the robustness of deep learning models against adversarial attacks is crucial, especially in critical domains like healthcare, where significant financial interests heighten the risk of such attacks. Whole slide images (WSIs) are high-resolution, digitized versions of tissue samples mounted on glass slides, scanned using sophisticated imaging equipment. The digital analysis of WSIs presents unique challenges due to their gigapixel size and multi-resolution storage format. In this work, we aim to improve the robustness of Gleason grading classification systems for prostate cancer against adversarial attacks, addressing challenges at both the image and graph levels. We develop a novel graph-based model that uses a graph neural network (GNN) to extract features from the graph representation of WSIs. A denoising module, along with a pooling layer, is incorporated to manage the impact of adversarial attacks on the WSIs. The process concludes with a transformer module that classifies the grades of prostate cancer based on the processed data. To assess the effectiveness of the proposed method, we conducted a comparative analysis using two scenarios. First, we trained and tested the model without the denoiser using WSIs that had not been exposed to any attack. We then introduced a range of attacks at either the image or graph level and processed the attacked WSIs through the proposed network. The performance of the model was evaluated in terms of accuracy and kappa scores. The results from this comparison showed a significant improvement in cancer diagnosis accuracy, highlighting the robustness and efficiency of the proposed method in handling adversarial challenges in the context of medical imaging.
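The two evaluation metrics named in the abstract can be illustrated with a minimal sketch. It assumes unweighted Cohen's kappa; the abstract does not say whether a weighted variant (for example quadratic weighting, which is common for ordinal grades) is used. The label lists are hypothetical and not taken from the paper.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the reference labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def cohen_kappa(y_true, y_pred):
    """Unweighted Cohen's kappa: (p_o - p_e) / (1 - p_e).

    p_o is the observed agreement and p_e the agreement expected by chance
    from the marginal label frequencies. Undefined (division by zero) when
    both label lists contain a single, identical class.
    """
    n = len(y_true)
    p_o = accuracy(y_true, y_pred)
    true_counts = Counter(y_true)
    pred_counts = Counter(y_pred)
    p_e = sum(true_counts[c] * pred_counts[c] for c in true_counts) / (n * n)
    return (p_o - p_e) / (1 - p_e)


# Hypothetical grade labels for six slides (illustration only).
y_true = [0, 1, 2, 2, 1, 0]
y_pred = [0, 1, 2, 1, 1, 0]

acc = accuracy(y_true, y_pred)
kappa = cohen_kappa(y_true, y_pred)
```

Kappa discounts agreement that would occur by chance, which is why it is often reported next to accuracy when grade classes are imbalanced.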

Artifact-Robust Graph-Based Learning in Digital Pathology

Oct 27, 2023

Abstract: Whole slide images (WSIs) are digitized images of tissues placed on glass slides, produced using advanced scanners. The digital processing of WSIs is challenging because they are gigapixel images stored in a multi-resolution format. A common challenge with WSIs is that perturbations/artifacts are inevitable when storing the glass slides and digitizing them. These perturbations include motion, which often arises from slide movement during placement, and changes in hue and brightness due to variations in staining chemicals and the quality of digitizing scanners. In this work, a novel robust learning approach to account for these artifacts is presented. Due to the size and resolution of WSIs, and to account for neighborhood information, graph-based methods are called for. We use a graph convolutional network (GCN) to extract features from the graph representing a WSI. Through a denoiser and pooling layer, the effects of perturbations in WSIs are controlled, and the output is passed to a transformer for the classification of different grades of prostate cancer. To evaluate the efficacy of the proposed approach, the model without the denoiser is trained and tested on WSIs without any perturbation; different perturbations are then introduced in the WSIs, which are passed through the network with the denoiser. The accuracy and kappa scores of the proposed model on a prostate cancer dataset show significant improvement in cancer diagnosis compared with non-robust algorithms.
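For reference, the propagation rule of the standard GCN layer is given below. The abstract does not state which GCN variant the paper uses, so this is the common formulation, shown under that assumption. Here $A$ is the adjacency matrix of the patch graph, $\tilde{A} = A + I$ adds self-loops, $\tilde{D}$ is the diagonal degree matrix of $\tilde{A}$, $H^{(l)}$ holds the node features at layer $l$, $W^{(l)}$ is the learned weight matrix, and $\sigma$ is a nonlinearity.

$$
H^{(l+1)} = \sigma\left(\tilde{D}^{-1/2}\,\tilde{A}\,\tilde{D}^{-1/2}\,H^{(l)}\,W^{(l)}\right)
$$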

Explainable and Position-Aware Learning in Digital Pathology

Jun 14, 2023

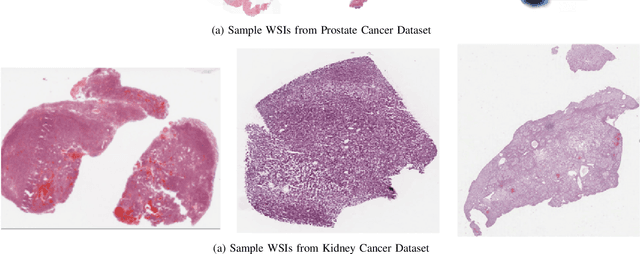

Abstract: Encoding whole slide images (WSIs) as graphs is well motivated, since it makes it possible for a gigapixel-resolution WSI to be represented in its entirety for the purpose of graph learning. To this end, WSIs can be broken into smaller patches that represent the nodes of the graph. Then, graph-based learning methods can be utilized for the grading and classification of cancer. Message passing among neighboring nodes is the foundation of graph-based learning methods. However, these methods do not take into consideration any positional information for the patches, and if two patches are found in topologically isomorphic neighborhoods, their embeddings are nearly identical. In this work, classification of cancer from WSIs is performed with positional embedding and graph attention. To represent the positional embedding of the nodes in graph classification, the proposed method makes use of spline convolutional neural networks (CNNs). The algorithm is then tested on WSI datasets for grading prostate cancer and kidney cancer. A comparison of the proposed method with leading approaches in cancer diagnosis and grading verifies improved performance. The identification of cancerous regions in WSIs is another critical task in cancer diagnosis. In this work, the explainability of the proposed model is also addressed. A gradient-based explainability approach is used to generate saliency maps for the WSIs. These can be used to examine the regions of a WSI that are responsible for the cancer diagnosis, thus rendering the proposed model explainable.
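The limitation described above, that plain message passing cannot tell apart patches in isomorphic neighborhoods, can be seen in a toy sketch. It uses simple mean aggregation and appends raw patch coordinates as a stand-in for positional information; the paper itself uses spline CNNs and graph attention, which are not reproduced here. The graph, features, and coordinates are hypothetical.

```python
def mean_aggregate(features, neighbors):
    """One round of message passing: each node averages itself and its neighbors."""
    out = []
    for i, feat in enumerate(features):
        group = [feat] + [features[j] for j in neighbors[i]]
        out.append([sum(col) / len(group) for col in zip(*group)])
    return out


# Hypothetical toy graph: four patches on a 4-cycle, so every node sees
# a structurally identical neighborhood.
neighbors = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
appearance = [[0.5, 0.5]] * 4  # identical appearance features for all patches
coords = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0]]  # patch positions

# Without position: all embeddings collapse to the same vector.
plain = mean_aggregate(appearance, neighbors)

# With position appended to each node feature: embeddings become distinguishable.
with_pos = mean_aggregate([a + c for a, c in zip(appearance, coords)], neighbors)

distinct_plain = len(set(map(tuple, plain)))
distinct_with_pos = len(set(map(tuple, with_pos)))
```

Concatenating coordinates is the simplest way to break the symmetry; a learned positional embedding serves the same purpose with more flexibility.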

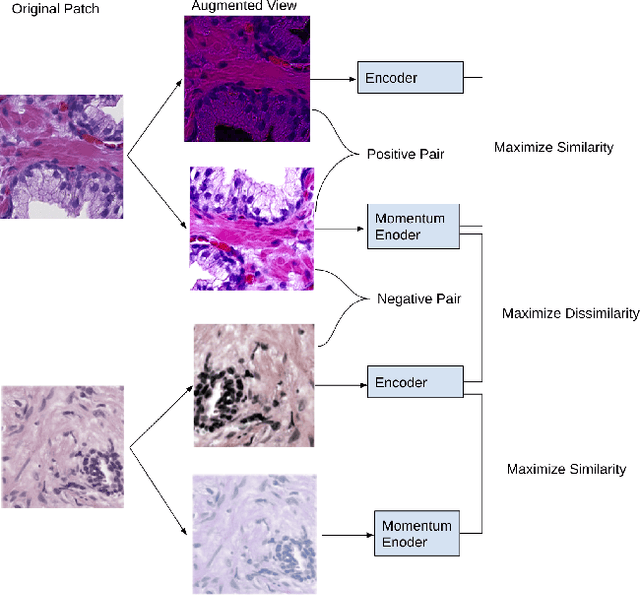

Context-Aware Self-Supervised Learning of Whole Slide Images

Jun 07, 2023

Abstract: Representing whole slide images (WSIs) as graphs enables a more efficient and accurate learning framework for cancer diagnosis. Because a single WSI consists of billions of pixels and the large annotated datasets required for computational pathology are lacking, learning from WSIs using typical deep learning approaches such as convolutional neural networks (CNNs) is challenging. Additionally, down-sampling WSIs may lead to the loss of data that is essential for cancer detection. A novel two-stage learning technique is presented in this work. Since context, such as topological features in the tumor surroundings, may hold important information for cancer grading and diagnosis, a graph representation capturing all dependencies among regions in the WSI is very intuitive. A graph convolutional network (GCN) is deployed to include context from the tumor and adjacent tissues, and self-supervised learning is used to enhance training through unlabeled data. More specifically, the entire slide is represented as a graph, where the nodes correspond to the patches from the WSI. The proposed framework is then tested using WSIs from prostate and kidney cancers. To assess the performance improvement from the self-supervised mechanism, the proposed context-aware model is tested with and without the pre-trained self-supervised layer. The overall model is also compared with multi-instance learning (MIL) based and other existing approaches.
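The slide-as-graph representation can be sketched as follows. The abstract states only that nodes correspond to patches; the edge rule is not given, so this sketch assumes that spatially adjacent patches (8-neighborhood on the patch grid) are connected. The function name, the slide dimensions, and the patch size are hypothetical.

```python
def patch_grid_graph(width, height, patch_size):
    """Tile a slide into non-overlapping patches and connect adjacent patches.

    Returns (nodes, edges): nodes are (row, col) patch positions and edges are
    undirected pairs of node indices. Partial patches at the right and bottom
    borders are dropped.
    """
    cols = width // patch_size
    rows = height // patch_size
    nodes = [(r, c) for r in range(rows) for c in range(cols)]
    index = {rc: i for i, rc in enumerate(nodes)}

    edges = set()
    for (r, c), i in index.items():
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                j = index.get((r + dr, c + dc))
                if j is not None and j != i:
                    # Store each undirected edge once, smaller index first.
                    edges.add((min(i, j), max(i, j)))
    return nodes, sorted(edges)


# Hypothetical small region; a real WSI would produce a far larger graph.
nodes, edges = patch_grid_graph(width=1024, height=768, patch_size=256)
```

In practice each node would also carry a feature vector extracted from its patch, and background patches without tissue would typically be filtered out before building the graph.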
