Napat Thumwanit

Trainable Discrete Feature Embeddings for Variational Quantum Classifier

Jun 17, 2021

Abstract: Quantum classifiers provide sophisticated embeddings of input data in Hilbert space, promising quantum advantage. The advantage stems from quantum feature maps that encode the inputs into quantum states with variational quantum circuits. A recent work shows how to map discrete features with fewer qubits using Quantum Random Access Coding (QRAC), an important primitive for encoding binary strings into quantum states. We propose a new method to embed discrete features with trainable quantum circuits by combining QRAC with a recently proposed strategy for training quantum feature maps called quantum metric learning. We show that the proposed trainable embedding not only requires as few qubits as QRAC but also overcomes the limitations of QRAC in classifying inputs whose classes are based on hard Boolean functions. We numerically demonstrate its use in variational quantum classifiers to achieve better performance in classifying real-world datasets, and thus its potential for leveraging near-term quantum computers for quantum machine learning.
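For readers unfamiliar with QRAC, the following is a minimal sketch of the textbook (3,1)-QRAC, which packs three bits into the Bloch vector of a single qubit so that any one bit can be recovered by measuring X, Y or Z, with success probability (1 + 1/√3)/2 ≈ 0.79. It illustrates only the fixed encoding primitive the abstract builds on; it is not the trainable embedding proposed in the paper, and the function names are hypothetical.

```python
import itertools

import numpy as np

# Single-qubit Pauli matrices.
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
PAULIS = (X, Y, Z)


def qrac_31_encode(bits):
    """Hypothetical helper: encode 3 bits into one qubit via the standard (3,1)-QRAC.

    Bit i sets the sign of the i-th Bloch-vector component: r_i = (-1)^b_i / sqrt(3).
    """
    r = np.array([(-1) ** b for b in bits]) / np.sqrt(3)
    return 0.5 * (I2 + sum(ri * P for ri, P in zip(r, PAULIS)))


def decode_success_prob(rho, i, bit):
    """Probability that measuring Pauli i on rho returns `bit` (+1 eigenvalue decodes to 0)."""
    expectation = np.real(np.trace(rho @ PAULIS[i]))
    p_plus = (1 + expectation) / 2
    return p_plus if bit == 0 else 1 - p_plus


# Every 3-bit string decodes each of its bits with the same success probability.
for bits in itertools.product([0, 1], repeat=3):
    rho = qrac_31_encode(bits)
    probs = [decode_success_prob(rho, i, b) for i, b in enumerate(bits)]
    print(bits, np.round(probs, 4))
```

Because the Bloch vector has unit length, each encoded state is pure; the price of fitting three bits into one qubit is that no single measurement recovers a bit with certainty.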

Embracing Ambiguity: Shifting the Training Target of NLI Models

Jun 06, 2021

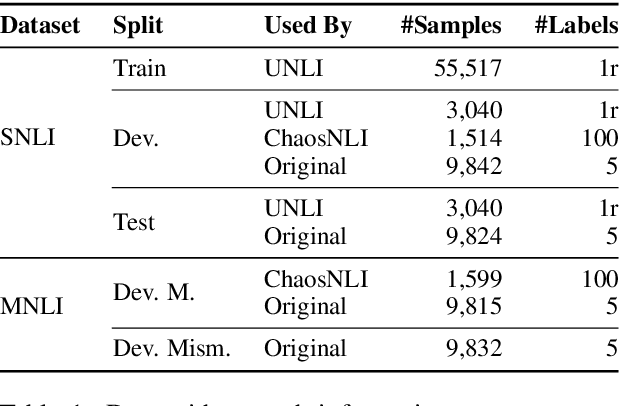

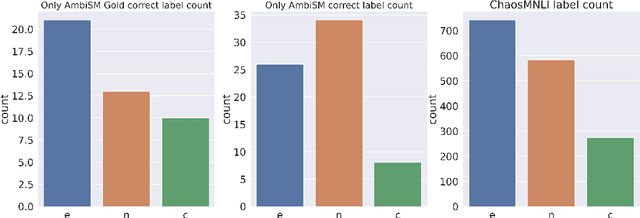

Abstract: Natural Language Inference (NLI) datasets contain examples with highly ambiguous labels. While much research pays little attention to this fact, several recent efforts, such as UNLI and ChaosNLI, acknowledge and embrace the existence of ambiguity. In this paper, we explore training directly on the estimated label distribution of the annotators in the NLI task, using a learning loss based on this ambiguity distribution instead of the gold labels. We prepare AmbiNLI, a trial dataset obtained from readily available sources, and show that it is possible to reduce ChaosNLI divergence scores when fine-tuning on this data, a promising first step towards learning how to capture linguistic ambiguity. Additionally, we show that training on the same amount of data but targeting the ambiguity distribution instead of the gold labels can result in models that achieve higher performance and learn better representations for downstream tasks.
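The difference between the two training targets can be illustrated with a minimal NumPy sketch. It assumes a plain cross-entropy loss against the normalized annotator distribution; the vote counts and logits below are invented for illustration, the helper names are hypothetical, and the paper's exact loss and data pipeline may differ.

```python
import numpy as np


def log_softmax(logits):
    """Numerically stable log-softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def cross_entropy(logits, targets):
    """Mean cross-entropy between target distributions and model predictions.

    `targets` may be one-hot gold labels or soft annotator distributions;
    each row must sum to 1.
    """
    return float(-(targets * log_softmax(logits)).sum(axis=-1).mean())


# Hypothetical batch for 3-way NLI: (entailment, neutral, contradiction).
vote_counts = np.array([[60.0, 30.0, 10.0],   # ambiguous example
                        [5.0, 5.0, 90.0]])    # near-unanimous example
ambiguity_dist = vote_counts / vote_counts.sum(axis=1, keepdims=True)
gold_one_hot = np.eye(3)[vote_counts.argmax(axis=1)]  # majority label as gold

# Hypothetical model outputs for the same two examples.
logits = np.array([[1.5, 1.0, -0.5],
                   [-1.0, -1.0, 2.0]])

print("gold-label loss:    ", cross_entropy(logits, gold_one_hot))
print("ambiguity-dist loss:", cross_entropy(logits, ambiguity_dist))
```

Cross-entropy against a soft target p equals H(p) + KL(p ‖ q), and H(p) does not depend on the model, so minimizing it is equivalent to pulling the model's predicted distribution q toward the annotator distribution rather than toward a single majority label.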
