Naifan Zhuang

Differential Recurrent Neural Network and its Application for Human Activity Recognition

May 09, 2019

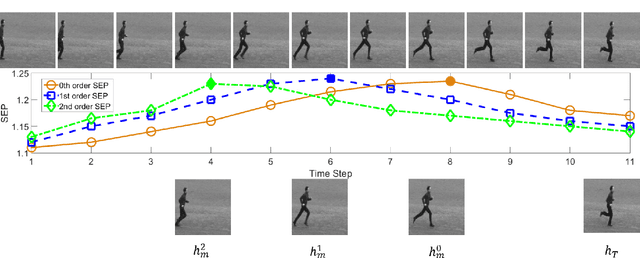

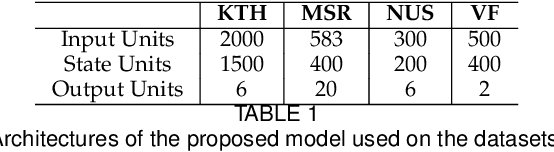

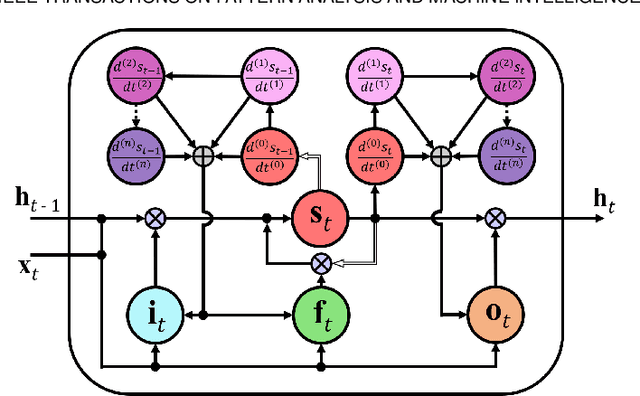

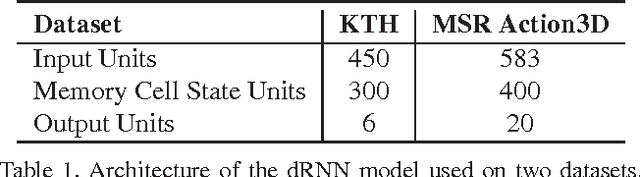

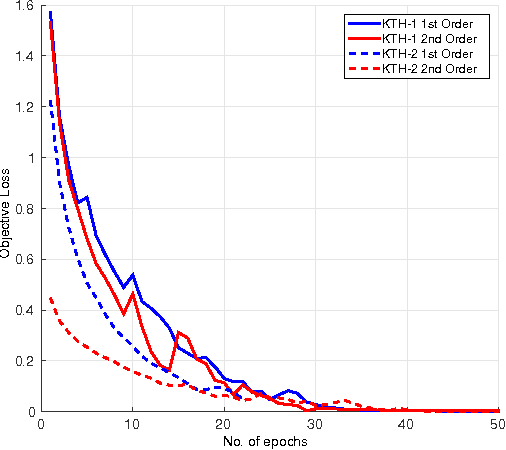

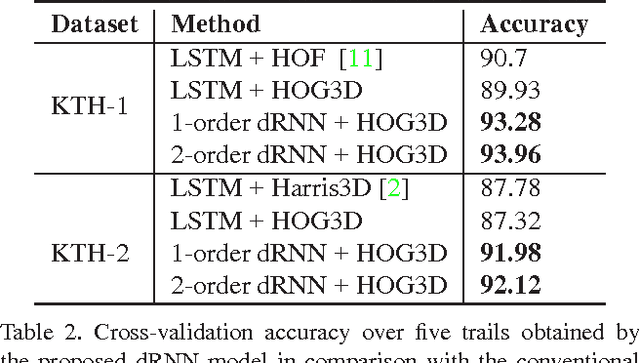

Abstract:The Long Short-Term Memory (LSTM) recurrent neural network is capable of processing complex sequential information since it utilizes special gating schemes for learning representations from long input sequences. It has the potential to model any sequential time-series data, where the current hidden state has to be considered in the context of the past hidden states. This property makes LSTM an ideal choice to learn the complex dynamics present in long sequences. Unfortunately, the conventional LSTMs do not consider the impact of spatio-temporal dynamics corresponding to the given salient motion patterns, when they gate the information that ought to be memorized through time. To address this problem, we propose a differential gating scheme for the LSTM neural network, which emphasizes on the change in information gain caused by the salient motions between the successive video frames. This change in information gain is quantified by Derivative of States (DoS), and thus the proposed LSTM model is termed as differential Recurrent Neural Network (dRNN). In addition, the original work used the hidden state at the last time-step to model the entire video sequence. Based on the energy profiling of DoS, we further propose to employ the State Energy Profile (SEP) to search for salient dRNN states and construct more informative representations. The effectiveness of the proposed model was demonstrated by automatically recognizing human actions from the real-world 2D and 3D single-person action datasets. We point out that LSTM is a special form of dRNN. As a result, we have introduced a new family of LSTMs. Our study is one of the first works towards demonstrating the potential of learning complex time-series representations via high-order derivatives of states.

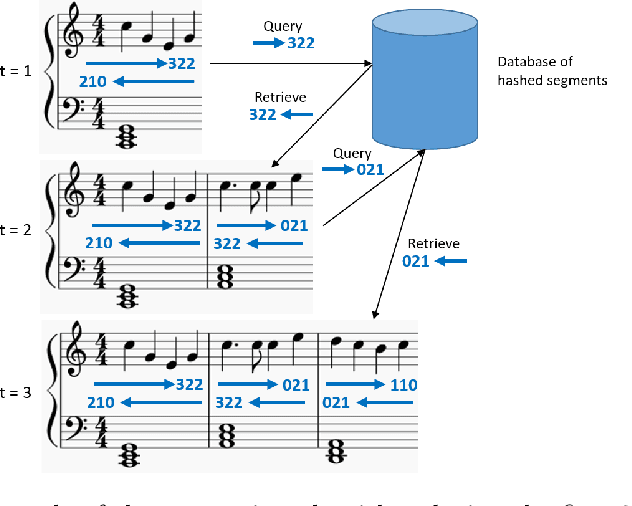

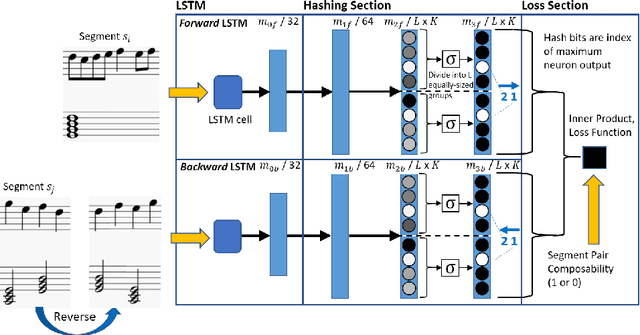

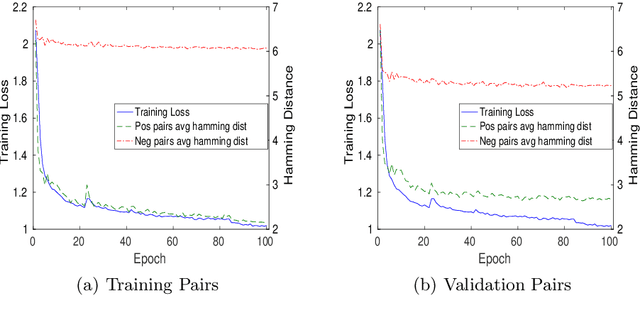

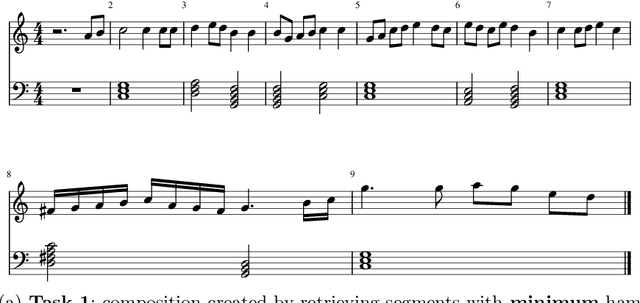

Deep Segment Hash Learning for Music Generation

May 30, 2018

Abstract:Music generation research has grown in popularity over the past decade, thanks to the deep learning revolution that has redefined the landscape of artificial intelligence. In this paper, we propose a novel approach to music generation inspired by musical segment concatenation methods and hash learning algorithms. Given a segment of music, we use a deep recurrent neural network and ranking-based hash learning to assign a forward hash code to the segment to retrieve candidate segments for continuation with matching backward hash codes. The proposed method is thus called Deep Segment Hash Learning (DSHL). To the best of our knowledge, DSHL is the first end-to-end segment hash learning method for music generation, and the first to use pair-wise training with segments of music. We demonstrate that this method is capable of generating music which is both original and enjoyable, and that DSHL offers a promising new direction for music generation research.

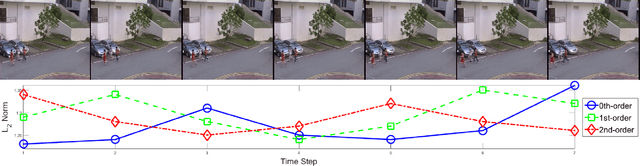

Deep Differential Recurrent Neural Networks

Apr 11, 2018

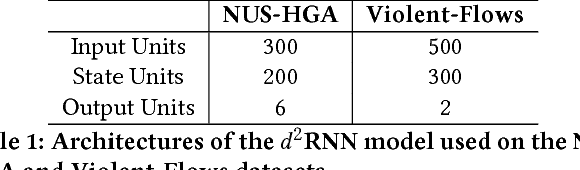

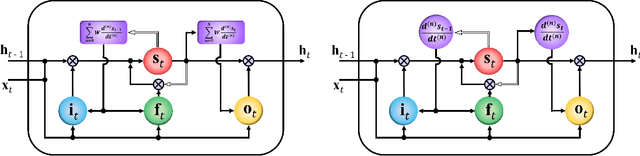

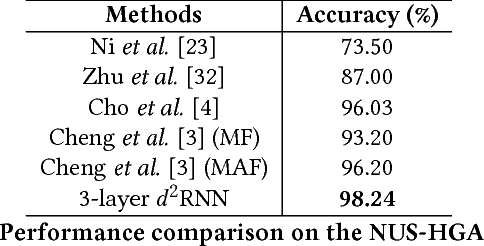

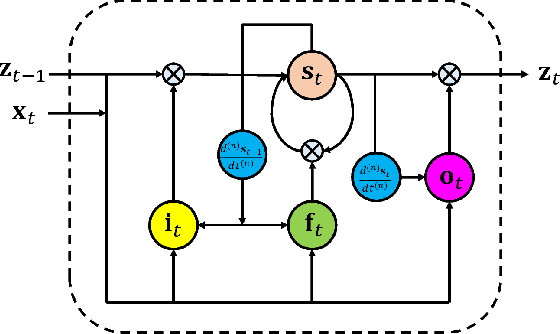

Abstract:Due to the special gating schemes of Long Short-Term Memory (LSTM), LSTMs have shown greater potential to process complex sequential information than the traditional Recurrent Neural Network (RNN). The conventional LSTM, however, fails to take into consideration the impact of salient spatio-temporal dynamics present in the sequential input data. This problem was first addressed by the differential Recurrent Neural Network (dRNN), which uses a differential gating scheme known as Derivative of States (DoS). DoS uses higher orders of internal state derivatives to analyze the change in information gain caused by the salient motions between the successive frames. The weighted combination of several orders of DoS is then used to modulate the gates in dRNN. While each individual order of DoS is good at modeling a certain level of salient spatio-temporal sequences, the sum of all the orders of DoS could distort the detected motion patterns. To address this problem, we propose to control the LSTM gates via individual orders of DoS and stack multiple levels of LSTM cells in an increasing order of state derivatives. The proposed model progressively builds up the ability of the LSTM gates to detect salient dynamical patterns in deeper stacked layers modeling higher orders of DoS, and thus the proposed LSTM model is termed deep differential Recurrent Neural Network (d2RNN). The effectiveness of the proposed model is demonstrated on two publicly available human activity datasets: NUS-HGA and Violent-Flows. The proposed model outperforms both LSTM and non-LSTM based state-of-the-art algorithms.

Differential Recurrent Neural Networks for Action Recognition

Apr 25, 2015

Abstract:The long short-term memory (LSTM) neural network is capable of processing complex sequential information since it utilizes special gating schemes for learning representations from long input sequences. It has the potential to model any sequential time-series data, where the current hidden state has to be considered in the context of the past hidden states. This property makes LSTM an ideal choice to learn the complex dynamics of various actions. Unfortunately, the conventional LSTMs do not consider the impact of spatio-temporal dynamics corresponding to the given salient motion patterns, when they gate the information that ought to be memorized through time. To address this problem, we propose a differential gating scheme for the LSTM neural network, which emphasizes on the change in information gain caused by the salient motions between the successive frames. This change in information gain is quantified by Derivative of States (DoS), and thus the proposed LSTM model is termed as differential Recurrent Neural Network (dRNN). We demonstrate the effectiveness of the proposed model by automatically recognizing actions from the real-world 2D and 3D human action datasets. Our study is one of the first works towards demonstrating the potential of learning complex time-series representations via high-order derivatives of states.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge