Motoki Kawanabe

Cross3DVG: Baseline and Dataset for Cross-Dataset 3D Visual Grounding on Different RGB-D Scans

May 23, 2023

Abstract: We present Cross3DVG, a novel task for cross-dataset visual grounding in 3D scenes that reveals the limitations of existing 3D visual grounding models, which are trained on restricted 3D resources and thus easily overfit to a specific 3D dataset. To facilitate Cross3DVG, we have created a large-scale 3D visual grounding dataset containing more than 63k diverse descriptions of 3D objects within 1,380 indoor RGB-D scans from 3RScan with human annotations, paired with the existing 52k descriptions in ScanRefer. We perform Cross3DVG by training a model on the source 3D visual grounding dataset and then evaluating it, without using target labels, on a target dataset constructed in different ways (e.g., different sensors, 3D reconstruction methods, and language annotators). We conduct comprehensive experiments using established visual grounding models as well as a CLIP-based 2D-3D integration method designed to bridge the gaps between 3D datasets. By performing Cross3DVG tasks, we found that (i) cross-dataset 3D visual grounding performs significantly worse than training and evaluating on a single dataset, suggesting much room for improvement in the cross-dataset generalization of 3D visual grounding; (ii) better detectors and transformer-based localization modules are beneficial for enhancing 3D grounding performance; and (iii) fusing 2D-3D data using CLIP yields further performance improvements. Our Cross3DVG task will provide a benchmark for developing robust 3D visual grounding models capable of handling diverse 3D scenes while leveraging deep language understanding.

ScanQA: 3D Question Answering for Spatial Scene Understanding

Dec 20, 2021

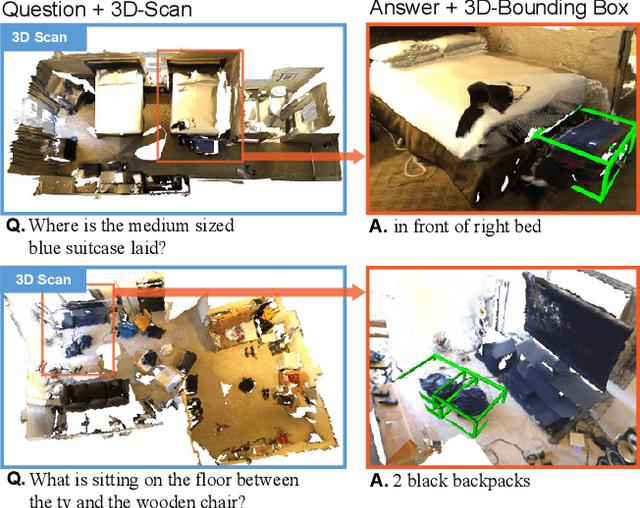

Abstract: We propose a new 3D spatial understanding task, 3D Question Answering (3D-QA). In the 3D-QA task, models receive visual information from the entire 3D scene of a rich RGB-D indoor scan and answer given textual questions about that scene. Unlike in the 2D question answering of VQA, conventional 2D-QA models struggle in 3D-QA with spatial understanding of object alignment and directions, and they fail to localize objects from the textual questions. We propose a baseline model for 3D-QA, named the ScanQA model, which learns a fused descriptor from 3D object proposals and encoded sentence embeddings. This learned descriptor correlates the language expressions with the underlying geometric features of the 3D scan and facilitates the regression of 3D bounding boxes to determine the objects described in textual questions. We collected human-edited question-answer pairs with free-form answers that are grounded to 3D objects in each 3D scene. Our new ScanQA dataset contains over 41K question-answer pairs from 800 indoor scenes drawn from the ScanNet dataset. To the best of our knowledge, ScanQA is the first large-scale effort to perform object-grounded question answering in 3D environments.
