Moon Jeong Park

MetaSSD: Meta-Learned Self-Supervised Detection

May 30, 2022

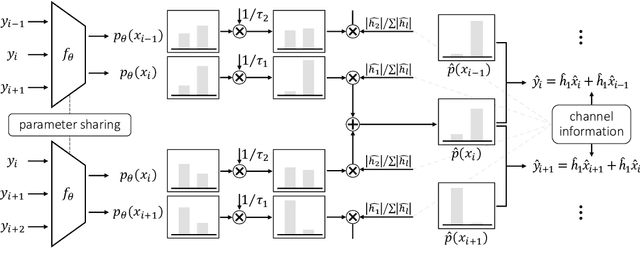

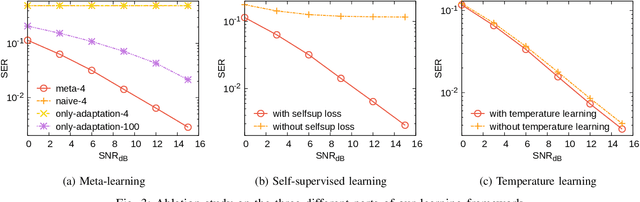

Abstract:Deep learning-based symbol detector gains increasing attention due to the simple algorithm design than the traditional model-based algorithms such as Viterbi and BCJR. The supervised learning framework is often employed to predict the input symbols, where training symbols are used to train the model. There are two major limitations in the supervised approaches: a) a model needs to be retrained from scratch when new train symbols come to adapt to a new channel status, and b) the length of the training symbols needs to be longer than a certain threshold to make the model generalize well on unseen symbols. To overcome these challenges, we propose a meta-learning-based self-supervised symbol detector named MetaSSD. Our contribution is two-fold: a) meta-learning helps the model adapt to a new channel environment based on experience with various meta-training environments, and b) self-supervised learning helps the model to use relatively less supervision than the previously suggested learning-based detectors. In experiments, MetaSSD outperforms OFDM-MMSE with noisy channel information and shows comparable results with BCJR. Further ablation studies show the necessity of each component in our framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge