Moiz Rauf

Meta Learning for Code Summarization

Jan 20, 2022

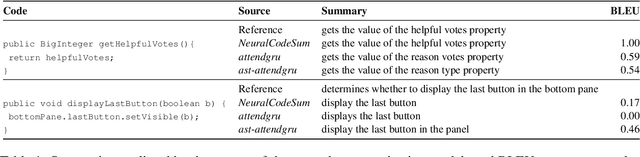

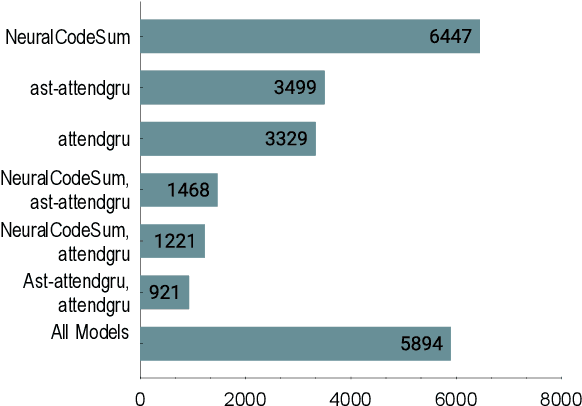

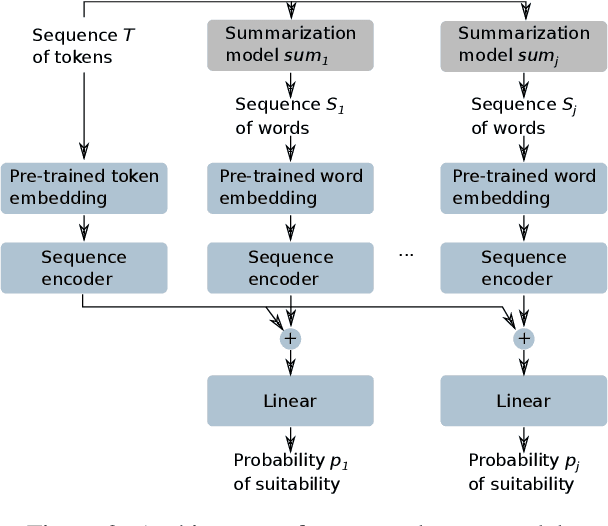

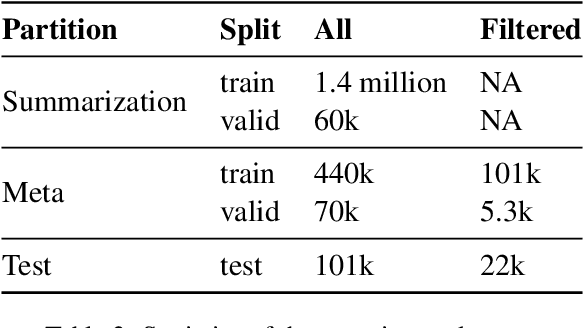

Abstract: Source code summarization is the task of generating a high-level natural language description for a segment of programming language code. Current neural models for the task differ in their architecture and in the aspects of code they consider. In this paper, we show that three state-of-the-art (SOTA) models for code summarization work well on largely disjoint subsets of a large code base. This complementarity motivates model combination: we propose three meta-models that select the best candidate summary for a given code segment. The two neural meta-models improve significantly over the best individual model, obtaining an improvement of 2.1 BLEU points on a dataset of code segments where at least one of the individual models obtains a non-zero BLEU.
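The abstract describes meta-models that pick one candidate summary per code segment. It also describes evaluation on the segments where at least one base model scores a non-zero BLEU. The selection step can be sketched as follows, under these assumptions:

- tokenization is on whitespace;
- scoring uses unsmoothed sentence-level BLEU-4 against a single reference;
- the scorer is pluggable.

The paper's meta-models are learned, and two of them are neural. In this sketch a hypothetical oracle scorer stands in for them. The oracle peeks at the reference, so it gives an upper bound on selection quality and is not a deployable selector. All function names, model names and summaries below are illustrative only.

```python
import math
from collections import Counter
from typing import Callable, Dict, List, Sequence


def ngram_counts(tokens: Sequence[str], n: int) -> Counter:
    """Count every contiguous n-gram of length n in a token sequence."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate: Sequence[str], reference: Sequence[str], max_n: int = 4) -> float:
    """Unsmoothed sentence-level BLEU against one reference.

    Returns 0.0 whenever some n-gram order has no clipped match.
    """
    if not candidate:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand = ngram_counts(candidate, n)
        ref = ngram_counts(reference, n)
        total = sum(cand.values())
        # Clip each candidate n-gram count by how often it occurs in the reference.
        matched = sum(min(count, ref[gram]) for gram, count in cand.items())
        if matched == 0:
            return 0.0
        log_precision_sum += math.log(matched / total)
    # Brevity penalty for candidates that are not longer than the reference.
    c, r = len(candidate), len(reference)
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(log_precision_sum / max_n)


def select_summary(candidates: Dict[str, List[str]],
                   score: Callable[[List[str]], float]) -> str:
    """Return the name of the base model whose candidate summary scores highest."""
    return max(candidates, key=lambda name: score(candidates[name]))


def has_nonzero_bleu(candidates: Dict[str, List[str]], reference: List[str]) -> bool:
    """True if at least one base model obtains a non-zero BLEU on this segment."""
    return any(sentence_bleu(c, reference) > 0 for c in candidates.values())


# Hypothetical toy example: one reference summary and three base-model outputs.
reference = "returns the sum of two integers".split()
candidates = {
    "model_a": "returns the sum of two numbers".split(),
    "model_b": "adds two values".split(),
    "model_c": "returns the sum of two integers".split(),
}


def oracle(summary: List[str]) -> float:
    """Oracle scorer: uses the reference, so it only yields an upper bound."""
    return sentence_bleu(summary, reference)


print(select_summary(candidates, oracle))       # model_c
print(has_nonzero_bleu(candidates, reference))  # True
```

A learned meta-model would replace `oracle` with a function of the code segment and the candidate alone. The `has_nonzero_bleu` filter mirrors the evaluation subset named in the abstract.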

Evaluating Semantic Representations of Source Code

Oct 11, 2019

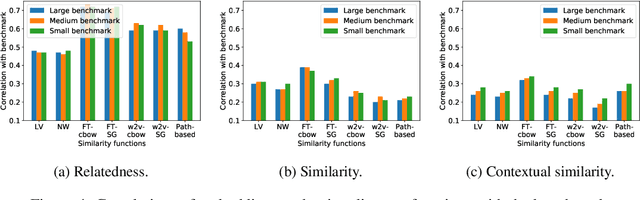

Abstract: Learned representations of source code enable various software developer tools, e.g., to detect bugs or to predict program properties. Word embeddings of identifier names often lie at the core of code representations, because identifiers account for the majority of source code vocabulary and convey important semantic information. Unfortunately, there is currently no generally accepted way of evaluating the quality of identifier embeddings, and current evaluations are biased toward specific downstream tasks. This paper presents IdBench, the first benchmark for evaluating to what extent word embeddings of identifiers represent semantic relatedness and similarity. The benchmark is based on thousands of ratings gathered by surveying 500 software developers. We use IdBench to evaluate state-of-the-art embedding techniques proposed for natural language, an embedding technique specifically designed for source code, and lexical string distance functions, as these are often used in current developer tools. Our results show that the effectiveness of embeddings varies significantly across embedding techniques and that the best available embeddings successfully represent semantic relatedness. On the downside, no existing embedding provides a satisfactory representation of semantic similarity, e.g., because embeddings consider identifiers with opposing meanings as similar, which may lead to fatal mistakes in downstream developer tools. IdBench provides a gold standard to guide the development of novel embeddings that address these limitations.
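IdBench compares identifier embeddings and lexical string distance functions against developer ratings. The sketch below shows one way such a comparison could be scored. It rests on three assumptions that the abstract does not state:

- embeddings are compared with cosine similarity;
- the lexical baseline is a Levenshtein distance normalized to a similarity;
- agreement with the ratings is measured with Spearman rank correlation.

The identifier pairs, vectors and ratings are hypothetical toy values, not IdBench data. The `minimum`/`maximum` pair is constructed to mimic the opposing-meaning failure described in the abstract.

```python
import math
from typing import Dict, List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def levenshtein(a: str, b: str) -> int:
    """Edit distance with unit-cost insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                # delete ca
                            curr[j - 1] + 1,            # insert cb
                            prev[j - 1] + (ca != cb)))  # substitute or match
        prev = curr
    return prev[-1]


def lexical_similarity(a: str, b: str) -> float:
    """1 - normalized edit distance, a simple lexical baseline."""
    longest = max(len(a), len(b))
    return 1.0 - levenshtein(a, b) / longest if longest else 1.0


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation as the Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)


# Hypothetical toy data: 3-d vectors and similarity ratings in [0, 1].
embeddings: Dict[str, List[float]] = {
    "count": [1.0, 0.2, 0.0], "cnt": [0.9, 0.3, 0.0], "total": [0.8, 0.0, 0.3],
    "minimum": [0.0, 1.0, 0.1], "maximum": [0.1, 1.0, 0.0],
    "file": [0.0, 0.0, 1.0], "color": [0.5, -0.5, 0.0],
}
pairs = [("count", "cnt"), ("count", "total"), ("minimum", "maximum"), ("file", "color")]
ratings = [0.9, 0.7, 0.2, 0.0]

embedding_scores = [cosine(embeddings[a], embeddings[b]) for a, b in pairs]
lexical_scores = [lexical_similarity(a, b) for a, b in pairs]
print(f"embedding vs. ratings: {spearman(embedding_scores, ratings):.2f}")  # 0.80
print(f"lexical vs. ratings:   {spearman(lexical_scores, ratings):.2f}")
```

In this toy data the antonym pair gets a cosine of about 0.99 despite a low rating. That pair is what pulls the embedding correlation below 1.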
