Mohammadhamed Tangestanizadeh

Attention-based U-Net Method for Autonomous Lane Detection

Nov 16, 2024

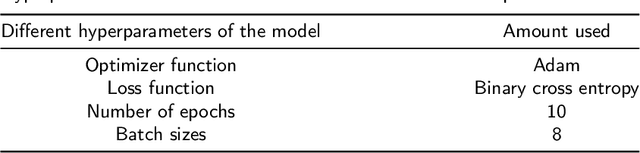

Abstract:Lane detection involves identifying lanes on the road and accurately determining their location and shape. This is a crucial technique for modern assisted and autonomous driving systems. However, several unique properties of lanes pose challenges for detection methods. The lack of distinctive features can cause lane detection algorithms to be confused by other objects with similar appearances. Additionally, the varying number of lanes and the diversity in lane line patterns, such as solid, broken, single, double, merging, and splitting lines, further complicate the task. To address these challenges, Deep Learning (DL) approaches can be employed in various ways. Merging DL models with an attention mechanism has recently surfaced as a new approach. In this context, two deep learning-based lane recognition methods are proposed in this study. The first method employs the Feature Pyramid Network (FPN) model, delivering an impressive 87.59% accuracy in detecting road lanes. The second method, which incorporates attention layers into the U-Net model, significantly boosts the performance of semantic segmentation tasks. The advanced model, achieving an extraordinary 98.98% accuracy and far surpassing the basic U-Net model, clearly showcases its superiority over existing methods in a comparative analysis. The groundbreaking findings of this research pave the way for the development of more effective and reliable road lane detection methods, significantly advancing the capabilities of modern assisted and autonomous driving systems.

A Survey on Reinforcement Learning Applications in SLAM

Aug 26, 2024Abstract:The emergence of mobile robotics, particularly in the automotive industry, introduces a promising era of enriched user experiences and adept handling of complex navigation challenges. The realization of these advancements necessitates a focused technological effort and the successful execution of numerous intricate tasks, particularly in the critical domain of Simultaneous Localization and Mapping (SLAM). Various artificial intelligence (AI) methodologies, such as deep learning and reinforcement learning, present viable solutions to address the challenges in SLAM. This study specifically explores the application of reinforcement learning in the context of SLAM. By enabling the agent (the robot) to iteratively interact with and receive feedback from its environment, reinforcement learning facilitates the acquisition of navigation and mapping skills, thereby enhancing the robot's decision-making capabilities. This approach offers several advantages, including improved navigation proficiency, increased resilience, reduced dependence on sensor precision, and refinement of the decision-making process. The findings of this study, which provide an overview of reinforcement learning's utilization in SLAM, reveal significant advancements in the field. The investigation also highlights the evolution and innovative integration of these techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge