Milapji Singh Gill

Leveraging LLM Agents and Digital Twins for Fault Handling in Process Plants

May 04, 2025

Abstract: Advances in Automation and Artificial Intelligence continue to enhance the autonomy of process plants in handling various operational scenarios. However, certain tasks, such as fault handling, remain challenging, as they rely heavily on human expertise. This highlights the need for systematic, knowledge-based methods. To address this gap, we propose a methodological framework that integrates Large Language Model (LLM) agents with a Digital Twin environment. The LLM agents continuously interpret system states and initiate control actions, including responses to unexpected faults, with the goal of returning the system to normal operation. In this context, the Digital Twin acts both as a structured repository of plant-specific engineering knowledge for agent prompting and as a simulation platform for the systematic validation and verification of the generated corrective control actions. The evaluation using a mixing module of a process plant demonstrates that the proposed framework is capable not only of autonomously controlling the mixing module, but also of generating effective corrective actions to mitigate a pipe clogging with only a few reprompts.
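The validate-before-apply loop described in this abstract can be illustrated with a minimal Python sketch. It assumes that the LLM agent and the Digital Twin are each exposed as a plain function (the hypothetical `propose` and `simulate` callables below). The `Action` field, the feedback text, and the reprompt budget are illustrative assumptions, not the interfaces used in the paper.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    # Hypothetical control variable: relative opening of a valve (0.0 to 1.0).
    valve_opening: float


@dataclass
class SimResult:
    ok: bool       # True if the Digital Twin simulation returns to normal operation
    feedback: str  # textual explanation passed back to the agent on failure


def corrective_loop(
    propose: Callable[[str], Action],
    simulate: Callable[[Action], SimResult],
    fault_description: str,
    max_reprompts: int = 3,
) -> Optional[Action]:
    """Ask the agent for a corrective action, verify it in the Digital Twin,
    and reprompt with the simulation feedback until an action passes or the
    reprompt budget is exhausted."""
    prompt = fault_description
    for _ in range(max_reprompts + 1):  # first attempt plus the reprompts
        action = propose(prompt)
        result = simulate(action)
        if result.ok:
            return action  # only simulation-validated actions are returned
        # Feed the rejection reason back into the next prompt.
        prompt = (
            f"{fault_description}\n"
            f"Previous action {action} was rejected by the simulation: {result.feedback}"
        )
    return None  # no validated action found; hand over to a human operator
```

In a real setup `propose` would wrap an LLM call and `simulate` would run the plant simulation. For a quick check both can be stubs, for example an agent that returns increasing valve openings and a twin that accepts any opening of at least 0.6. Keeping the Digital Twin as the gatekeeper means an unvalidated suggestion never leaves the loop.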

Chatbot-Based Ontology Interaction Using Large Language Models and Domain-Specific Standards

Jul 22, 2024

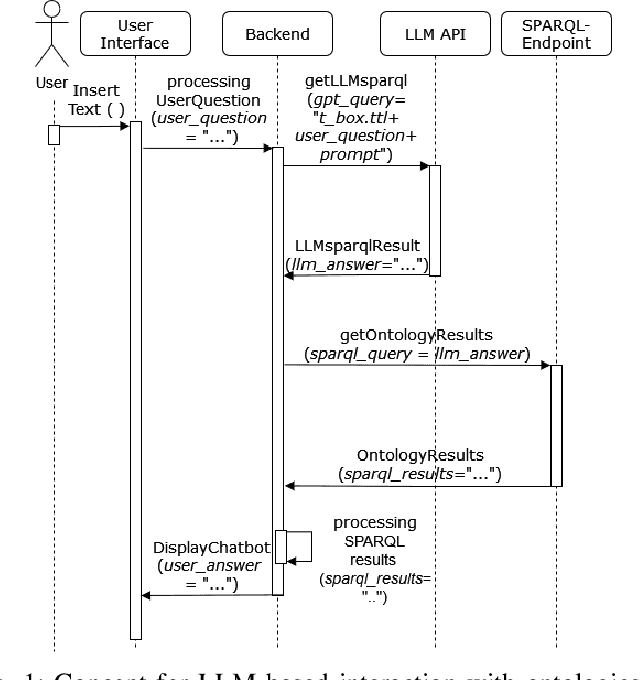

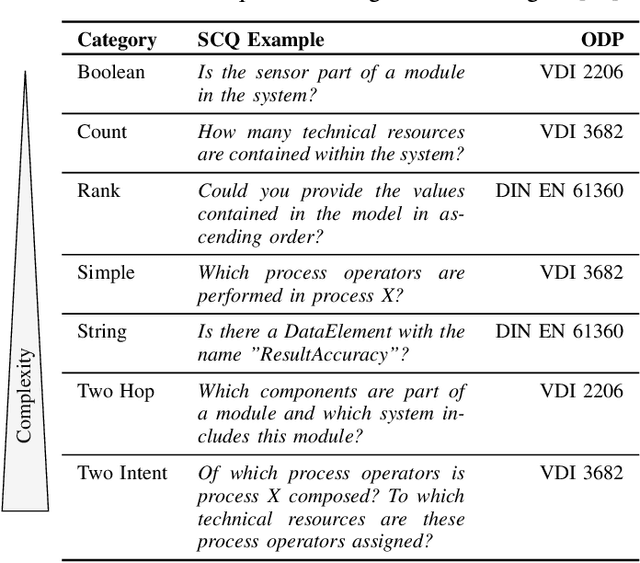

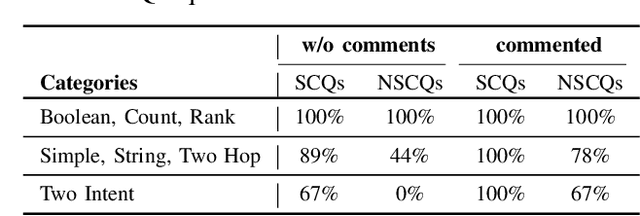

Abstract: This contribution introduces a concept that uses Large Language Models (LLMs) and a chatbot interface to improve SPARQL query generation for ontologies, thereby giving users intuitive access to formalized knowledge. The system converts natural-language questions into accurate SPARQL queries that retrieve only the factual content of the ontology, which prevents the LLM from introducing misinformation or fabricated answers. To improve the quality and precision of the results, the ontology is enriched with textual information from established domain-specific standards that precisely describe its concepts and relationships. An experimental study assesses the accuracy of the generated SPARQL queries, revealing significant benefits of using LLMs to query ontologies and highlighting areas for future research.
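One way to picture the guarantee that answers come only from the ontology is as two guards around the generated query. The Python sketch below is an assumption, not the system described in the paper. It accepts an LLM-generated query only if it uses a SPARQL query form (SELECT, ASK, CONSTRUCT, DESCRIBE) and contains no SPARQL 1.1 Update keyword, and it builds the chatbot reply solely from the returned result rows. Executing the query against a triple store is left out; result rows are assumed to be plain variable-to-value mappings.

```python
import re

# SPARQL 1.1 Update operations that would modify the ontology.
_UPDATE_KEYWORDS = re.compile(
    r"\b(INSERT|DELETE|LOAD|CLEAR|DROP|CREATE|ADD|MOVE|COPY)\b", re.IGNORECASE
)
# Read-only SPARQL query forms.
_QUERY_FORM = re.compile(r"\b(SELECT|ASK|CONSTRUCT|DESCRIBE)\b", re.IGNORECASE)


def is_read_only_query(query: str) -> bool:
    """Conservative check: require a query form and reject any Update keyword.
    This may also reject legitimate queries whose variables or literals happen
    to contain one of these words, which errs on the safe side."""
    return bool(_QUERY_FORM.search(query)) and not _UPDATE_KEYWORDS.search(query)


def answer_from_results(rows: list[dict[str, str]]) -> str:
    """Build the chatbot reply only from query results, never from free LLM text."""
    if not rows:
        return "The ontology contains no matching facts."
    return "; ".join(
        ", ".join(f"{var}={val}" for var, val in sorted(row.items())) for row in rows
    )
```

For example, `is_read_only_query("SELECT ?p WHERE { ?p a ex:Pump }")` is accepted, whereas a generated `INSERT DATA { ... }` is rejected before it reaches the ontology. An empty result yields an explicit "no matching facts" reply instead of an LLM-generated guess.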

Integrating Ontology Design with the CRISP-DM in the context of Cyber-Physical Systems Maintenance

Jul 09, 2024

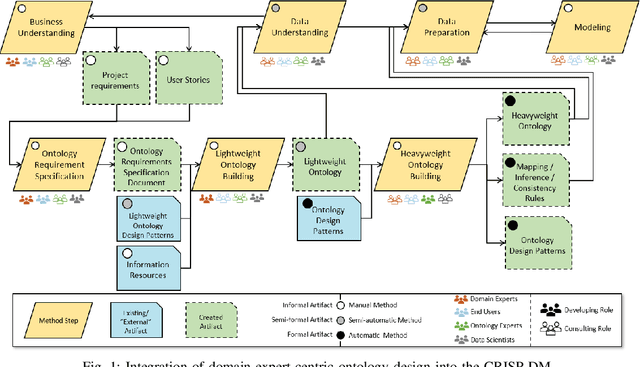

Abstract: This contribution introduces a method that integrates domain-expert-centric ontology design with the Cross-Industry Standard Process for Data Mining (CRISP-DM). The approach aims to efficiently build an application-specific ontology tailored to the corrective maintenance of Cyber-Physical Systems (CPS). The proposed method is divided into three phases. In phase one, the ontology requirements are systematically specified, defining the relevant knowledge scope. In phase two, CPS life cycle data is contextualized using domain-specific ontological artifacts. This formalized domain knowledge is then used within the CRISP-DM to efficiently extract new insights from the data. Finally, in phase three, the newly developed data-driven model is used to populate and expand the ontology: information extracted from the model is semantically annotated and aligned with the existing ontology. The applicability of the method has been evaluated in an anomaly detection case study for a modular process plant.

Representing Timed Automata and Timing Anomalies of Cyber-Physical Production Systems in Knowledge Graphs

Aug 25, 2023

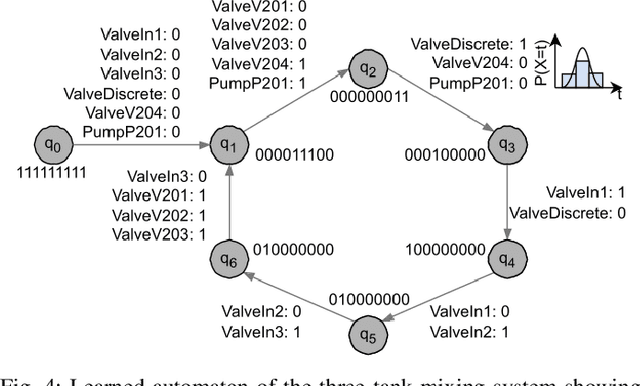

Abstract: Model-based anomaly detection has been a successful approach to identifying deviations from the expected behavior of Cyber-Physical Production Systems (CPPS). Since creating these models manually is time-consuming, it is advantageous to learn them from data and represent them in a generic formalism such as timed automata. However, these models, and by extension the detected anomalies, can be difficult to interpret because additional information about the system is lacking. This paper aims to improve model-based anomaly detection in CPPS by combining the learned timed automaton with a formal knowledge graph of the system. Both the model and the detected anomalies are described in the knowledge graph so that operators can interpret them more easily. The authors additionally propose an ontology of the necessary concepts. The approach was validated on a five-tank mixing CPPS and was able to formally define both the automaton model and the timing anomalies in its execution.
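To make the idea concrete, the following Python sketch encodes a learned timed automaton and a detected timing anomaly as subject-predicate-object triples. The `ex:` vocabulary (`ex:Transition`, `ex:TimingAnomaly`, `ex:minDuration`, and so on), the state and event names, and the single interval guard per transition are hypothetical placeholders; they are not the ontology proposed by the authors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transition:
    source: str    # state before the event
    target: str    # state after the event
    event: str     # observed signal that triggers the transition
    min_dt: float  # lower bound of the learned time guard (seconds)
    max_dt: float  # upper bound of the learned time guard (seconds)


def automaton_triples(transitions: list[Transition]) -> list[tuple]:
    """Describe every transition of the automaton as knowledge-graph triples."""
    triples = []
    for i, t in enumerate(transitions):
        tid = f"ex:transition{i}"
        triples += [
            (tid, "rdf:type", "ex:Transition"),
            (tid, "ex:hasSource", f"ex:{t.source}"),
            (tid, "ex:hasTarget", f"ex:{t.target}"),
            (tid, "ex:hasEvent", f"ex:{t.event}"),
            (tid, "ex:minDuration", t.min_dt),
            (tid, "ex:maxDuration", t.max_dt),
        ]
    return triples


def check_timing(transitions: list[Transition], state: str, event: str, dt: float) -> list[tuple]:
    """Return anomaly triples if `event` fires in `state` after `dt` seconds,
    outside the learned guard; return an empty list if the timing is normal."""
    for i, t in enumerate(transitions):
        if t.source == state and t.event == event:
            if t.min_dt <= dt <= t.max_dt:
                return []
            aid = f"ex:anomaly_{state}_{event}"
            kind = "ex:TooEarly" if dt < t.min_dt else "ex:TooLate"
            return [
                (aid, "rdf:type", "ex:TimingAnomaly"),
                (aid, "ex:anomalyKind", kind),
                (aid, "ex:concernsTransition", f"ex:transition{i}"),
                (aid, "ex:observedDuration", dt),
            ]
    # Unknown (state, event) pairs are a different anomaly class, not handled here.
    return []
```

Because the anomaly triples point at the same transition identifier as the automaton triples, an operator can follow the link from a reported anomaly to the learned guard it violated. That linking is the kind of interpretation support the abstract argues for.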

Integration of Domain Expert-Centric Ontology Design into the CRISP-DM for Cyber-Physical Production Systems

Jul 21, 2023

Abstract: In the age of Industry 4.0 and Cyber-Physical Production Systems (CPPSs), vast amounts of potentially valuable data are being generated. Methods from Machine Learning (ML) and Data Mining (DM) have proven promising for extracting complex and hidden patterns from the collected data. The knowledge obtained can in turn be used to improve tasks such as diagnostics or maintenance planning. However, such data-driven projects, usually performed with the Cross-Industry Standard Process for Data Mining (CRISP-DM), often fail because of the disproportionate amount of time needed to understand and prepare the data. Domain-specific ontologies have proven advantageous in addressing these challenges in a wide variety of Industry 4.0 application scenarios. However, workflows and artifacts from ontology design for CPPSs have not yet been systematically integrated into the CRISP-DM. This contribution therefore presents an integrated approach that enables data scientists to gain insights into the CPPS more quickly and reliably. The approach is applied to an exemplary anomaly detection use case.

Systematic Comparison of Software Agents and Digital Twins: Differences, Similarities, and Synergies in Industrial Production

Jul 17, 2023

Abstract: To achieve a highly agile and flexible production, it is envisioned that industrial production systems gradually become more decentralized, interconnected, and intelligent. Within this vision, production assets collaborate with each other, exhibiting a high degree of autonomy. Furthermore, knowledge about individual production assets is readily available throughout their entire life-cycles. To realize this vision, adequate use of information technology is required. Two commonly applied software paradigms in this context are Software Agents (referred to as Agents) and Digital Twins (DTs). This work presents a systematic comparison of Agents and DTs in industrial applications. The goal of the study is to determine the differences, similarities, and potential synergies between the two paradigms. The comparison is based on the purposes for which Agents and DTs are applied, the properties and capabilities exhibited by these software paradigms, and how they can be allocated within the Reference Architecture Model Industry 4.0. The comparison reveals that Agents are commonly employed in the collaborative planning and execution of production processes, while DTs typically play a more passive role in monitoring production resources and processing information. Although these observations imply characteristic sets of capabilities and properties for both Agents and DTs, a clear and definitive distinction between the two paradigms cannot be made. Instead, the analysis indicates that production assets utilizing a combination of Agents and DTs would demonstrate high degrees of intelligence, autonomy, sociability, and fidelity. To achieve this, further standardization is required, particularly in the field of DTs.
