Mikiya Shibuya

Privacy Preserving Visual SLAM

Jul 27, 2020

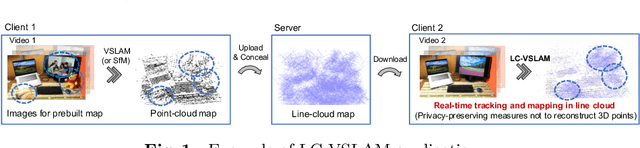

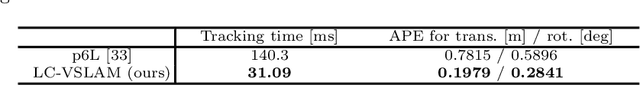

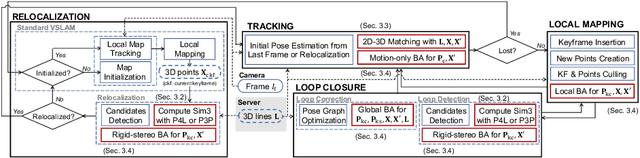

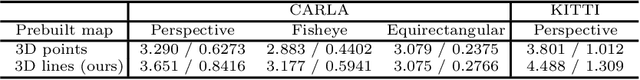

Abstract:This study proposes a privacy-preserving Visual SLAM framework for estimating camera poses and performing bundle adjustment with mixed line and point clouds in real time. Previous studies have proposed localization methods to estimate a camera pose using a line-cloud map for a single image or a reconstructed point cloud. These methods offer a scene privacy protection against the inversion attacks by converting a point cloud to a line cloud, which reconstruct the scene images from the point cloud. However, they are not directly applicable to a video sequence because they do not address computational efficiency. This is a critical issue to solve for estimating camera poses and performing bundle adjustment with mixed line and point clouds in real time. Moreover, there has been no study on a method to optimize a line-cloud map of a server with a point cloud reconstructed from a client video because any observation points on the image coordinates are not available to prevent the inversion attacks, namely the reversibility of the 3D lines. The experimental results with synthetic and real data show that our Visual SLAM framework achieves the intended privacy-preserving formation and real-time performance using a line-cloud map.

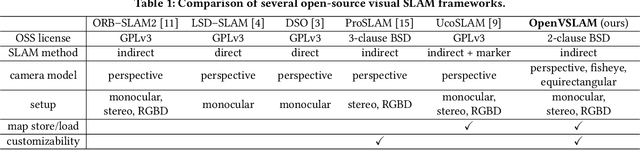

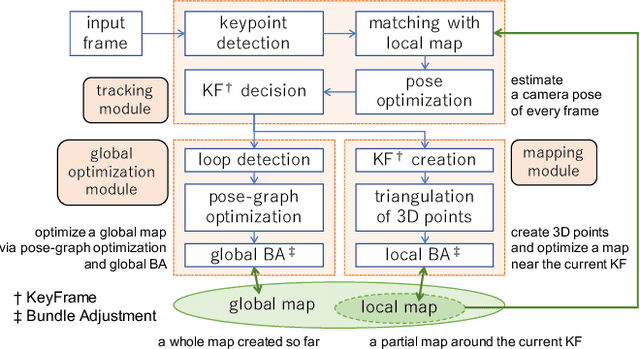

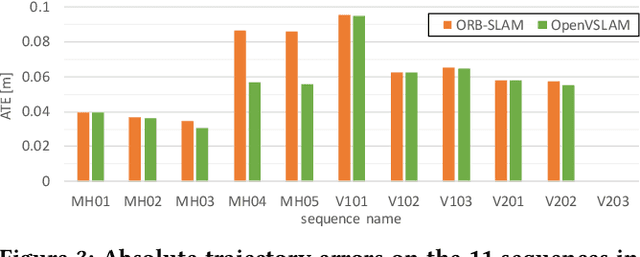

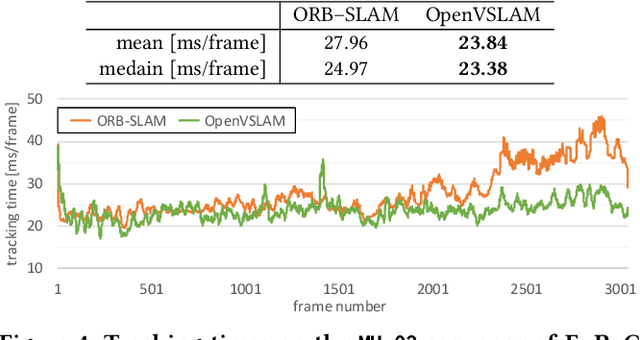

OpenVSLAM: A Versatile Visual SLAM Framework

Oct 10, 2019

Abstract:In this paper, we introduce OpenVSLAM, a visual SLAM framework with high usability and extensibility. Visual SLAM systems are essential for AR devices, autonomous control of robots and drones, etc. However, conventional open-source visual SLAM frameworks are not appropriately designed as libraries called from third-party programs. To overcome this situation, we have developed a novel visual SLAM framework. This software is designed to be easily used and extended. It incorporates several useful features and functions for research and development. OpenVSLAM is released at https://github.com/xdspacelab/openvslam under the 2-clause BSD license.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge