Michael Kraus

IPP

Neural non-canonical Hamiltonian dynamics for long-time simulations

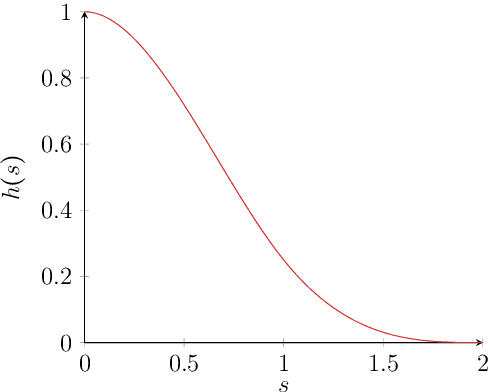

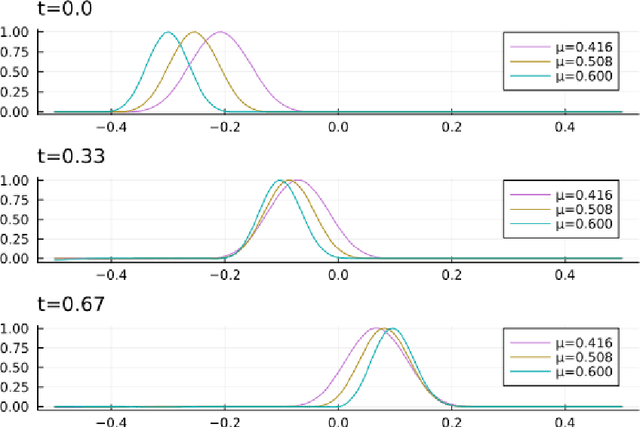

Oct 02, 2025

Abstract: This work focuses on learning non-canonical Hamiltonian dynamics from data, where long-term predictions require the preservation of structure both in the learned model and in numerical schemes. Previous research focused on either facet, respectively with a potential-based architecture and with degenerate variational integrators, but new issues arise when combining both. In experiments, the learnt model is sometimes numerically unstable due to the gauge dependency of the scheme, rendering long-time simulations impossible. In this paper, we identify this problem and propose two different training strategies to address it, either by directly learning the vector field or by learning a time-discrete dynamics through the scheme. Several numerical test cases assess the ability of the methods to learn complex physical dynamics, like the guiding center from gyrokinetic plasma physics.

Structure-Preserving Transformers for Learning Parametrized Hamiltonian Systems

Dec 18, 2023

Abstract: Two of the many trends in neural network research of the past few years have been (i) the learning of dynamical systems, especially with recurrent neural networks such as long short-term memory networks (LSTMs) and (ii) the introduction of transformer neural networks for natural language processing (NLP) tasks. Both of these trends have created enormous amounts of traction, particularly the second one: transformer networks now dominate the field of NLP. Even though some work has been performed on the intersection of these two trends, this work was largely limited to using the vanilla transformer directly without adjusting its architecture for the setting of a physical system. In this work we use a transformer-inspired neural network to learn a complicated non-linear dynamical system and furthermore (for the first time) imbue it with structure-preserving properties to improve long-term stability. This is shown to be extremely important when applying the neural network to real world applications.

Symplectic Autoencoders for Model Reduction of Hamiltonian Systems

Dec 15, 2023

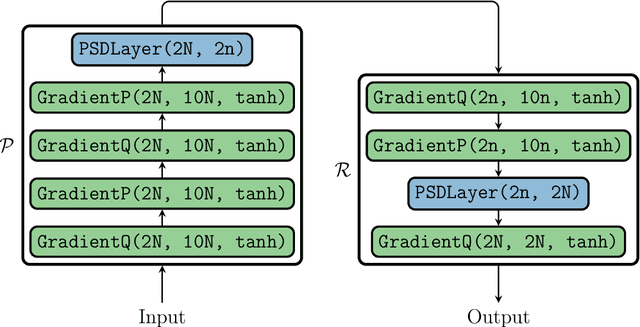

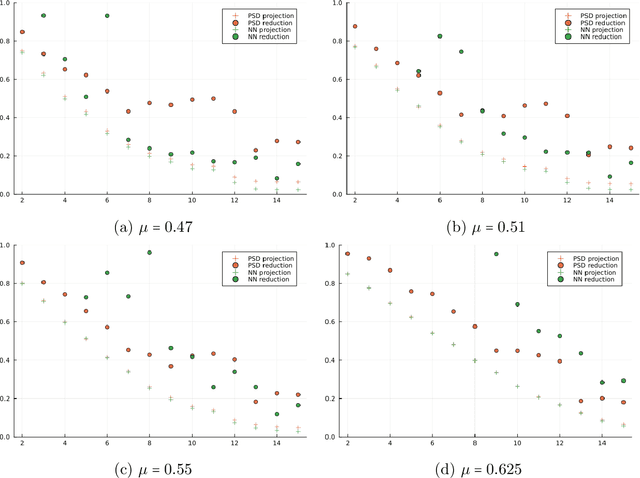

Abstract: Many applications, such as optimization, uncertainty quantification and inverse problems, require repeatedly performing simulations of large-dimensional physical systems for different choices of parameters. This can be prohibitively expensive. In order to save computational cost, one can construct surrogate models by expressing the system in a low-dimensional basis, obtained from training data. This is referred to as model reduction. Past investigations have shown that, when performing model reduction of Hamiltonian systems, it is crucial to preserve the symplectic structure associated with the system in order to ensure long-term numerical stability. Up to this point structure-preserving reductions have largely been limited to linear transformations. We propose a new neural network architecture in the spirit of autoencoders, which are established tools for dimension reduction and feature extraction in data science, to obtain more general mappings. In order to train the network, a non-standard gradient descent approach is applied that leverages the differential-geometric structure emerging from the network design. The new architecture is shown to significantly outperform existing designs in accuracy.

Multi-Objective Loss Balancing for Physics-Informed Deep Learning

Oct 19, 2021

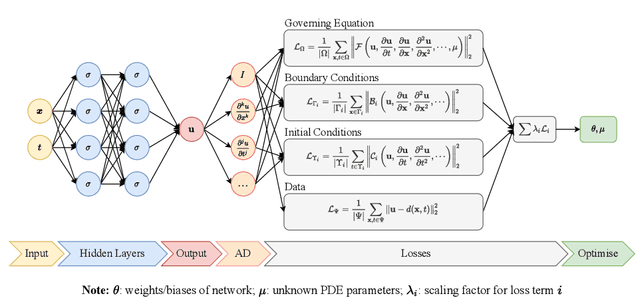

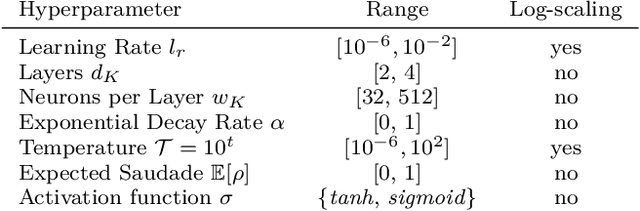

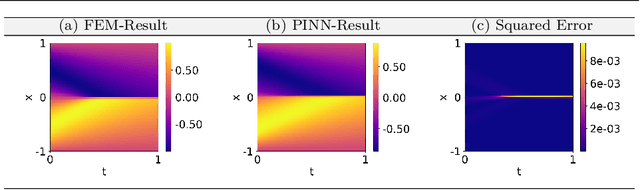

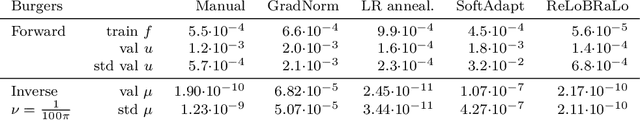

Abstract: Physics Informed Neural Networks (PINN) are algorithms from deep learning leveraging physical laws by including partial differential equations (PDE) together with a respective set of boundary and initial conditions (BC / IC) as penalty terms into their loss function. As the PDE, BC and IC loss function parts can significantly differ in magnitudes, due to their underlying physical units or stochasticity of initialisation, training of PINNs may suffer from severe convergence and efficiency problems, causing PINNs to stay beyond desirable approximation quality. In this work, we observe the significant role of correctly weighting the combination of multiple competitive loss functions for training PINNs effectively. To that end, we implement and evaluate different methods aiming at balancing the contributions of multiple terms of the PINNs loss function and their gradients. After review of three existing loss scaling approaches (Learning Rate Annealing, GradNorm as well as SoftAdapt), we propose a novel self-adaptive loss balancing of PINNs called ReLoBRaLo (Relative Loss Balancing with Random Lookback). Finally, the performance of ReLoBRaLo is compared and verified against these approaches by solving both forward as well as inverse problems on three benchmark PDEs for PINNs: Burgers' equation, Kirchhoff's plate bending equation and Helmholtz's equation. Our simulation studies show that ReLoBRaLo training is much faster and achieves higher accuracy than training PINNs with other balancing methods and hence is very effective and increases sustainability of PINNs algorithms. The adaptability of ReLoBRaLo illustrates robustness across different PDE problem settings. The proposed method can also be employed to the wider class of penalised optimisation problems, including PDE-constrained and Sobolev training apart from the studied PINNs examples.
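
To make the weighting problem described in this abstract concrete, here is a minimal sketch of a composite PINN-style loss built from PDE, BC and IC penalty terms. It is not the paper's ReLoBRaLo algorithm: the function names `weighted_pinn_loss` and `softmax_relative_weights`, the `temperature` parameter, and the balancing rule itself (a softmax over the ratio of each term's current loss to its previous loss) are assumptions chosen only to illustrate how terms of very different magnitude might be rebalanced.

```python
import numpy as np


def weighted_pinn_loss(residuals, weights):
    """Weighted sum of mean-squared penalty terms.

    residuals: dict mapping a term name (e.g. "pde", "bc", "ic") to an
               array of pointwise residuals.
    weights:   dict with one scalar weight per term name.
    Returns the total loss and the unweighted per-term losses.
    """
    terms = {name: float(np.mean(np.square(r))) for name, r in residuals.items()}
    total = sum(weights[name] * value for name, value in terms.items())
    return total, terms


def softmax_relative_weights(current, previous, temperature=1.0):
    """Illustrative balancing rule (an assumption, not ReLoBRaLo).

    Terms whose loss shrank the least relative to the previous step get
    the largest weight. Weights are scaled to sum to the number of terms.
    """
    names = list(current)
    ratios = np.array([current[n] / (previous[n] + 1e-12) for n in names])
    z = ratios / temperature
    z = z - z.max()  # shift for numerical stability
    w = np.exp(z)
    w = len(names) * w / w.sum()
    return dict(zip(names, w))


# Hypothetical residuals whose magnitudes differ by orders of magnitude.
residuals = {
    "pde": np.array([10.0, -10.0]),
    "bc": np.array([0.1, 0.1]),
    "ic": np.array([1.0]),
}
unit_weights = {"pde": 1.0, "bc": 1.0, "ic": 1.0}
total, previous_terms = weighted_pinn_loss(residuals, unit_weights)

# Pretend the next step halved the PDE and IC losses but left BC unchanged.
current_terms = {"pde": 50.0, "bc": 0.01, "ic": 0.5}
new_weights = softmax_relative_weights(current_terms, previous_terms)
```

With unit weights the PDE term dominates the total, which is the imbalance the abstract refers to. In this toy rule the stalled BC term receives the largest weight on the next step.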
