Michael DeLucia

Automatic Tuning of Federated Learning Hyper-Parameters from System Perspective

Oct 06, 2021

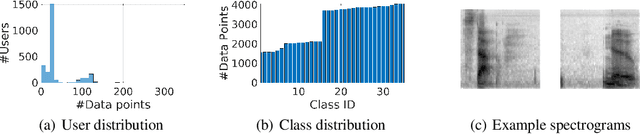

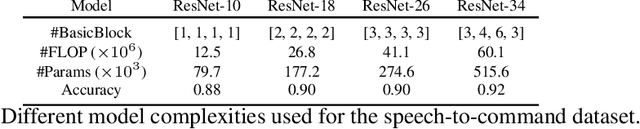

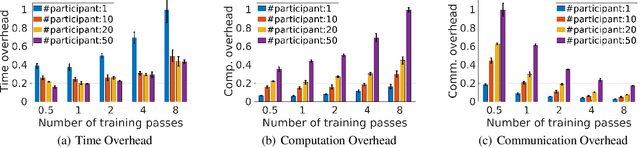

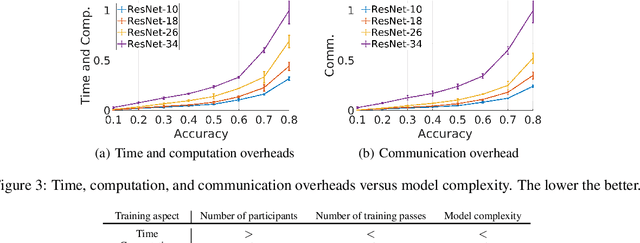

Abstract: Federated learning (FL) is a distributed model training paradigm that preserves clients' data privacy. FL hyper-parameters significantly affect training overhead in terms of time, computation, and communication. However, the current practice of manually selecting FL hyper-parameters places a heavy burden on FL practitioners, since different applications have different training preferences. In this paper, we propose FedTuning, an automatic FL hyper-parameter tuning algorithm tailored to applications' diverse system requirements for FL training. FedTuning is lightweight and flexible, achieving an average improvement of 41% across different training preferences on time, computation, and communication compared to fixed FL hyper-parameters. FedTuning is available at https://github.com/dtczhl/FedTuning.
