Meir Halachmi

Physics and semantic informed multi-sensor calibration via optimization theory and self-supervised learning

Jun 06, 2022

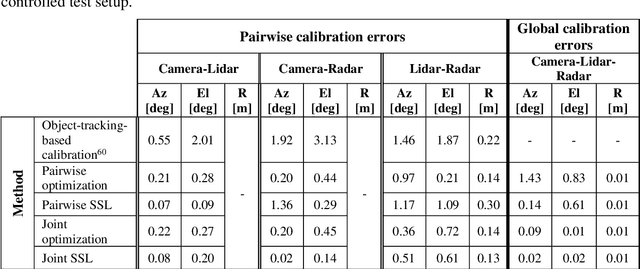

Abstract:Achieving safe and reliable autonomous driving relies greatly on the ability to achieve an accurate and robust perception system; however, this cannot be fully realized without precisely calibrated sensors. Environmental and operational conditions as well as improper maintenance can produce calibration errors inhibiting sensor fusion and, consequently, degrading the perception performance. Traditionally, sensor calibration is performed in a controlled environment with one or more known targets. Such a procedure can only be carried out in between drives and requires manual operation; a tedious task if needed to be conducted on a regular basis. This sparked a recent interest in online targetless methods, capable of yielding a set of geometric transformations based on perceived environmental features, however, the required redundancy in sensing modalities makes this task even more challenging, as the features captured by each modality and their distinctiveness may vary. We present a holistic approach to performing joint calibration of a camera-lidar-radar trio. Leveraging prior knowledge and physical properties of these sensing modalities together with semantic information, we propose two targetless calibration methods within a cost minimization framework once via direct online optimization, and second via self-supervised learning (SSL).

Coherent, super resolved radar beamforming using self-supervised learning

Jun 21, 2021

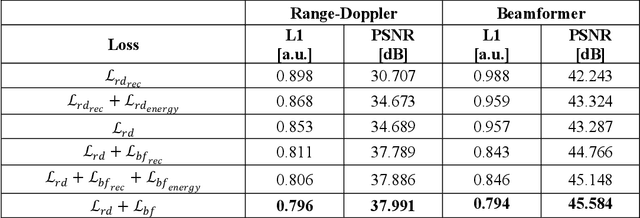

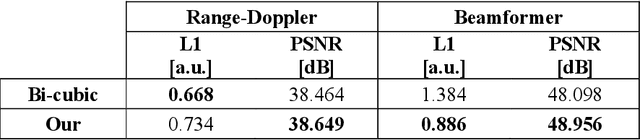

Abstract:High resolution automotive radar sensors are required in order to meet the high bar of autonomous vehicles needs and regulations. However, current radar systems are limited in their angular resolution causing a technological gap. An industry and academic trend to improve angular resolution by increasing the number of physical channels, also increases system complexity, requires sensitive calibration processes, lowers robustness to hardware malfunctions and drives higher costs. We offer an alternative approach, named Radar signal Reconstruction using Self Supervision (R2-S2), which significantly improves the angular resolution of a given radar array without increasing the number of physical channels. R2-S2 is a family of algorithms which use a Deep Neural Network (DNN) with complex range-Doppler radar data as input and trained in a self-supervised method using a loss function which operates in multiple data representation spaces. Improvement of 4x in angular resolution was demonstrated using a real-world dataset collected in urban and highway environments during clear and rainy weather conditions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge