Mayank Singh Chauhan

CEAR: Cross-Entity Aware Reranker for Knowledge Base Completion

Apr 18, 2021

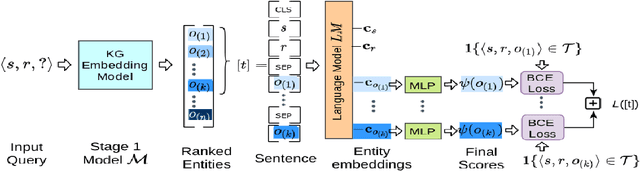

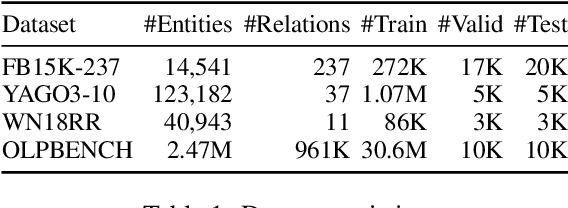

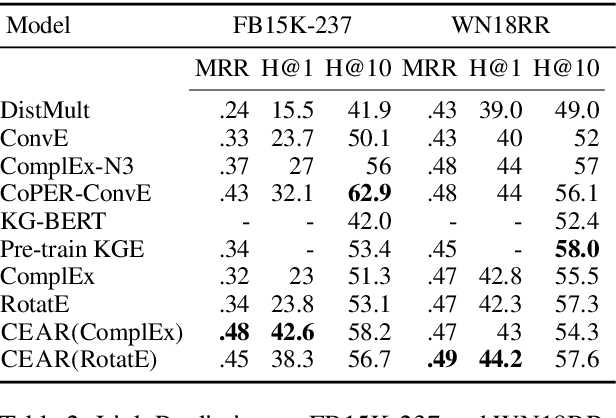

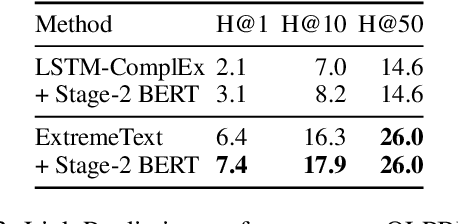

Abstract:Pre-trained language models (LMs) like BERT have shown to store factual knowledge about the world. This knowledge can be used to augment the information present in Knowledge Bases, which tend to be incomplete. However, prior attempts at using BERT for task of Knowledge Base Completion (KBC) resulted in performance worse than embedding based techniques that rely only on the graph structure. In this work we develop a novel model, Cross-Entity Aware Reranker (CEAR), that uses BERT to re-rank the output of existing KBC models with cross-entity attention. Unlike prior work that scores each entity independently, CEAR uses BERT to score the entities together, which is effective for exploiting its factual knowledge. CEAR establishes a new state of the art performance with 42.6 HITS@1 in FB15k-237 (32.7% relative improvement) and 5.3 pt improvement in HITS@1 for Open Link Prediction.

Embedded CNN based vehicle classification and counting in non-laned road traffic

Jan 18, 2019

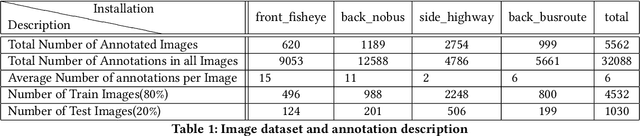

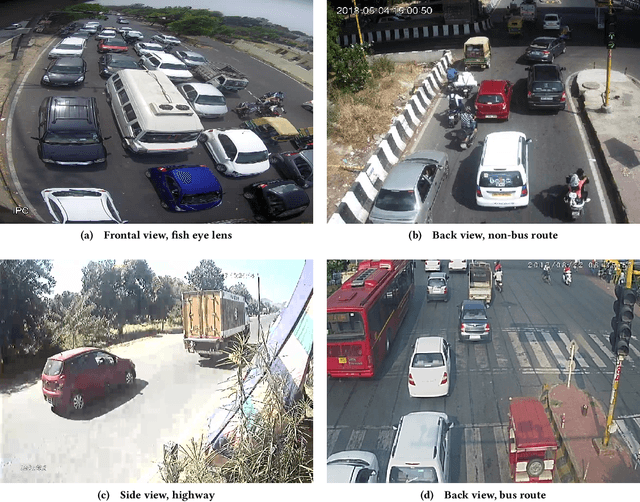

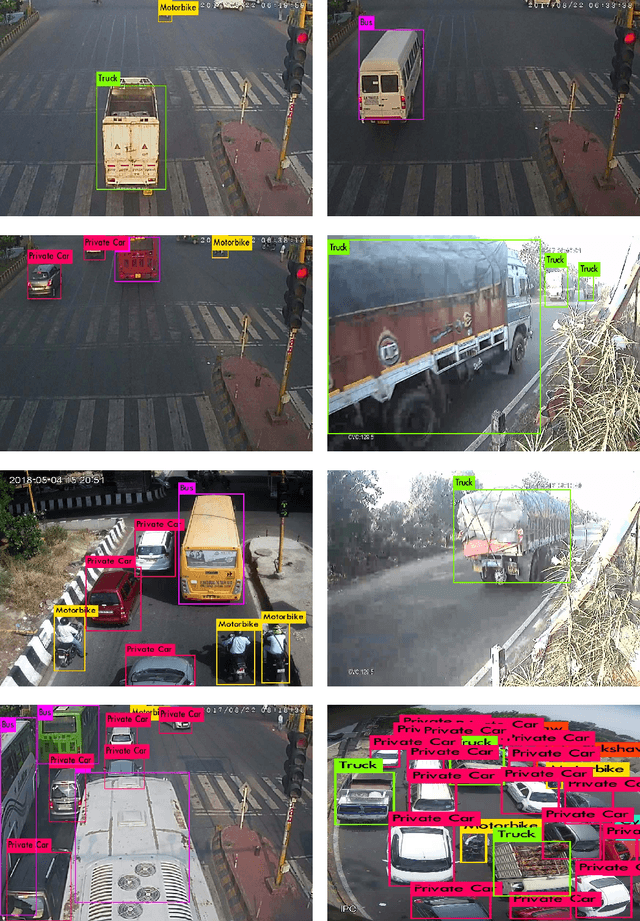

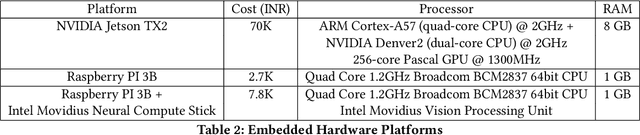

Abstract:Classifying and counting vehicles in road traffic has numerous applications in the transportation engineering domain. However, the wide variety of vehicles (two-wheelers, three-wheelers, cars, buses, trucks etc.) plying on roads of developing regions without any lane discipline, makes vehicle classification and counting a hard problem to automate. In this paper, we use state of the art Convolutional Neural Network (CNN) based object detection models and train them for multiple vehicle classes using data from Delhi roads. We get upto 75% MAP on an 80-20 train-test split using 5562 video frames from four different locations. As robust network connectivity is scarce in developing regions for continuous video transmissions from the road to cloud servers, we also evaluate the latency, energy and hardware cost of embedded implementations of our CNN model based inferences.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge