Markus Zopf

Graph Reasoning Networks

Jul 08, 2024

Abstract:Graph neural networks (GNNs) are the predominant approach for graph-based machine learning. While neural networks have shown great performance at learning useful representations, they are often criticized for their limited high-level reasoning abilities. In this work, we present Graph Reasoning Networks (GRNs), a novel approach to combine the strengths of fixed and learned graph representations and a reasoning module based on a differentiable satisfiability solver. While results on real-world datasets show comparable performance to GNNs, experiments on synthetic datasets demonstrate the potential of the newly proposed method.

Effective and Interpretable Information Aggregation with Capacity Networks

Jul 25, 2022

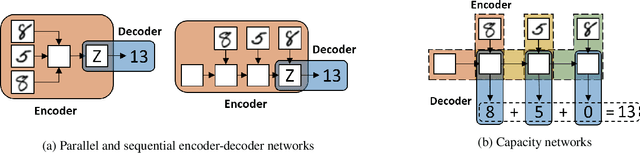

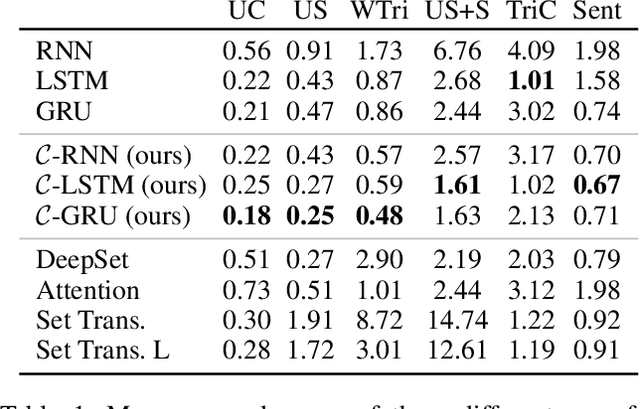

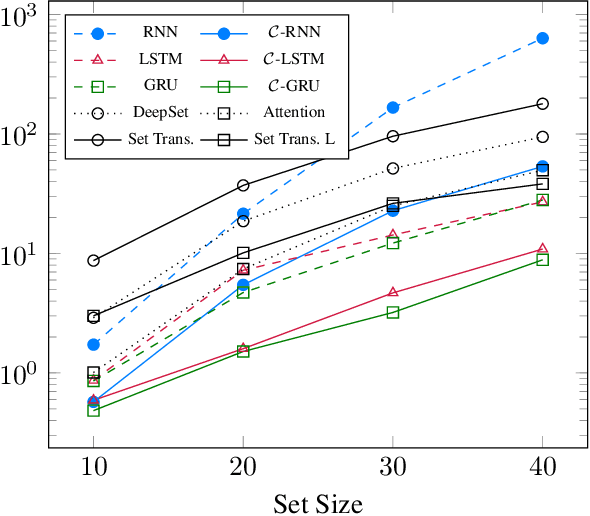

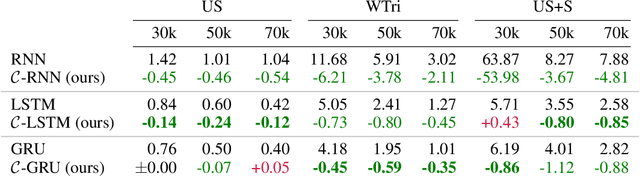

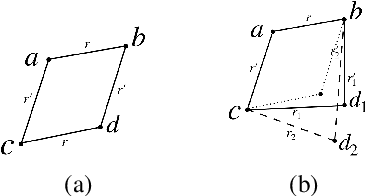

Abstract:How to aggregate information from multiple instances is a key question in multiple instance learning. Prior neural models implement different variants of the well-known encoder-decoder strategy, according to which all input features are encoded into a single, high-dimensional embedding that is then decoded to generate an output. In this work, inspired by Choquet capacities, we propose Capacity networks. Unlike encoder-decoders, Capacity networks generate multiple interpretable intermediate results which can be aggregated in a semantically meaningful space to obtain the final output. Our experiments show that implementing this simple inductive bias leads to improvements over different encoder-decoder architectures in a wide range of experiments. Moreover, the interpretable intermediate results make Capacity networks interpretable by design, which allows a semantically meaningful inspection, evaluation, and regularization of the network internals.
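For readers unfamiliar with Choquet capacities, the following is a minimal sketch of the classical discrete Choquet integral, which aggregates values with respect to a capacity (a monotone set function). It is not the Capacity network architecture from the paper; the function name `choquet_integral` and the three example capacities are illustrative assumptions.

```python
from typing import Callable, FrozenSet, Sequence


def choquet_integral(values: Sequence[float],
                     capacity: Callable[[FrozenSet[int]], float]) -> float:
    """Discrete Choquet integral of non-negative values w.r.t. a capacity.

    `capacity` maps a set of indices to a weight and is assumed to be
    monotone with capacity(empty set) = 0 and capacity(all indices) = 1.
    (Hypothetical helper for illustration, not taken from the paper.)
    """
    # Visit indices in order of increasing value.
    order = sorted(range(len(values)), key=lambda i: values[i])
    total, previous = 0.0, 0.0
    for rank, idx in enumerate(order):
        # Indices whose value is at least values[idx].
        upper_set = frozenset(order[rank:])
        total += (values[idx] - previous) * capacity(upper_set)
        previous = values[idx]
    return total


x = [0.2, 0.5, 0.9]
n = len(x)

# Three simple example capacities (assumptions chosen for illustration).
additive = lambda s: len(s) / n                # recovers the arithmetic mean
any_present = lambda s: 1.0 if s else 0.0      # recovers the maximum
all_present = lambda s: 1.0 if len(s) == n else 0.0  # recovers the minimum

print(choquet_integral(x, additive))
print(choquet_integral(x, any_present))
print(choquet_integral(x, all_present))
```

The choice of capacity determines the aggregation behavior, so mean, maximum, and minimum all arise as special cases of the same operator.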

1-WL Expressiveness Is All You Need

Feb 21, 2022

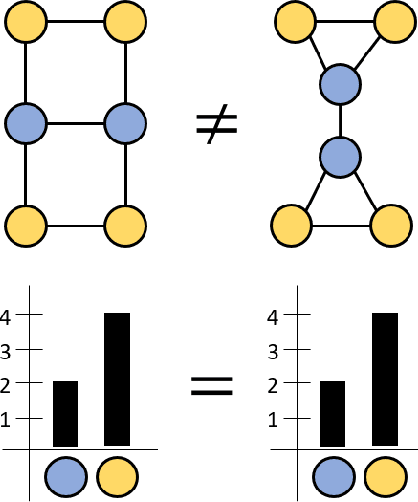

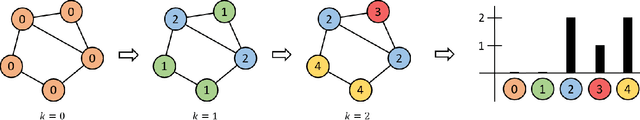

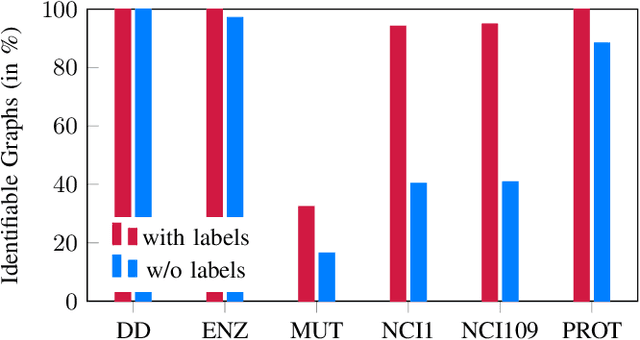

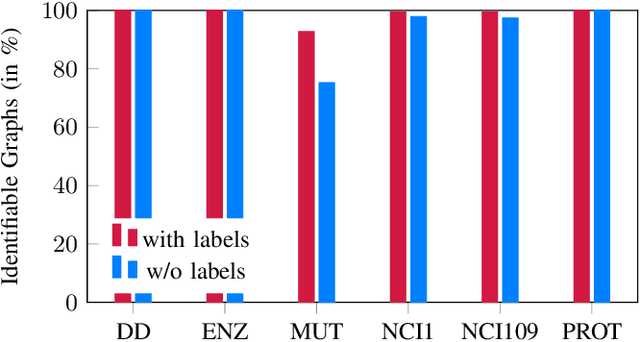

Abstract:It has been shown that message passing neural networks (MPNNs), a popular family of neural networks for graph-structured data, are at most as expressive as the first-order Weisfeiler-Leman (1-WL) graph isomorphism test, which has motivated the development of more expressive architectures. In this work, we analyze whether the limited expressiveness is actually a limiting factor for MPNNs and other WL-based models on standard graph datasets. Interestingly, we find that the expressiveness of WL is sufficient to identify almost all graphs in most datasets. Moreover, we find that the classification accuracy upper bounds are often close to 100%. Furthermore, we find that simple WL-based neural networks and several MPNNs can be fitted to several datasets. In sum, we conclude that the performance of WL/MPNNs is not limited by their expressiveness in practice.
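As background for the 1-WL test mentioned in the abstract, the following is a minimal sketch of 1-WL color refinement on unlabeled graphs. It is a textbook version under simple assumptions (adjacency dictionaries, a fixed number of rounds, a color palette shared across graphs), not the experimental code of the paper; the function name `wl_histograms` and the example graphs are illustrative.

```python
from collections import Counter


def wl_histograms(graphs, rounds=3):
    """Run 1-WL color refinement jointly on several graphs.

    Each graph is a dict mapping a node to a list of its neighbors.
    Returns one color histogram (Counter) per graph. Different histograms
    prove the graphs are non-isomorphic; equal histograms are inconclusive.
    (Hypothetical helper for illustration.)
    """
    # Every node starts with the same color because the graphs are unlabeled.
    colors = [{v: 0 for v in g} for g in graphs]
    for _ in range(rounds):
        palette = {}  # shared across graphs so that color ids are comparable
        refined = []
        for g, col in zip(graphs, colors):
            new = {}
            for v in g:
                # Signature: own color plus the sorted multiset of neighbor colors.
                signature = (col[v], tuple(sorted(col[u] for u in g[v])))
                new[v] = palette.setdefault(signature, len(palette))
            refined.append(new)
        colors = refined
    return [Counter(c.values()) for c in colors]


# Two disjoint triangles and a 6-cycle: both are 2-regular on six nodes.
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1],
                 3: [4, 5], 4: [3, 5], 5: [3, 4]}
hexagon = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}

# A 3-node path and a triangle have different degree sequences.
path = {0: [1], 1: [0, 2], 2: [1]}
triangle = {0: [1, 2], 1: [0, 2], 2: [0, 1]}

same = wl_histograms([two_triangles, hexagon])
diff = wl_histograms([path, triangle])
print(same[0] == same[1])
print(diff[0] == diff[1])
```

The first pair is a standard example of non-isomorphic graphs that 1-WL cannot separate, while the second pair is separated after a single round.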

Learning Analogy-Preserving Sentence Embeddings for Answer Selection

Oct 11, 2019

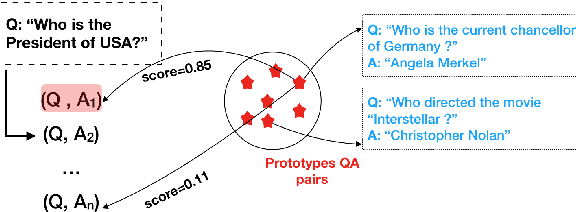

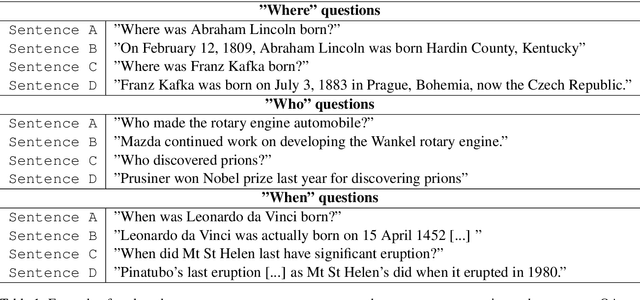

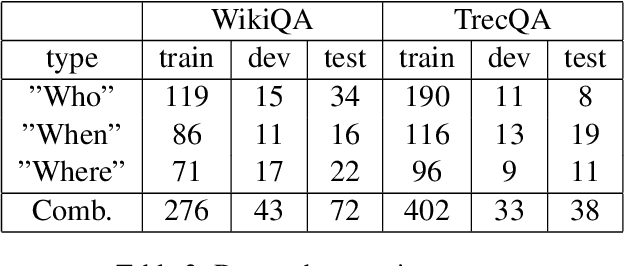

Abstract:Answer selection aims at identifying the correct answer for a given question from a set of potentially correct answers. Contrary to previous works, which typically focus on the semantic similarity between a question and its answer, our hypothesis is that question-answer pairs are often in analogical relation to each other. Using analogical inference as our use case, we propose a framework and a neural network architecture for learning dedicated sentence embeddings that preserve analogical properties in the semantic space. We evaluate the proposed method on benchmark datasets for answer selection and demonstrate that our sentence embeddings indeed capture analogical properties better than conventional embeddings, and that analogy-based question answering outperforms a comparable similarity-based technique.
